Before reading Charles Stross’s novel Rule 34, I’d been under the misapprehension that the title was referring to cellular automata or CAs.

[A reversible 1D CA rule that I dubbed “Axons” in my and John Walker’s Cellab package.]

In the 1980s, the computer scientist Stephen Wolfram enumerated the simplest possible CAs in a list numbered from 0 to 255. Rule 30 is a very good generator of pseudorandom sequences, and Rule 110 is in fact a universal computer, capable of emulating any possible computational process. The CA Rule 34 is, however, a very dull one, which produces patterns of parallel diagonal lines.
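To see why Rule 34 is dull, here is a minimal Python sketch of a one-dimensional, two-state, nearest-neighbor CA using Wolfram's numbering, where the eight binary digits of the rule number give the new cell value for each of the eight possible (left, center, right) neighborhoods. The function names, the wraparound edges, and the single-cell starting row are illustrative assumptions, not code from Cellab.

```python
def step(cells, rule):
    """Apply one update of an elementary CA, treating the row as a ring."""
    n = len(cells)
    out = []
    for i in range(n):
        left, center, right = cells[i - 1], cells[i], cells[(i + 1) % n]
        # Read the neighborhood as a 3-bit number from 0 to 7 ...
        index = (left << 2) | (center << 1) | right
        # ... and use it to pick one bit out of the rule number.
        out.append((rule >> index) & 1)
    return out


def run(rule, width=31, steps=12):
    """Start from a single live cell and return the list of successive rows."""
    cells = [0] * width
    cells[width // 2] = 1
    rows = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        rows.append(cells)
    return rows


def render(rows):
    """Draw live cells as '#' and dead cells as '.'."""
    return "\n".join("".join("#" if c else "." for c in row) for row in rows)


if __name__ == "__main__":
    print(render(run(34)))
```

Under Rule 34 a cell turns on only when it is off and its right-hand neighbor is on, so each live cell simply slides one step to the left per generation. A single seed draws one diagonal line, and a row with many live cells draws many parallel ones. Swapping 34 for 30 or 110 in the last line gives much busier pictures.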

But it turns out “Rule 34” is hacker slang for the dictum: “If it exists, there is porn of it. No exceptions.” (You can find a nice summary of internet rules in this 2009 essay.)

So…Rule 34. Bowling ball porn? Check. Squid porn? But of course. Lightbulb porn? No doubt. Sally Field? Please no.

[Spicy photo of the divine Vanessa Lake with bowling ball, © Vanessa Lake 2012 — found on a Tumblr site called “A Purple Haze of Porn and JD.”]

You can test Rule 34 for whatever entity ____ you want by Googling “porn ____” and then selecting the Image option on the Google results page. You might want to adjust your Safe Search filter level to an ‘adultness’ level you’re comfortable with.

Back in 2013 (when I wrote this post) you used to be able to search “Tumblr porn ____”, with the reason for narrowing down to Tumblr sites being that Tumblr pages weren’t encrusted with intense adware (and possible malware). But now, as I lightly revise it in 2021, Tumblr has cleaned up.

Anyway, the heroine of Stross’s novel Rule 34 is working in the playfully dubbed “Rule 34” branch of the Edinburgh police department, tasked with investigating the more kinky and dangerous things that the online locals might be getting up to.

In recent years, Charles Stross has become my favorite high-tech SF writer. And he’s not working in the sterile, Arthur Clarke mode of futurology, no, he’s writing druggy, antiestablishment satire, in some ways similar to cyberpunk—a mix of nihilistic humor and apocalyptic speculation. (Here’s a page listing Stross’s US editions.)

In 2005, I read Stross’s novel/story-sequence Accelerando. For several years before this, SF writers had been p*ssing and moaning and saying, “Gosh, we really can’t see past the Singularity.” And then Stross just goes in there and plows ahead. Machines as smart as gods? Why not. Hell, even the Greeks knew how to write about gods. You just do it. Pile on the bullsh*t and keep a straight face.

Accelerando gave me the courage to write my own Singularity novel—which I called Postsingular — it exists in paperback and ebook. See also the sequel, Hylozoic.

The notion of a Singularity became a quasi-religious belief among some techies, a millennial conviction that computers would essentially eat everything and we’d all be living in a giant videogame. Stross and Cory Doctorow took this line of thought to a maximal level in their recent novel/story-sequence Rapture of the Nerds, quite a wiggy romp.

But Stross and Doctorow aren’t Johnny One-Notes, not messianic Singulatarians. That whole rap is just one particular goof. Stross’s Heinlein-inspired far-future novel Saturn’s Children is more like retro, old-school SF, a book in which the author actually worries about things like rockets having enough fuel to fly from planet to planet within our solar system. And Doctorow’s engaging novels Makers and Little Brother and Pirate Cinema are tightly linked into near-future possibilities; the latter two might even be viewed as insurrectionary manuals for our youth.

Coming back to Stross’s Rule 34, this book, like its loose prequel, Halting State, is quite close to the present-day world. It’s a world where some AI-type behaviors have emerged among the applications that run on the Web. What do we mean by AI?

Stross observes, “If we understand how we do it, it isn’t artificial intelligence anymore. Playing chess, driving cars, generating conversational text… Perhaps we overestimate consciousness?”

He makes the point “We’re not very interested in reinventing human consciousness in a box. What gets the research grants flowing is applications.”

And, again: “general cognitive engines [are all] hardwired [to] project the seat of their identity onto you … what we really want is identity amplification.”

In my opinion, if you have a really effective AI system, it’s in fact pretty easy to give it a sense of having a conscious self. It’s basically just a matter of equipping your program with a mental image of itself. Here’s a summary of my views of consciousness and AI, adapted from my tome, The Lifebox, The Seashell, and The Soul, available in paperback, ebook, or as a free online webpage version. Here’s a link to the section relevant to what I’m talking about here: Section 4.4 of Rucker’s LIFEBOX Tome: “I Am”.

(Thesis) The slowly advancing work in AI seems to indicate that any clearly described human behavior can be emulated by a machine: if not by an actually constructible machine, then at least by a theoretically possible machine.

(Antithesis) Upon introspection we feel there is a mental residue that isn’t captured by any scientific system; we feel ourselves to be quite unlike machines. This is the sense of having a soul.

(Synthesis) Sensing that you have a soul (or, more simply, feeling a sense of “I am”) can be modelled by equipping your mental computation with a self-symbol, setting up a “movie-in-the-brain” emulation of the self-symbol in the world, and then going one step further to tabulate the ongoing feelings of your self-symbol as it watches the mental movie. And the program watches the movie, and itself in the movie, and the tabulations of its feelings about itself and the movie…and that’s what it means to be conscious.

I admit that the synthesis step is a little confusing the first time you hear about it. I had to think about it for several years before it made sense. And, truth be told, I’m still revising it every time I come back to it. I got the idea from Antonio Damasio, The Feeling of What Happens.

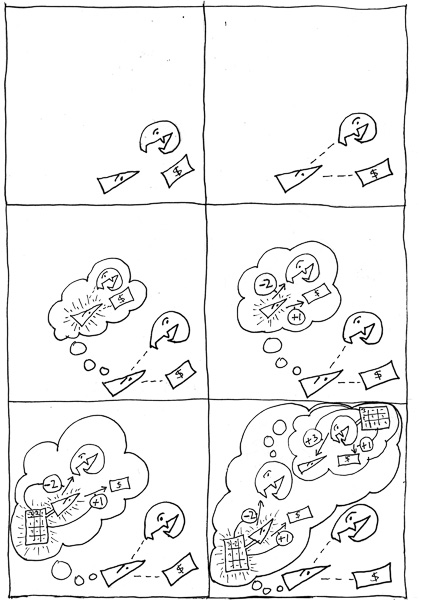

And here’s an illustration from that same section of my The Lifebox, the Seashell, and the Soul, showing a game-design-style sequence of building up to the simulation of consciousness…and on beyond consciousness to empathy. I was teaching courses on videogame programming when I came up with this diagram…which took me a very long time to figure out.

Left to right and top to bottom, the six cartoon frames represent, respectively:

*** immersion (you are the triangle critter)

*** seeing objects

*** movie-in-the-brain with self

*** feelings

*** core consciousness (movie-in-the-brain with self-and-feelings)

*** empathy (imagining another’s core consciousness)

In this cartoon, core consciousness is represented as a weighting table of “feelings.” Buckminster Fuller used to say, “I seem to be a verb.” Here we might say, “I seem to be a self-modifying lookup table.”

But I’m off on a tangent here—emulating consciousness isn’t a main theme in Rule 34 —although, near the end, Stross can’t resist dropping the reader into the stream of consciousness of an intelligent program.

Most of the novel works to dramatize the fact that we can go a long way towards the illusion of intelligence with a large database, some clever search software and a smidgen of creative intelligence. To some extent, we’re simply beating the problem to death by having faster and bigger computers.

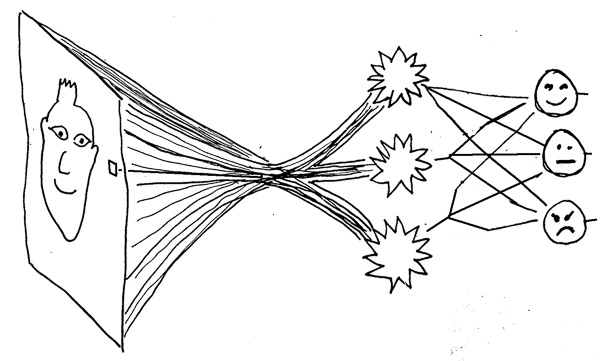

Where does that extra pinch of AI come from? Do we need a big insight into how we think? Maybe not. The AI programming method known as neural nets works by letting a machine program learn and get smarter. Given enough time and hardware, it may be that neural nets can bring us to something that feels like AI. Even though we won’t know, in any exact sense, how it works. So, once again, we’ll just have a huge database with a neural net that’s self-evolved a zillion effective weights to put onto the links between inputs and possible outputs.

(A great description of this process can be found in an excellent but less-than-well-known book On Intelligence by Jeff Hawkins and Sandra Blakeslee.)
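As a toy picture of what “weights on the links between inputs and outputs” means, here is a minimal sketch of a single artificial neuron (a perceptron) that learns the logical AND of two inputs by nudging its weights whenever it guesses wrong. This is only an assumed illustration of the general idea: it is far smaller than any real neural net, the function names are made up for the example, and it isn't taken from Stross or from On Intelligence.

```python
def predict(weights, bias, inputs):
    """Fire (return 1) if the weighted sum of the inputs exceeds zero."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, lr=1):
    """Learn weights from (inputs, target) pairs with the perceptron rule."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for inputs, target in samples:
            # error is +1, 0, or -1; a wrong guess shifts each weight
            # toward the answer that would have been right.
            error = target - predict(weights, bias, inputs)
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


if __name__ == "__main__":
    and_gate = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
    weights, bias = train_perceptron(and_gate)
    for inputs, target in and_gate:
        print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

Nobody tells the program which weights to use; it stumbles onto a working set by trial and correction. With two weights you can still read off what it learned. With a zillion of them you can't, which is the point.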

The end result is a construct too complicated to design. It can only evolve. Stross sees the evolution as happening “in the wild,” that is, in the context of AI spam generators versus AI smart filters. “…much spam is generated by drivel-speaking AI, designed purely to fool the smart filters by convincing them that it’s the effusion of a real human being… Slowly but surely the Turing Test war proceeds…”

[A neural net that takes a bundle of pixel-level inputs and decides what expression a face has.]

On another note, I relished Stross’s wry and realistic view of which of our past dreams do and do not come true:

“Even when they’re working, online conferencing systems just aren’t quite good enough to make face-to-face meetings obsolete. Working teleconferencing is right around the corner, just like food pills, the flying car, and energy too cheap to meter.”

And: “Reliable automatic face recognition is right around the corner next week, next year, next decade, just like it’s always been.”

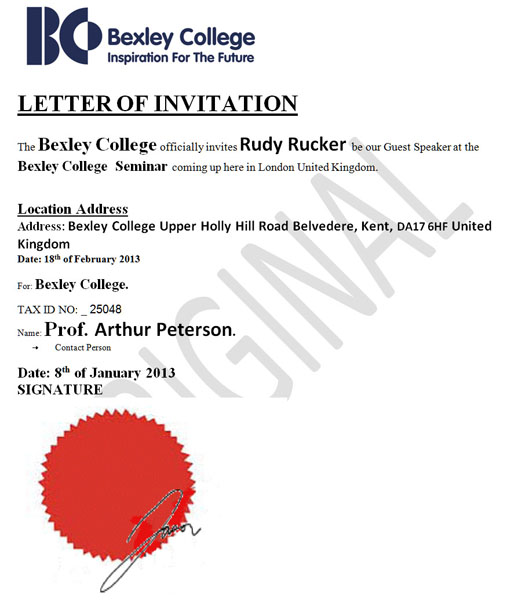

Last week, I was nearly scammed by some Nigerian(?) people (or bots) who pretended to invite me to give a talk in London, all expenses paid, with a good speaker’s fee, under the condition that I obtain a UK work permit which would, it slowly came out, cost $1400, to be paid in cash via Western Union (and at this point I balked). What made the scam initially believable was that a variety of different addresses were emailing me about it. Stross explains this tactic in Rule 34.

“I have Junkbot establish a bunch of sock puppets… Junkbot then engages [the target person] in several conversation scripts in parallel. A linear chat-up rarely works—people are too suspicious these days—but you can game them. Set up an artificial reality game … built around your victim’s world, with a bunch of sock puppets who are there to sucker them into the drama.”

The Phil Dick option: What if all your email friends are sock puppets? What is it that they’re trying to make you do?

January 14th, 2013 at 10:55 pm

I really enjoyed the little 6-panel discussion about consciousness. The idea that we might be driven by “ever changing lookup tables” certainly goes a long way towards explaining a lot of human behavior. Unfortunately, it seems many lookup tables don’t change at the macro level…

January 15th, 2013 at 10:30 am

That quote about spam generators vs. filters, and the Turing Test made me laugh when I read it. A couple months before “Rule 34” came out I spotted a spam comment on Charlie’s blog; it started with something like “I really like the colors on your website…” and was followed by a couple of non-sequitur sentences, as if a bot had cut and pasted random bits of text. I left a comment pointing it out, ending it with “Turing Test fail?” Obviously he’d been thinking about it for a while, not surprising considering the amount of spam his blog was getting hit with at the time.

You weren’t the only one they tried to scam, from John Scalzi’s blog:

http://whatever.scalzi.com/2013/01/07/scam-attempt-warning-for-sff-writers/

January 16th, 2013 at 12:46 pm

RULE 34

I fear that you’ll never respect me

That our long-time friendship is done

When you find out what really infects me:

The DIRAC EQUATION makes me c*m!

January 20th, 2013 at 4:05 pm

You can get pretty far by doing a little statistical analysis on the comments section of a website to generate something like a Markov Chain that can produce text that looks reasonable (at first glance).
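A minimal sketch of that idea, assuming a word-level chain where each word is followed by a word that followed it somewhere in the source text. The function names and the sample sentence are made up for illustration; a real spam bot would train on a much larger pile of scraped comments.

```python
import random
from collections import defaultdict


def build_chain(text, order=1):
    """Map each run of `order` words to the words seen right after it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        key = tuple(words[i:i + order])
        chain[key].append(words[i + order])
    return chain


def generate(chain, length=12, seed=0):
    """Walk the chain from a random starting state, up to `length` words."""
    rng = random.Random(seed)
    state = rng.choice(sorted(chain))
    out = list(state)
    for _ in range(length - len(state)):
        followers = chain.get(state)
        if not followers:
            break  # dead end: this state was never followed by anything
        out.append(rng.choice(followers))
        state = tuple(out[-len(state):])
    return " ".join(out)


if __name__ == "__main__":
    sample = ("i really like the colors on your website and "
              "i really like the layout of your blog")
    print(generate(build_chain(sample), length=10, seed=1))
```

Every adjacent pair of words in the output occurred somewhere in the input, which is why the result looks plausible at first glance and falls apart on a second reading.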

January 22nd, 2013 at 11:20 pm

Follow-up: I decided to test your theory, so I tried images.google.com with the query “cellular automata porn”. I almost laughed out loud at one of the results: http://4.bp.blogspot.com/-Mk1-186zz_U/Tcs7b47ipoI/AAAAAAAADnY/3IxP_92sH5Q/s1600/zz18.jpg

It bears a striking resemblance to a certain author.