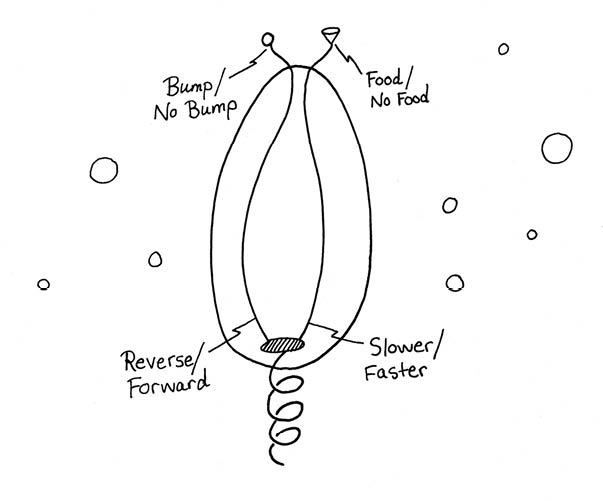

• See book's home page for info and buy links.

• Go to web version's table of contents.

4. Enjoying Your Mind

We’re fortunate enough to be able to observe minds in action all day long. You can make a fair amount of progress on researching the mind by paying close attention to what passes through your head as you carry out your usual activities.

Doing book and journal research on the mind is another story. Everybody has their own opinion—and everybody disagrees. Surprising as it may seem, at this point in mankind’s intellectual history, we have no commonly accepted theory about the workings of our minds.

In such uncharted waters, it’s the trip that counts, not the destination. At the very least, I’ve had fun pondering this chapter, in that it’s made me more consciously appreciative of my mental life. I hope it’ll do the same for you—whether or not you end up agreeing with me.

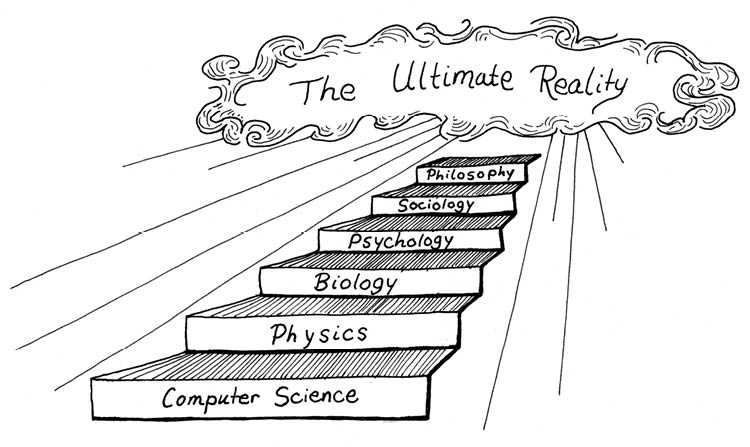

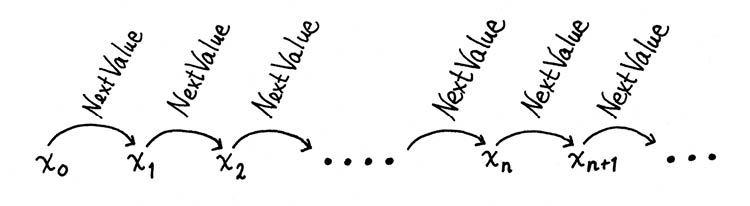

As in the earlier chapters, I’ll speak of a hierarchy of computational processes. Running from the lowest to the highest, I’ll distinguish among the following eight levels of mind, devoting a section to each level, always looking for connections to notions of computation.

• 4.1: Sensational Emotions. Sensation, action, and emotion.

• 4.2: The Network Within. Reflexes and learning.

• 4.3: Thoughts as Gliders and Scrolls. Thoughts and moods.

• 4.4: “I Am.” Self-awareness.

• 4.5: The Lifebox. Memory and personality.

• 4.6: The Mind Recipe. Cogitation and creativity.

• 4.7: What Do You Want? Free will.

• 4.8: Quantum Soul. Enlightenment.

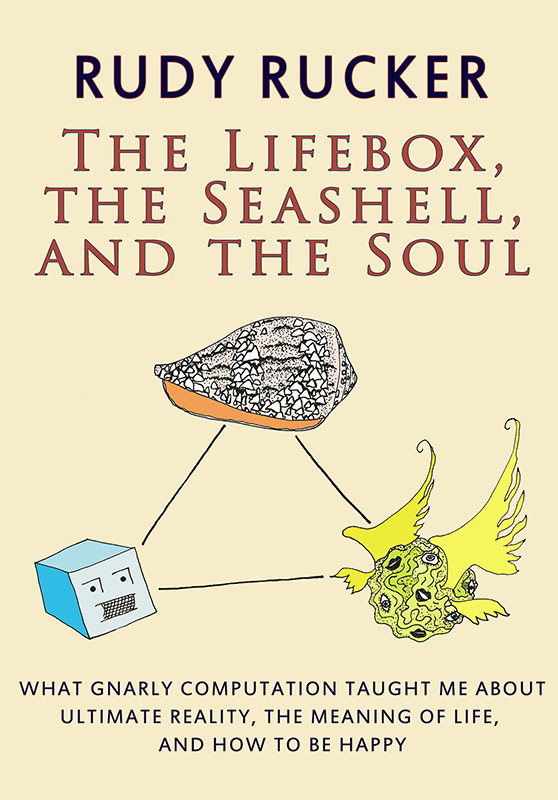

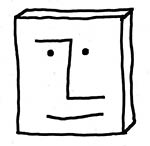

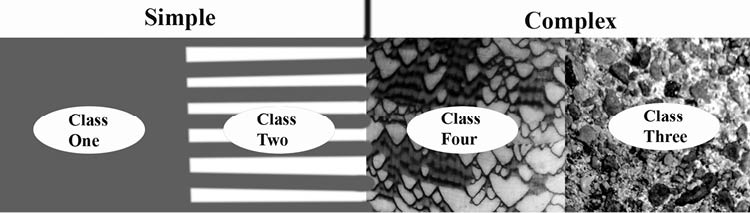

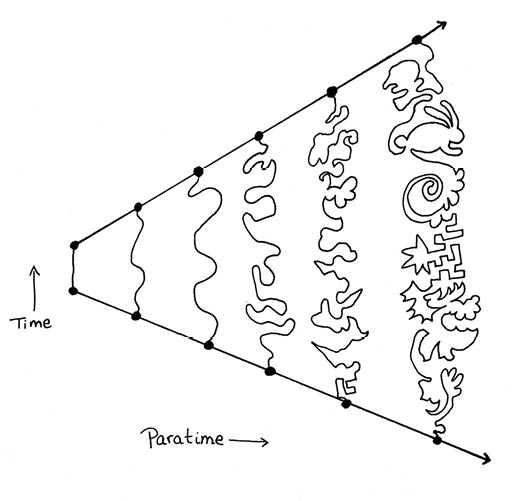

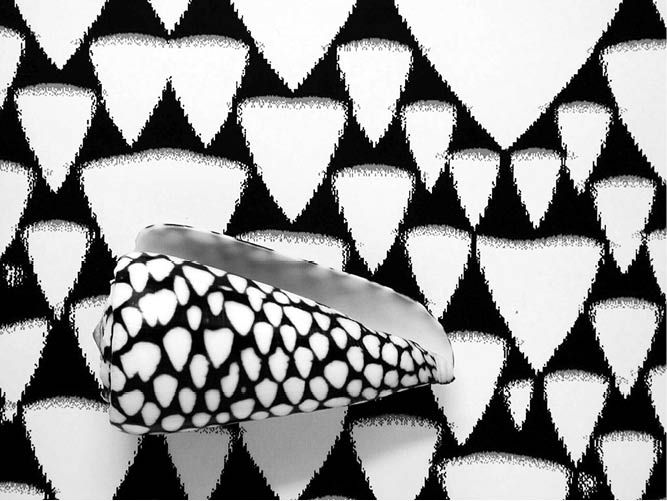

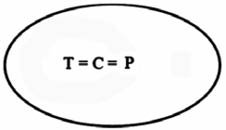

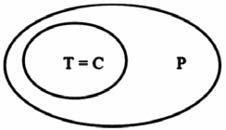

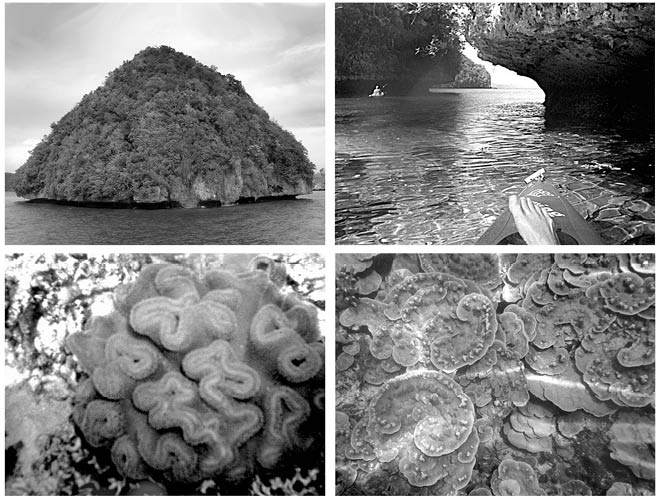

One of my special goals here will be to make good on the dialectic triad embodied in the title, The Lifebox, the Seashell, and the Soul. As I mentioned earlier, there’s a tension between the computational “lifebox” view of the mind vs. one’s innate feeling of being a creative being with a soul. I want to present the possibility that the creative mind might be a kind of class four computation akin to a scroll-generating or cone-shell-pattern-generating cellular automaton.

But class four computation may not be the whole story. In the final section of the chapter I’ll discuss my friend Nick Herbert’s not-quite-relevant but too-good-to-pass-up notion of viewing the gap between deterministic lifebox and unpredictable soul as relating to a gap between the decoherent pure states and coherent superposed states of quantum mechanics.


4.1: Sensational Emotions

Mind level one—sensation, action, and emotion—begins with the fact that, in the actual world, minds live in physical bodies. Having a body situated in a real world allows a mind to receive sensations from the world, to act upon the world, and to observe the effects of these actions.

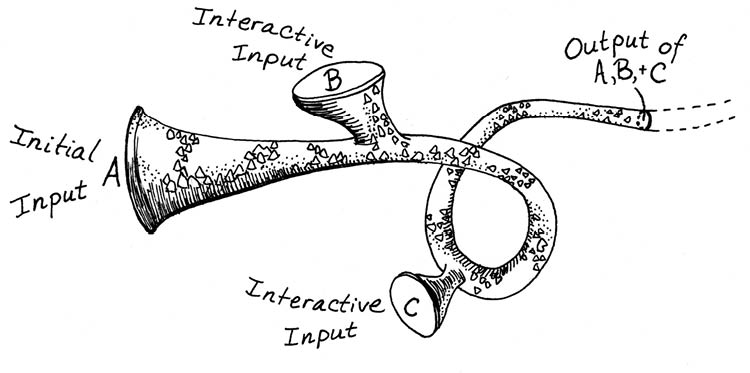

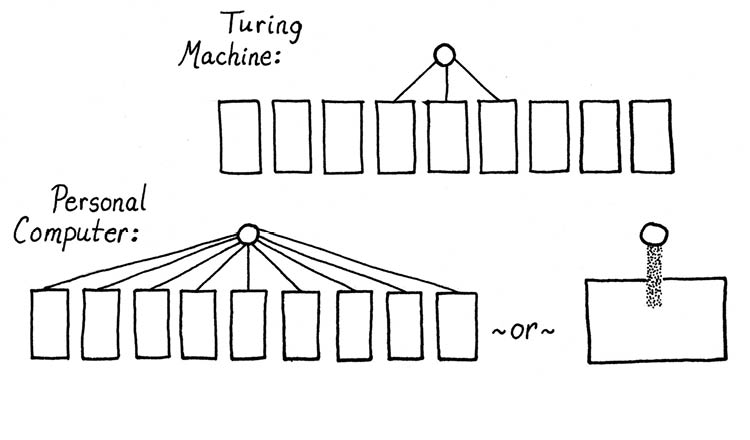

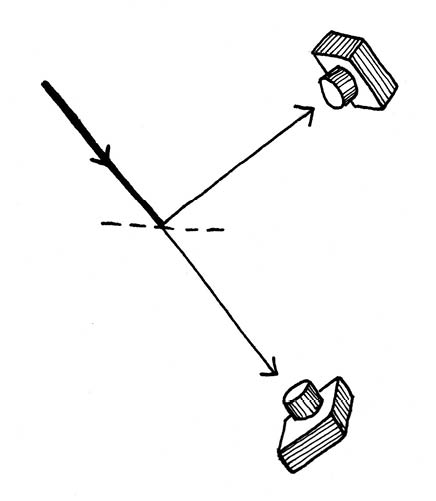

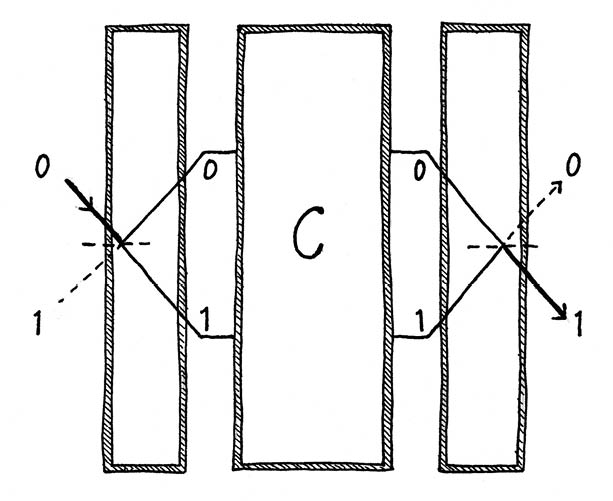

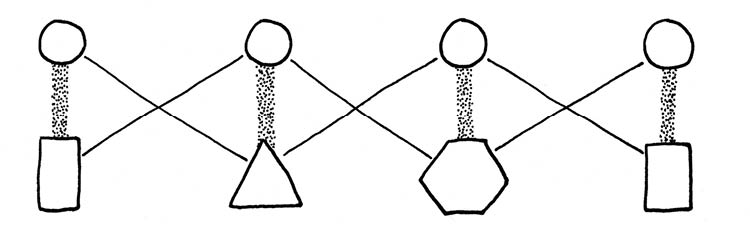

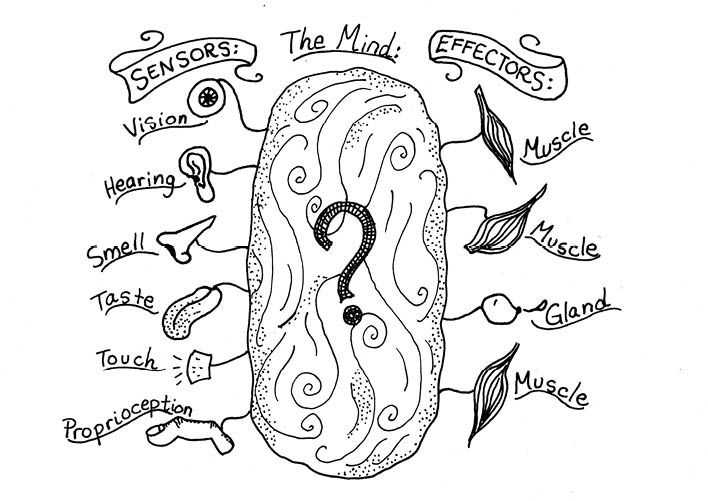

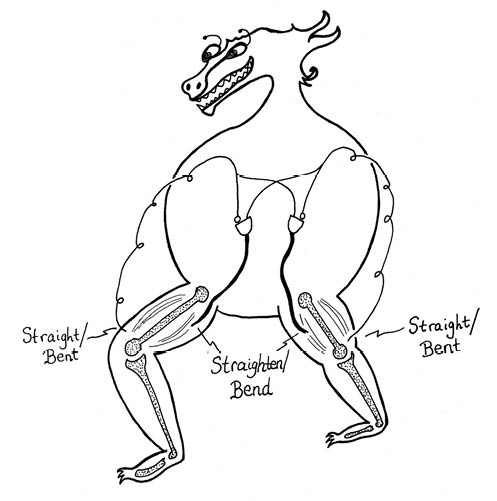

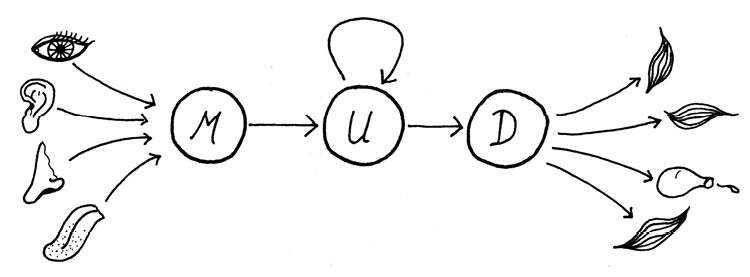

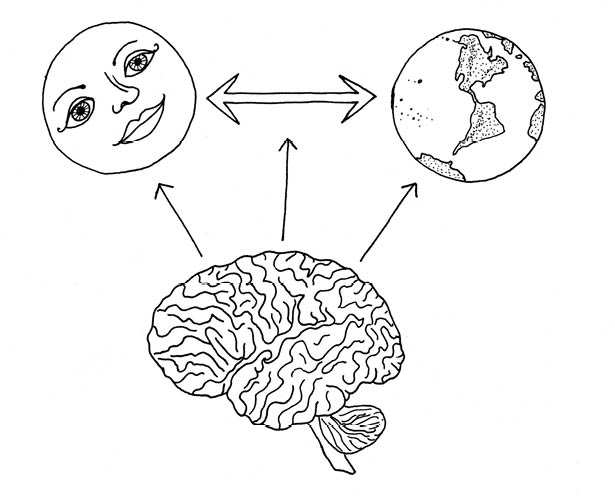

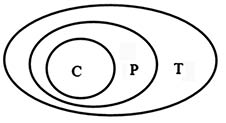

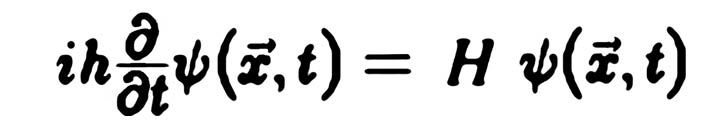

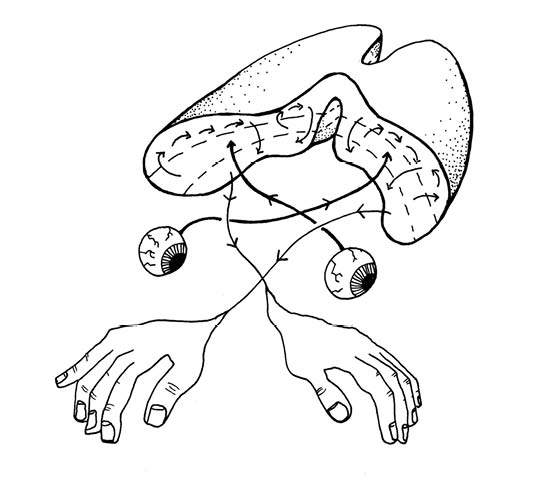

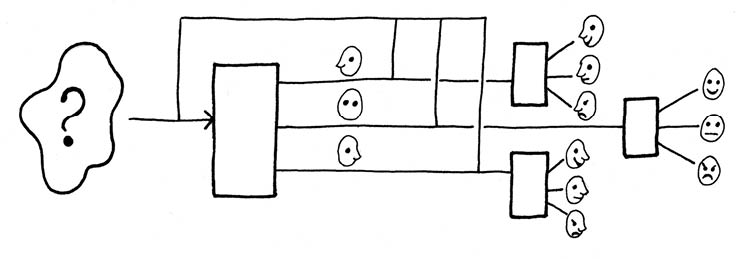

We commonly use the term sensors for an organism’s channels for getting input information about the world, and the term effectors for the output channels through which the organism does things in the world—moving, biting, growing, and so on (see Figure 79).

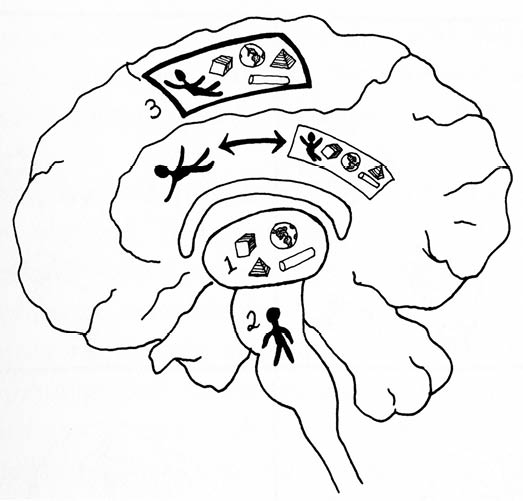

Figure 79: A Mind Connects Sensors to Effectors

The lump in between the sensors and the effectors represents the organism’s mind. The not-so-familiar word proprioception refers to the awareness of the relative positions of one’s joints and limbs.

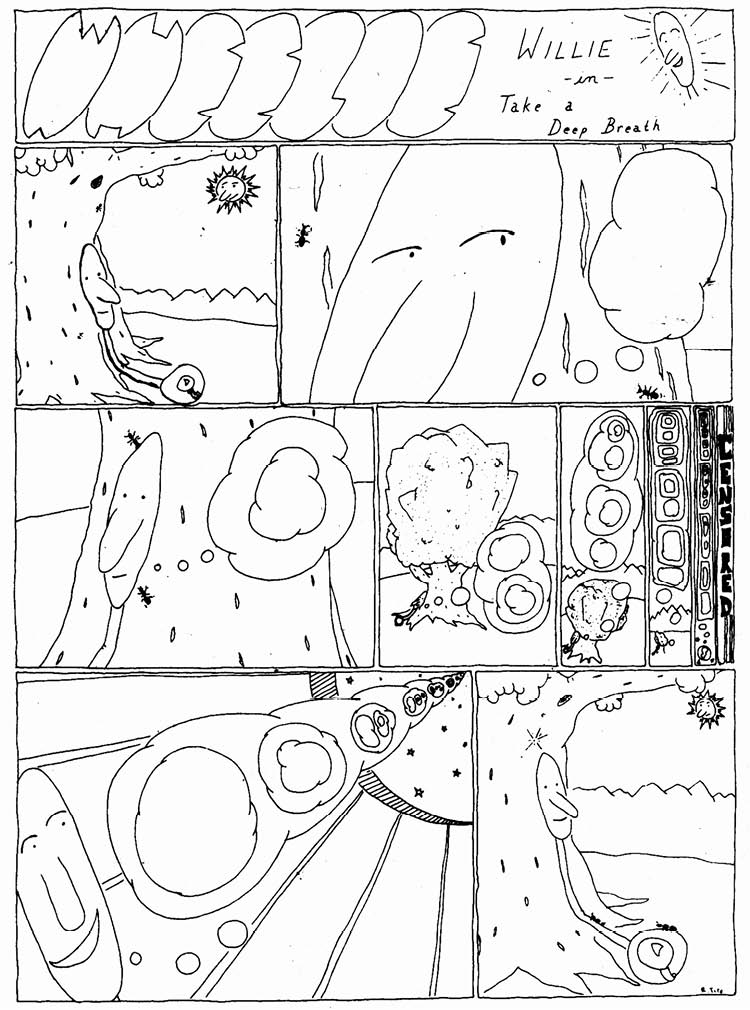

Being embedded in the world is a given for any material entity—human, dog, robot, or boulder. Paradoxically enough, the goal of high-level meditative practices is to savor fully this basic level of being. Meditation often begins by focusing on the simplest possible action that a human takes, which is breathing in and out.

Although being embedded in the world comes naturally for physical beings, if you’re running an artificial-life (a-life) program with artificial organisms, your virtual creatures won’t be embedded in a world unless you code up some sensor and effector methods they can use to interact with their simulated environment.

The fact that something which is automatic for real organisms requires special effort for simulated organisms may be one of the fundamental reasons why artificial-life and simulated evolution experiments haven’t yet proved as effective as we’d like them to be. It may be that efforts to construct artificially intelligent programs for personal computers (PCs) will founder until the PCs are put inside robot bodies with sensors and effectors that tie them to the physical world.

In most of this chapter, I’m going to talk about the mind as if it resides exclusively in the brain. But really your whole body participates in your thinking.

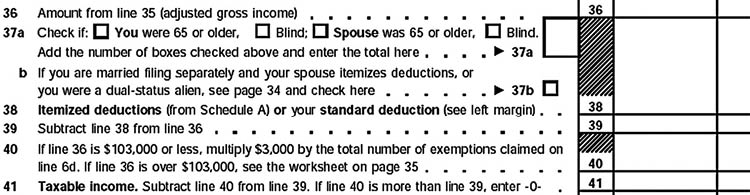

Regarding the mind-brain-body distinction, I find it amusing to remember how the ancient Egyptians prepared a body for mummification (see Table 9). Before curing and wrapping the corpse’s muscles and bones, they’d eviscerate the body and save some key organs in so-called canopic jars. These jars were carved from alabaster stone, each with a fancy little sculpture atop its lid. Being a muscle, the heart was left intact within the body. And what about the brain? The ancient Egyptians regarded the brain as a worthless agglomeration of mucus; they pulled it out through the nose with a hooked stick!

| Organ | Fate |
| --- | --- |
| Intestines | Preserved in falcon-headed canopic jar |
| Stomach | Preserved in jackal-headed canopic jar |
| Liver | Preserved in human-headed canopic jar |
| Lungs | Preserved in baboon-headed canopic jar |
| Heart | Mummified with the body |
| Brain | Pulled out the nose and fed to the dogs |

Table 9: What Ancient Egyptians Did with a Mummy’s Organs

The degree to which we’ve swung in the other direction can be seen by considering the science-fictional notion of preserving a person as a brain in a jar. If you can’t afford to court immortality by having your entire body frozen, the cryonicists at the Alcor Life Extension Foundation are glad to offer you what they delicately term the neuropreservation option—that is, they’ll freeze your brain until such a time as the brain-in-a-jar or grow-yourself-a-newly-cloned-body technology comes on line. But the underlying impulse is, of course, similar to that of the ancient Egyptians.

Anyway, my point was that in some sense you think with your body as well as with your mind. This becomes quite clear when we analyze the nature of emotions. Before taking any physical action, we get ourselves into a state of readiness for the given motions. The whole body is aroused. The brain scientist Rodolfo Llinas argues that this “premotor” state of body activation is what we mean by an emotion.77

One of the results of becoming socialized and civilized is that we don’t in fact carry out every physical action that occurs to us—if I did, my perpetually malfunctioning home and office PCs would be utterly thrashed, with monitors smashed in, wires ripped out, and beige cases kicked in. We know how to experience an emotional premotor state without carrying out some grossly inappropriate physical action. But even the most tightly controlled emotions manifest themselves in the face and voice box, in the lower back, in the heart rate, and in the secretions of the body’s glands.

One of the most stressful things you can do to yourself is to spend the day repeating a tape loop of thoughts that involves some kind of negative emotion. Every pulse of angry emotion puts your body under additional activation. But if that’s the kind of day you’re having—oh well, accept it. Being unhappy’s bad enough without feeling guilty about being unhappy!

Given the deep involvement of emotions with the body, it’s perhaps questionable whether a disembodied program can truly have an emotion, despite the classic example of HAL in 2001: A Space Odyssey. But, come to think of it, HAL’s emotionality was connected with his hardware, so in that sense he did have a body. Certainly humanoid robots will have bodies, and it seems reasonable to think of them as having emotions. Just as a person does, a robot would tend to power up its muscle motors before taking a step. Getting ready to fight someone would involve one kind of preparation, whereas preparing for a nourishing session of plugging into an electrical outlet would involve something else. It might be that robots could begin to view their internal somatic preparations as emotions. And spending too much time in a prefight state could cause premature wear in, say, a robot’s lower-back motors.

A slightly different way to imagine robot emotions might be to suppose that a robot continually computes a happiness value that reflects its status according to various measures. In planning what to do, a robot might internally model various possible sequences of actions and evaluate the internal happiness values associated with the different outcomes—not unlike a person thinking ahead to get an idea of the emotional consequences of a proposed action. The measures used for a robot “happiness” might be a charged battery, no hardware error-messages, the successful completion of some tasks, and the proximity of a partner robot. Is human happiness so different?
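To make the happiness-value idea concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than a description of any real robot: the state fields, the equal weighting of the four measures, the three actions, and their effects are all made up. It scores a state using the four measures named above, then models every two-step sequence of actions internally and picks the one whose outcome scores highest.

```python
from itertools import product

def happiness(state):
    """Combine the four measures from the text into one score between 0 and 1.
    The equal 0.25 weights are an arbitrary assumption."""
    score = 0.25 * state["battery"]                        # charge level, 0.0 to 1.0
    score += 0.25 * (1.0 if state["errors"] == 0 else 0.0)  # no hardware error messages
    score += 0.25 * min(state["tasks_done"], 4) / 4         # credit capped at four tasks
    score += 0.25 * (1.0 if state["partner_near"] else 0.0)
    return score

def apply_action(state, action):
    """Hypothetical effects of three made-up actions on a copy of the state."""
    new = dict(state)
    if action == "recharge":
        new["battery"] = min(1.0, new["battery"] + 0.5)
    elif action == "work":
        new["battery"] = max(0.0, new["battery"] - 0.2)
        new["tasks_done"] += 1
    elif action == "visit":
        new["battery"] = max(0.0, new["battery"] - 0.1)
        new["partner_near"] = True
    return new

def best_plan(state, actions=("recharge", "work", "visit"), depth=2):
    """Model every action sequence of the given length and return the happiest one."""
    def outcome(plan):
        s = state
        for a in plan:
            s = apply_action(s, a)
        return happiness(s)
    return max(product(actions, repeat=depth), key=outcome)

robot = {"battery": 0.4, "errors": 0, "tasks_done": 0, "partner_near": False}
print(happiness(robot), best_plan(robot))
```

The exhaustive search over plans is only workable because the toy has three actions and looks two steps ahead; a robot with a richer repertoire would need a cleverer search.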


4.2: The Network Within

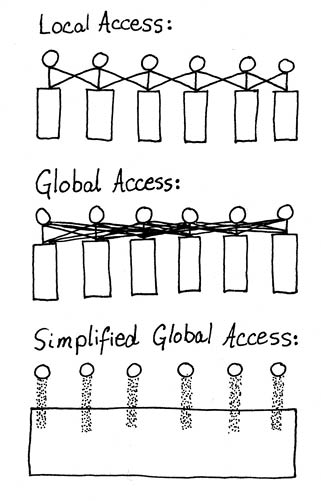

Looking back at Figure 79, what goes inside the lump between the sensors and the effectors? A universal automatist would expect to find computations in there. In this section, I’ll discuss some popular computational models of how we map patterns of sensory stimulation into patterns of effector activation.

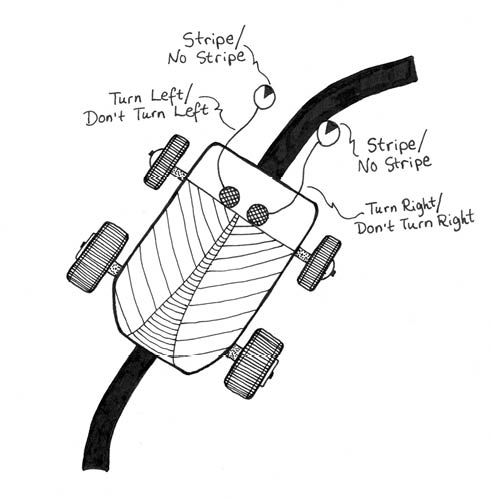

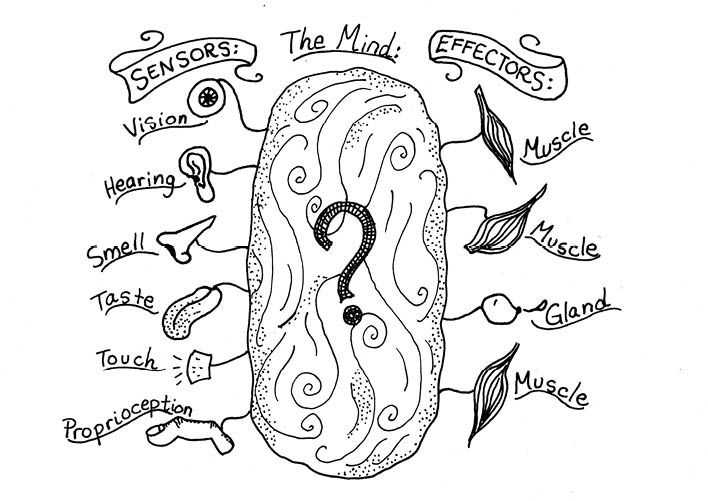

Perhaps the simplest possible mind component is a reflex: If the inputs are in such and such a pattern, then take this or that action. The most rudimentary reflex connects each sensor input to an effector output. A classic example of this is a self-steering toy car, as shown in Figure 80.

Figure 80: A Toy Car That Straddles a Stripe

The eyes stare down at the ground. When the right eye sees the stripe, this means the car is too far to the left and the car swerves right. Likewise for the other side.
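The stripe-straddling reflex is simple enough to write out directly. The following Python sketch is a toy under stated assumptions: the car's sideways position x is measured from the center of the stripe, positive x is to the right, and the stripe width, eye spacing, and steering gain are invented numbers.

```python
def steer(left_eye_sees_stripe, right_eye_sees_stripe):
    """The whole 'mind' of the car: -1 swerves left, +1 swerves right, 0 goes straight."""
    if right_eye_sees_stripe and not left_eye_sees_stripe:
        return 1    # car has drifted too far left
    if left_eye_sees_stripe and not right_eye_sees_stripe:
        return -1   # car has drifted too far right
    return 0

def eye_sees_stripe(eye_x, stripe_half_width=0.5):
    """An eye sees the stripe when it is directly above it (assumed stripe width of 1.0)."""
    return abs(eye_x) <= stripe_half_width

def drive(x, steps=50, eye_offset=1.0, gain=0.1):
    """Let the reflex run for a number of ticks and return the final sideways position."""
    for _ in range(steps):
        command = steer(eye_sees_stripe(x - eye_offset),   # left eye
                        eye_sees_stripe(x + eye_offset))   # right eye
        x += gain * command
    return x

print(drive(-0.8), drive(0.8), drive(0.0))
```

With these numbers a car that starts 0.8 units off-center on either side gets nudged back until neither eye sees the stripe. If the car starts so far off that both eyes miss the stripe, the reflex has nothing to go on and the car simply keeps driving straight.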

Our minds include lots of simple reflexes—if something sticks in your throat, you cough. If a bug flies toward your eye, you blink. When your lungs are empty you breathe in; when they’re full you breathe out.

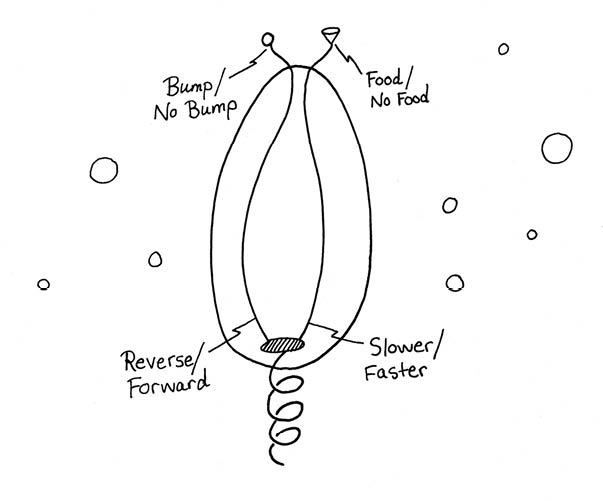

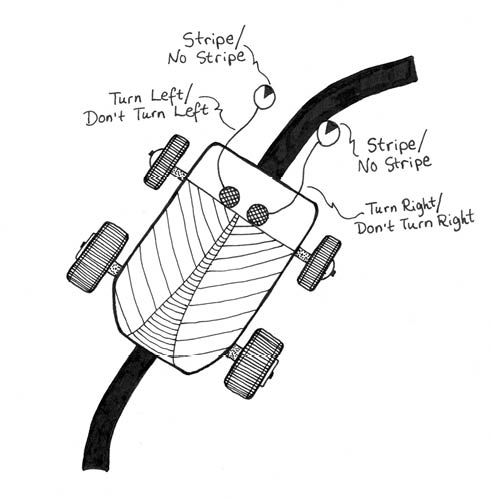

Microorganisms get by solely on reflexes—a protozoan swims forward until it bumps something, at which time it reverses direction. If the water holds the scent of food, the creature slows down, otherwise it swims at full speed. Bumping and backing, speeding and slowing down, the animalcule finds its way all around its watery environment. When combined with the unpredictable structures of the environment, a protozoan’s bump-and-reverse reflex together with its slow-down-near-food reflex are enough to make the creature seem to move in an individualistic way.

Looking through a microscope, you may even find yourself rooting for this or that particular microorganism—but remember that we humans are great ones for projecting personality onto the moving things we see. As shown in Figure 81, the internal sensor-to-effector circuitry of a protozoan can be exceedingly simple.

Figure 81: A Flagellate’s Swimming Reflexes

I’m drawing the little guy with a corkscrew flagellum tail that he can twirl in one direction or the other, at a greater or lesser speed. One sensor controls the speed and the other sensor controls the direction of the twirling.
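Here is a rough Python sketch of the two flagellate reflexes, set in a one-dimensional tank. The tank length, food position, scent radius, and speeds are all assumptions chosen for illustration; the only things taken from the text are the bump-and-reverse reflex and the slow-down-near-food reflex.

```python
def flagellate_step(pos, direction, tank_length, food_pos,
                    full_speed=1.0, slow_speed=0.25, scent_radius=2.0):
    """One tick of swimming. Returns the new position and twirl direction."""
    # Scent sensor controls the twirl speed: slow down where the water smells of food.
    speed = slow_speed if abs(pos - food_pos) <= scent_radius else full_speed
    new_pos = pos + direction * speed
    # Bump sensor controls the twirl direction: hitting a wall reverses the flagellum.
    if new_pos < 0.0 or new_pos > tank_length:
        return pos, -direction
    return new_pos, direction

def fraction_of_time_near_food(steps=200, tank_length=10.0, food_pos=7.0,
                               scent_radius=2.0):
    """Run the creature and report how much of its time it spends in the scented zone."""
    pos, direction, near = 0.0, 1, 0
    for _ in range(steps):
        pos, direction = flagellate_step(pos, direction, tank_length, food_pos,
                                         scent_radius=scent_radius)
        if abs(pos - food_pos) <= scent_radius:
            near += 1
    return near / steps

print(fraction_of_time_near_food())
```

Although the scented zone covers only four tenths of this tank, the creature ends up spending well over half its time there, simply because it lingers where it moves slowly. No planning is involved.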

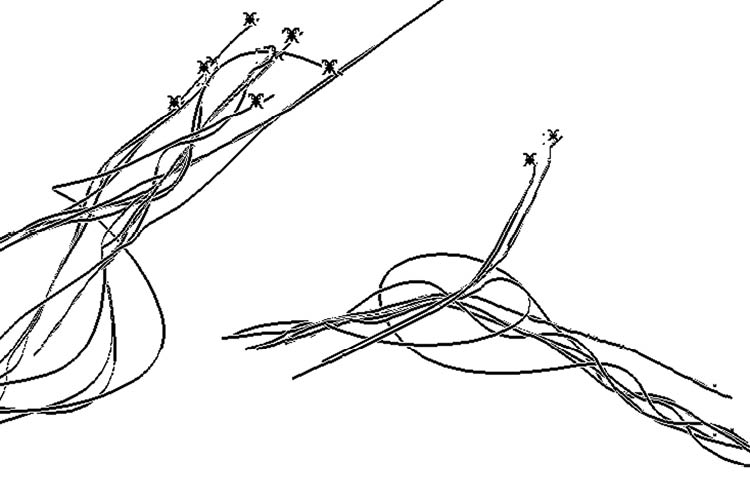

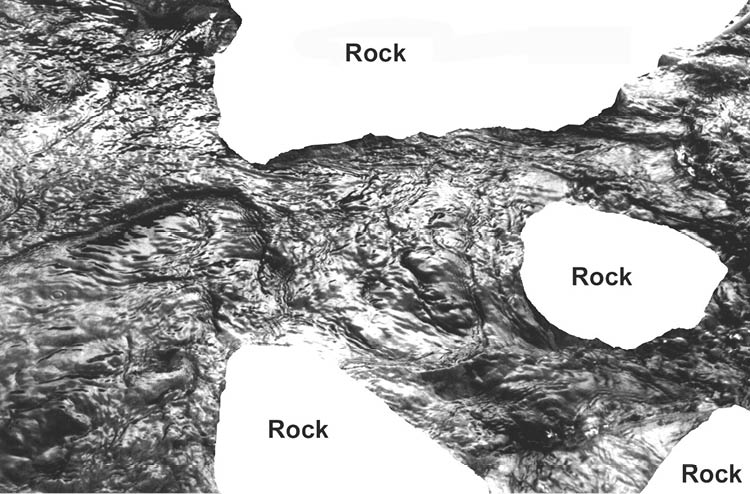

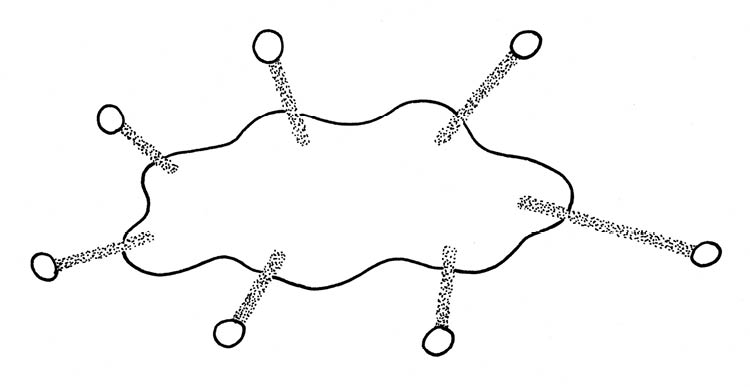

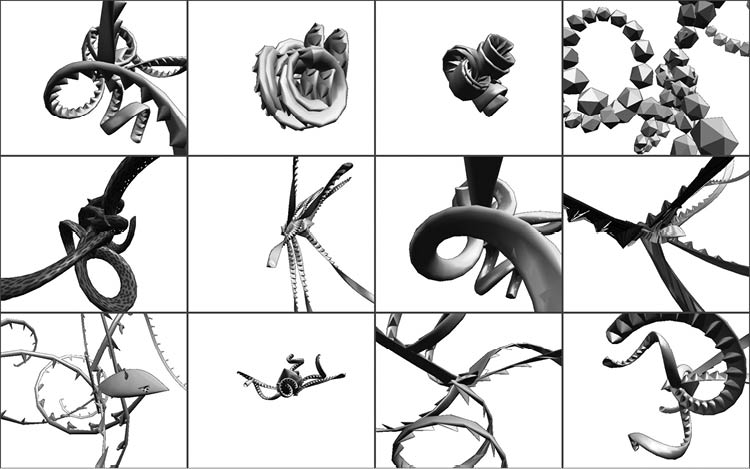

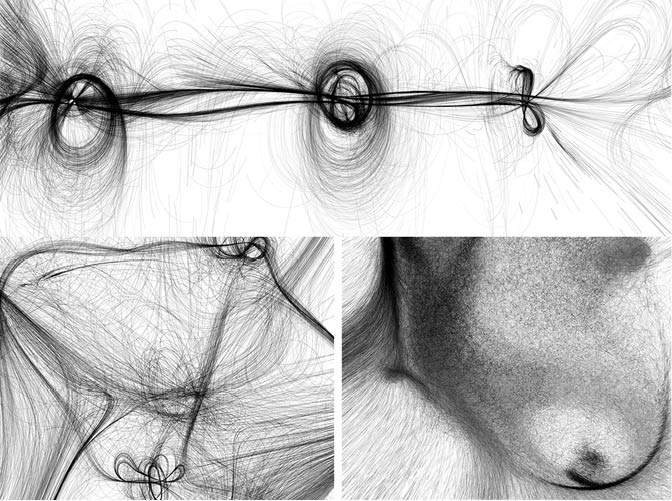

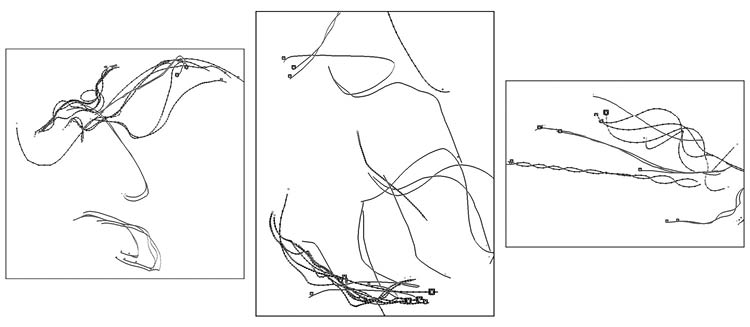

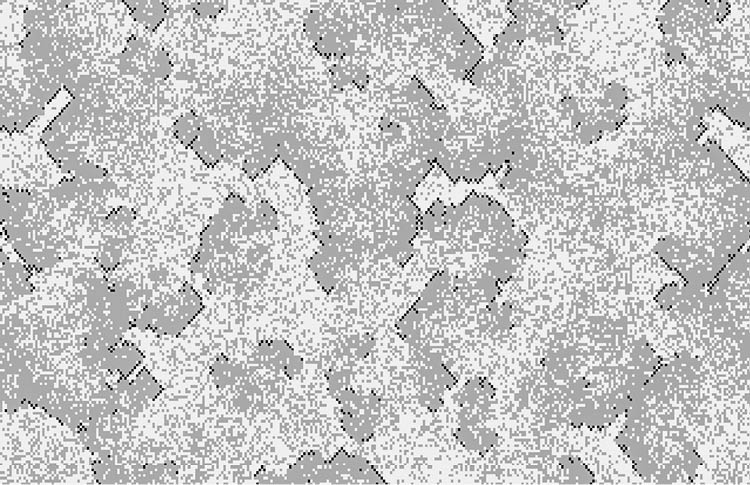

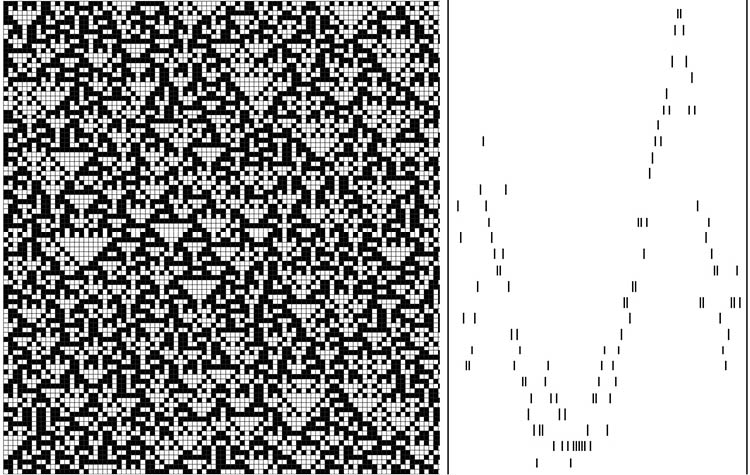

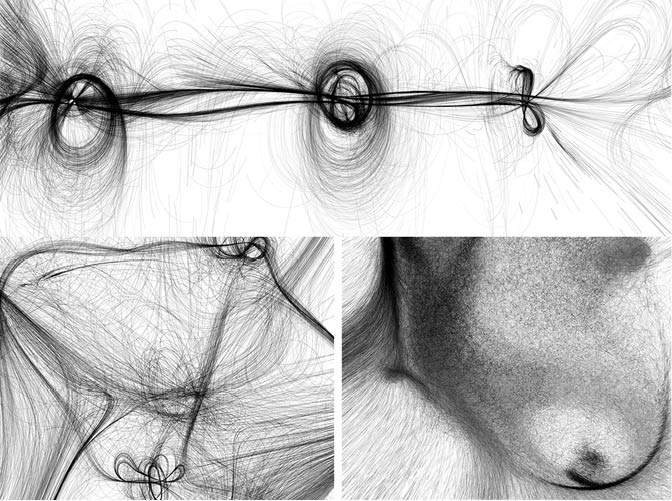

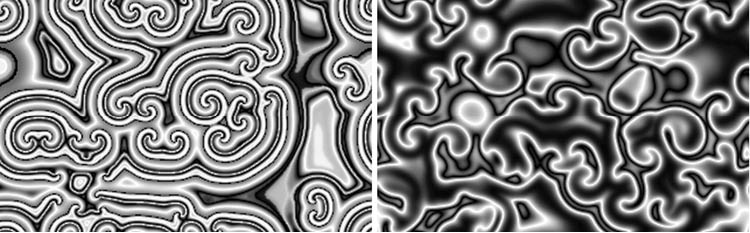

In the early 1980s, brain researcher Valentino Braitenberg had the notion of describing a series of thought experiments involving the motions of simple “vehicles” set into a plane with a few beacon lights.78 Consider for instance a vehicle with the reflex “approach any light in front of you until it gets too bright, then veer to the dimmer side.” If we were to set down a vehicle of this kind in a plane with three lights, we would see an interesting circling motion, possibly chaotic. Since Braitenberg’s time, many programmers have amused themselves either by building actual Braitenberg vehicles or by writing programs to simulate little worlds with Braitenberg vehicles moving around. If the virtual vehicles are instructed to leave trails on the computer screen, knotted pathways arise—not unlike the paths that pedestrians trample into new fallen snow. Figure 82 shows three examples of Braitenberg simulations.

Figure 82: Gnarly Braitenberg Vehicles

The artist and UCLA professor Casey Reas produced these images by simulating large numbers of Braitenberg vehicles that steer toward (or away from) a few “light beacons” placed in their plane worlds. These particular images are called Tissue, Hairy Red, and Path, and are based on the motions of, respectively, twenty-four thousand, two hundred thousand, and six hundred vehicles reacting to, respectively, three, eight, and three beacons. To enhance his images, Reas arbitrarily moves his beacons now and then. He uses his programs to create fine art prints of quite remarkable beauty (see www.groupc.net).
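For readers who want to try this themselves, here is a minimal Python sketch of a single-reflex vehicle. It is not Reas's program, and it uses a simpler reflex than the one quoted above: the vehicle has two eyes and always turns toward whichever eye sees more light. The brightness formula, eye geometry, speed, and turning rate are all assumptions.

```python
import math

def brightness(x, y, beacons):
    """Light level at (x, y); each beacon's glow fades with squared distance (assumed law)."""
    return sum(1.0 / (1.0 + (x - bx) ** 2 + (y - by) ** 2) for bx, by in beacons)

def step(x, y, heading, beacons, speed=0.05, turn=0.2, eye_angle=0.5, eye_reach=0.2):
    """Advance one tick using a single reflex: turn toward the brighter eye."""
    left = brightness(x + eye_reach * math.cos(heading + eye_angle),
                      y + eye_reach * math.sin(heading + eye_angle), beacons)
    right = brightness(x + eye_reach * math.cos(heading - eye_angle),
                       y + eye_reach * math.sin(heading - eye_angle), beacons)
    if left > right:
        heading += turn      # counterclockwise, toward the left eye
    elif right > left:
        heading -= turn      # clockwise, toward the right eye
    return x + speed * math.cos(heading), y + speed * math.sin(heading), heading

def trail(x, y, heading, beacons, steps=400):
    """Return the list of points the vehicle visits, suitable for plotting."""
    points = [(x, y)]
    for _ in range(steps):
        x, y, heading = step(x, y, heading, beacons)
        points.append((x, y))
    return points

path = trail(3.0, 0.0, math.pi / 2, [(0.0, 0.0)])
print(min(math.hypot(px, py) for px, py in path))
```

Passing several beacons to `trail` and plotting the returned points for many vehicles at once is one way to get pictures in the spirit of Figure 82.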

Although the Braitenberg patterns are beautiful, if you watch one particular vehicle for a period of time, you’ll normally see it repeating its behavior. In other words, these vehicles, each governed by a single reflex force, perform class two computations. What can we do to make their behavior more interesting?

I have often taught a course on programming computer games, in which students create their own interactive three-dimensional games.79 We speak of the virtual agents in the game as “critters.” A recurrent issue is how to endow a critter with interesting behavior. Although game designers speak of equipping their critters with artificial intelligence, in practice this can mean something quite simple. In order for a critter to have interesting and intelligent-looking behavior, it can be enough to attach two or, better, three reflex-driven forces to it.

Think back to our example in section 3.3: Surfing Your Moods of a magnet pendulum bob suspended above two or more repelling magnets. Three forces: gravity pulls the bob toward the center, and the two magnets on the ground push it away. The result is a chaotic trail.

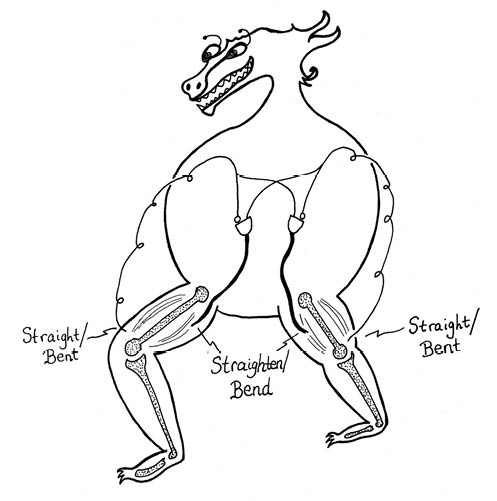

But now suppose you want more than chaos from your critter. Suppose you want it to actually be good at doing something. In this case the simplest reflexes might not be enough. The next step above the reflex is to allow the use of so-called logic gates that take one or more input signals and combine them in various ways. If we allow ourselves to chain together the output of one gate as the input for another gate, we get a logical circuit. It’s possible to create a logical circuit to realize essentially any way of mapping inputs into outputs. We represent a simple “walking” circuit in Figure 83.

Figure 83: Logic Gates for a Walker

The goblet-shaped gates are AND gates, which output a value of True at the bottom if and only if both inputs at the top are True. The little circles separating two of the gate input lines from the gates proper are themselves NOT gates. A NOT gate returns a True if and only if its input is False. If you set the walker down with one leg bent and one leg straight, it will continue alternating the leg positions indefinitely.
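A walker of this kind can be sketched in a few lines of Python. The wiring below is my guess at one circuit that fits the caption (two AND gates, with a NOT on one input of each) and is not necessarily the exact circuit in the figure. Each leg's next state is computed from the current state of both legs.

```python
def and_gate(a, b):
    """True if and only if both inputs are True."""
    return a and b

def not_gate(a):
    """True if and only if the input is False."""
    return not a

def walker_step(left_bent, right_bent):
    """Assumed wiring: a leg bends next tick only if it is now straight
    and the other leg is now bent."""
    next_left = and_gate(not_gate(left_bent), right_bent)
    next_right = and_gate(left_bent, not_gate(right_bent))
    return next_left, next_right

def walk(left_bent, right_bent, steps):
    """Feed the outputs back in as inputs and record the leg positions."""
    history = []
    for _ in range(steps):
        left_bent, right_bent = walker_step(left_bent, right_bent)
        history.append((left_bent, right_bent))
    return history

print(walk(True, False, 4))
```

Started with one leg bent and one straight, the two legs swap roles on every tick. With this particular wiring, a walker set down with both legs bent straightens them and then stands still.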

And of course we can make the circuits more and more complicated, eventually putting in something like an entire PC or more.

But a logic circuit may not be the best way to model a mind.

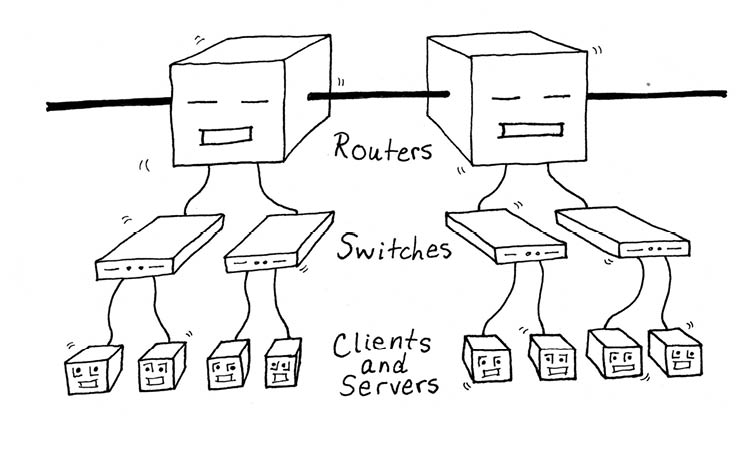

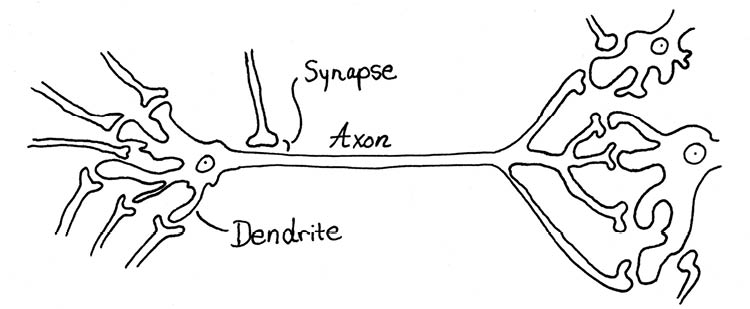

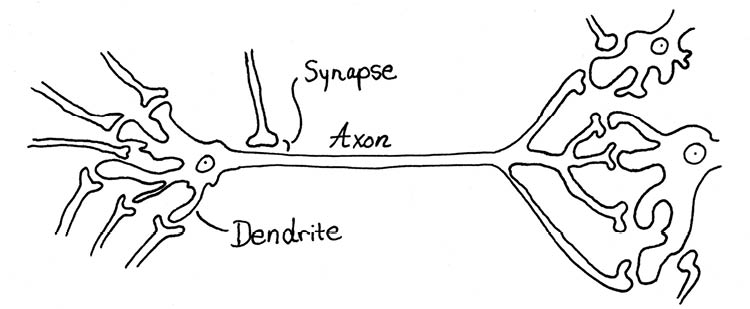

The actual computing elements of our brains are nerve cells, or neurons. A brain neuron has inputs called dendrites and a branching output line called an axon, as illustrated in Figure 84. Note, by the way, that an axon can be up to a meter long. This means that the brain’s computation can be architecturally quite intricate. Nevertheless, later in this chapter we’ll find it useful to look at qualitative CA models of the brain in which the axons are short and the neurons are just connected to their closest spatial neighbors.

Figure 84: A Brain Neuron

There are about a hundred billion neurons in a human brain. A neuron’s number of “input” dendrites ranges from several hundred to the tens of thousands. An output axon branches out to affect a few dozen or even a few hundred other cells.

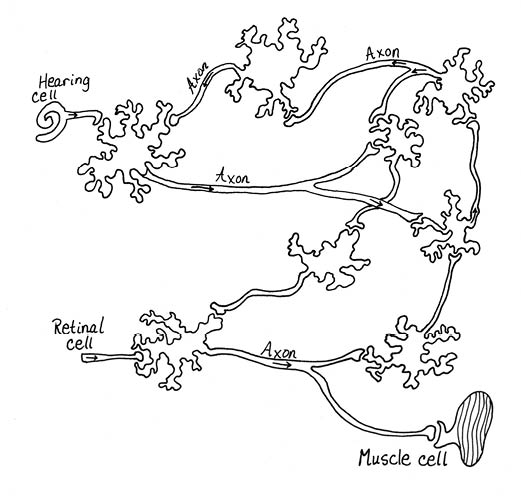

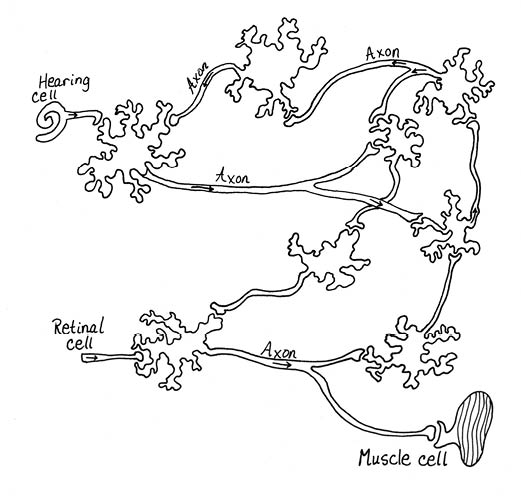

In accordance with the sensor-mind-effector model, the neuron dendrites can get inputs from sensor cells as well as from other neurons, and the output axons can activate muscle cells as well as other neurons, as indicated in Figure 85.

Normally a nerve cell sends an all-or-nothing activation signal out along its axon. Whether a given neuron “fires” at a given time has to do with its activation level, which in turn depends upon the inputs the neuron receives through its dendrites. Typically a neuron has a certain threshold value, and it fires when its activation becomes greater than the threshold level. After a nerve cell has fired, its activation drops to a resting level, and it takes perhaps a hundredth of a second of fresh stimulation before the cell can become activated enough to fire again.

The gap where an axon meets a dendrite is a synapse. When the signal gets to a synapse it activates a transmitter chemical to hop across the gap and stimulate the dendrites of the receptor neuron. The intensity of the stimulation depends on the size of the bulb at the end of the axon and on the distance across the synapse. To make things a bit more complicated, there are in fact two kinds of synapse: excitatory and inhibitory. Stimulating an inhibitory synapse lowers the receptor cell’s activation level.

Most research on the human brain focuses on the neocortex, which is the surface layer of the brain. This layer is about an eighth of an inch thick. If you could flatten out this extremely convoluted surface, you’d get something like two square feet of neurons—one square foot per brain-half.

Figure 85: Brain Neurons Connected to Sensors and Effectors

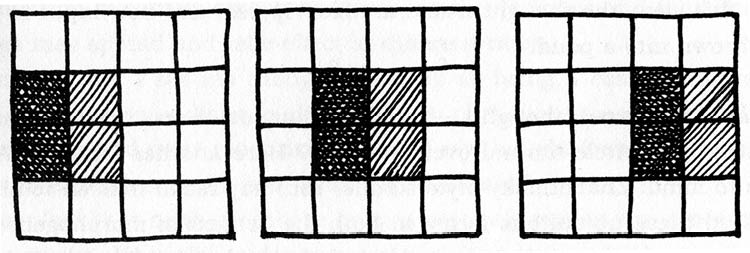

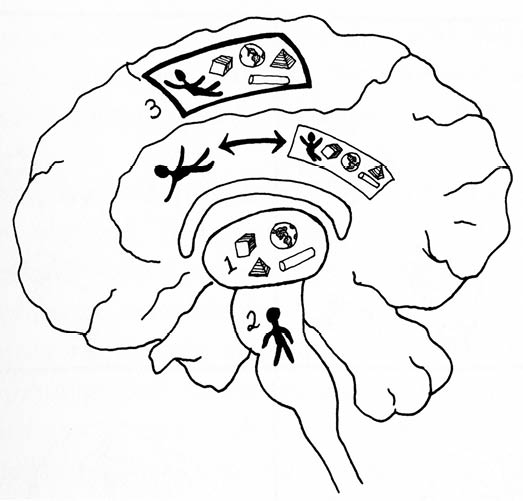

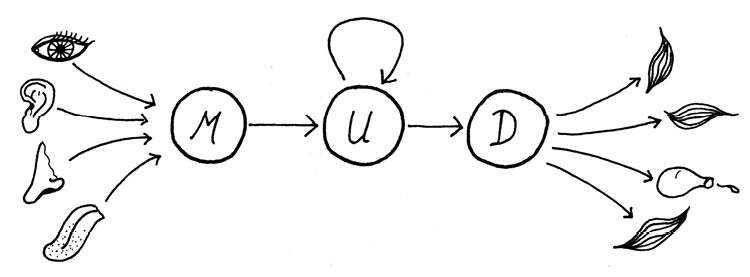

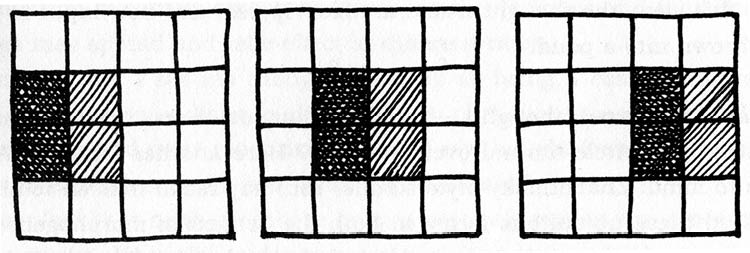

Our big flat neocortical sheets can be regarded as a series of layers. The outermost layer is pretty much covered with a tangle of dendrites and axons—kind of like the zillion etched connector lines you see on the underside of a circuit board. Beneath this superficial layer of circuitry, one can single out three main zones: the upper layers, the middle layers, and the deep layers, as illustrated in Figure 86.

Roughly speaking, sensory inputs arrive at the middle layer, which sends signals to the upper layer. The upper layer has many interconnections among its own neurons, but some of its outputs also trickle down to the deep layer. And the neurons in the deep layer connect to the body’s muscles. This is indicated in Figure 86, but we make the flow even clearer in Figure 87.

As usual in biology, the actual behavior of a living organic brain is funkier and gnarlier and much more complicated than any brief sketch. Instead of there being one kind of brain neuron, there are dozens. Instead of there being one kind of synapse transmission chemical, there are scores of them, all mutually interacting. Some synapses operate electrically rather than chemically, and thus respond much faster. Rather than sending a digital pulse signal, some neurons may instead send a graded continuous-valued signal. In addition, the sensor inputs don’t really go directly to the neocortex; they go instead to an intermediate brain region called the thalamus. And our throughput diagram is further complicated by the fact that the upper layers feed some outputs back into the middle layers and the deep layers feed some outputs back into the thalamus. Furthermore, there are any number of additional interactions among other brain regions; in particular, the so-called basal ganglia have input-output loops involving the deep layers. But for our present purposes the diagrams I’ve given are enough.

Figure 86: The Neocortex, Divided into Upper, Middle and Deep Layers

A peculiarity of the brain-body architecture is that the left half of the brain handles input and output for the right side of the body, and vice versa.

When trying to create brainlike systems in their electronic machines, computer scientists often work with a switching element also called a neuron—which is of course intended to model a biological neuron. These computer neurons are hooked together into circuits known as neural nets or neural networks.

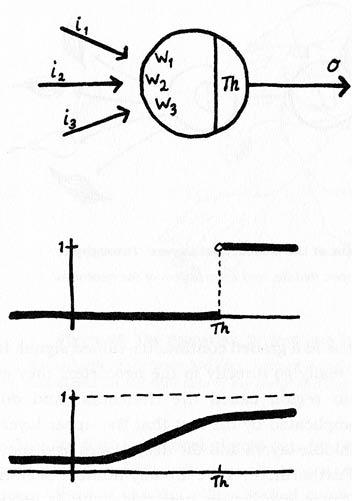

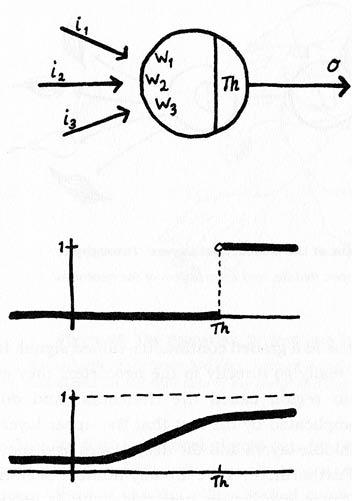

As drawn in Figure 88, we think of the computer neuron as having some number of input lines with a particular weight attached to each input. The weights are continuous real numbers ranging from negative one to positive one. We think of negatively weighted inputs as inhibiting our artificial neurons and positive values as activating them. At any time, the neuron has an activation level that’s obtained by summing up the input values times the weights. In addition, each neuron has a characteristic threshold value Th. The neuron’s output depends on how large its activation level is relative to its threshold. This is analogous to the fact that a brain neuron fires an output along its axon whenever its activation is higher than a certain value.

Figure 87: A Simplified Diagram of the Neocortical Layers’ Throughput

U, M, and D are, respectively, the upper, middle, and deep layers of the neocortex.

Computer scientists have actually worked with two different kinds of computer neuron models. In the simple version, the neuron outputs are only allowed to take on the discrete values zero and one. In the more complex version, the neuron outputs can take on any real value between zero and one. Our figure indicates how we might compute the output values from the weighted sum of the inputs in each case.

Most computer applications of neural nets use neurons with continuous-valued outputs. On the one hand this model is more removed from the generally discrete-valued-output behavior of biological neurons—given that a typical brain neuron either fires or doesn’t fire, its output is more like a single-bit zero or one. On the other hand, our computerized neural nets use so many fewer neurons than does the brain that it makes sense to get more information out of each neuron by using the continuous-valued outputs. In practice, it’s easier to tailor a smallish network of real-valued-output neurons to perform a given task.80

One feature of biological neurons that we’ll ignore for now is the fact that biological neurons get tired. That is, there is a short time immediately after firing when a biological neuron won’t fire again, no matter how great the incoming stimulation. We’ll come back to this property of biological neurons in the following section 4.3: Thoughts as Gliders and Scrolls.

In analogy to the brain, our artificial neural nets are often arranged in layers. Sensor inputs feed into a first layer of neurons, which can in turn feed into more layers of neurons, eventually feeding out to effectors. The layers in between the sensors and the final effector-linked layers are sometimes called hidden layers. In the oversimplified model of the neocortex that I drew in Figures 86 and 87, the so-called upper layer would serve as the hidden layer.

What makes neural nets especially useful is that it’s possible to systematically tweak the component neurons’ weights in order to make the system learn certain kinds of task.

Figure 88: Computer Neuron Models

A neuron with a threshold value computes the weighted sum of its inputs and uses this sum to calculate its output. In this figure, the threshold is Th, and the activation level Ac is the weighted sum of the inputs, w1•i1 + w2•i2 + w3•i3. Below the picture of the neuron we graph two possible neuron response patterns, with the horizontal axes representing the activation level Ac and the vertical axes representing the output value o. In the upper “discrete” response pattern, the output is one if Ac is greater than Th, and zero otherwise. In the lower “continuous” response pattern, the output is graded from zero to one, depending on where Ac stands relative to Th.
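The two response patterns can be written out as a short Python sketch. The discrete rule follows the caption directly. For the continuous rule I'm assuming the familiar logistic S-curve centered on the threshold, with a made-up steepness parameter; the figure itself doesn't commit to a particular formula.

```python
import math

def activation(inputs, weights):
    """Ac: the weighted sum w1*i1 + w2*i2 + ... of the neuron's inputs."""
    return sum(w * i for w, i in zip(weights, inputs))

def discrete_output(ac, th):
    """All-or-nothing response: one if Ac exceeds Th, zero otherwise."""
    return 1 if ac > th else 0

def continuous_output(ac, th, steepness=4.0):
    """Graded response between zero and one (assumed logistic curve).
    The output is exactly 0.5 when Ac equals Th."""
    return 1.0 / (1.0 + math.exp(-steepness * (ac - th)))

inputs = [1.0, 0.0, 1.0]
weights = [0.5, -0.3, 0.25]   # the negative weight marks an inhibiting input line
ac = activation(inputs, weights)
print(ac, discrete_output(ac, 0.5), continuous_output(ac, 0.5))
```

Raising the steepness makes the continuous curve look more and more like the discrete step.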

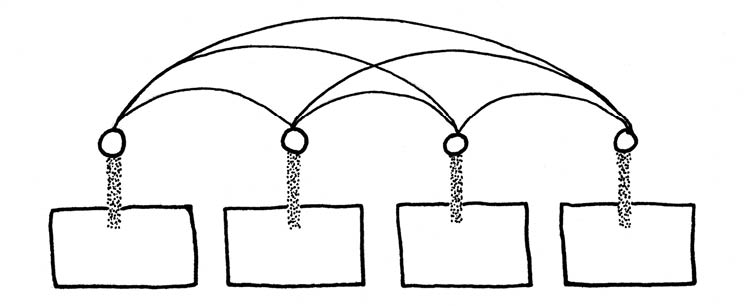

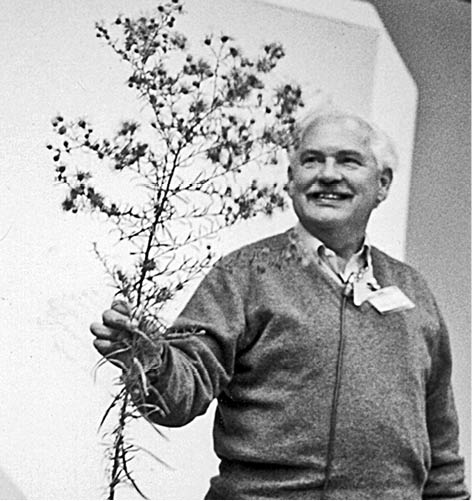

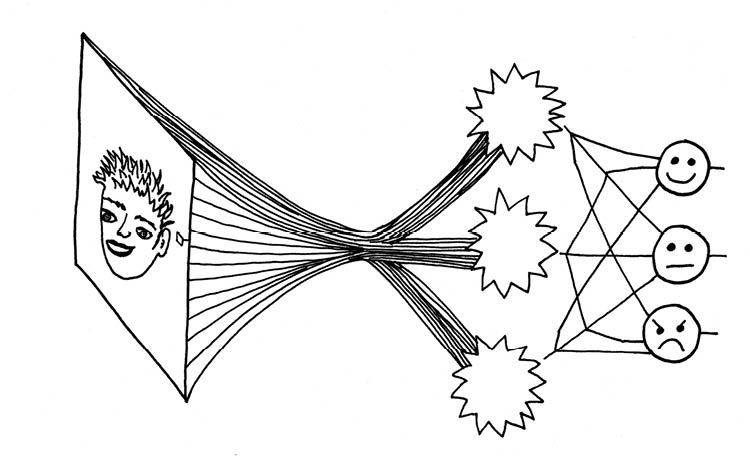

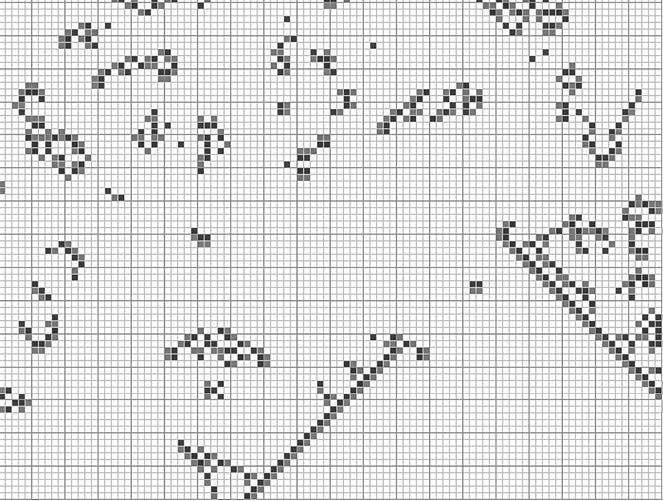

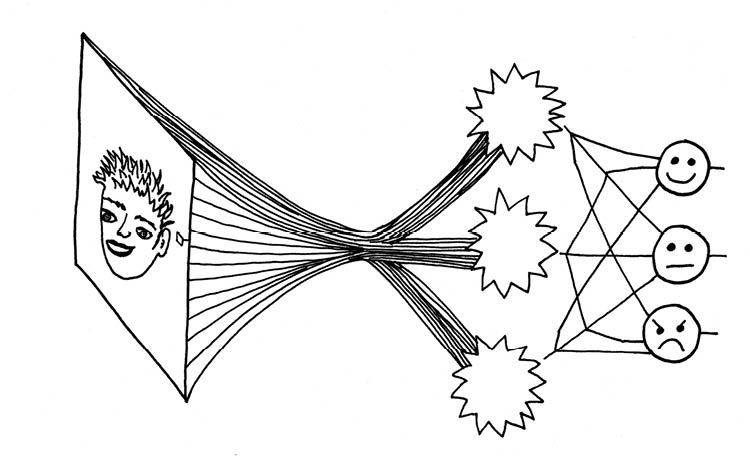

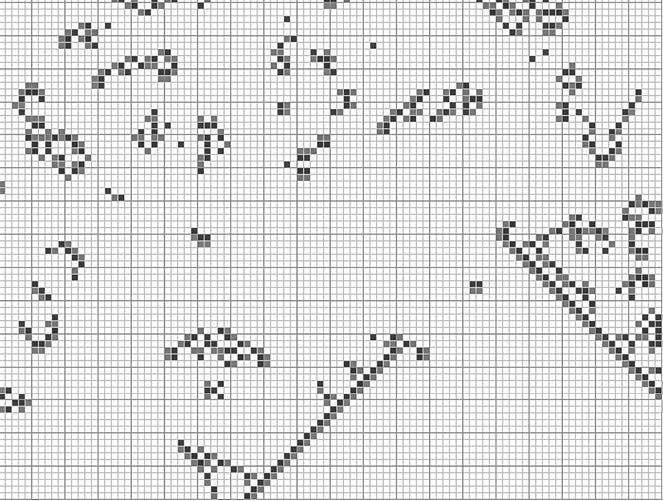

In order to get an idea of how neural nets work, consider a face-recognition problem that I often have my students work on when I teach a course on artificial intelligence. In this experiment, which was devised by the computer scientist Tom Mitchell of Carnegie Mellon University, we expose a neural network to several hundred small monochrome pictures of people’s faces.81 To simplify things, the images are presented as easily readable computer files that list a grayscale value for each pixel. The goal of the experiment, as illustrated in Figure 89, is for the network to recognize which faces are smiling, which are frowning, and which are neutral.

As it turns out, there’s a systematic method for adjusting the weights and thresholds of the neurons. The idea is that you train the network on a series of sample inputs for which the desired target output is known. Suppose you show it, say, a test face that is known to be smiling. And suppose that the outputs incorrectly claim that the face is frowning or neutral. What you then do is to compute the error term of the outputs. How do I define the error? We’ll suppose that if a face is frowning, I want an output of 0.9 from the “frown” effector, and outputs of 0.1 from the other two, with analogous sets of outputs for the neutral and smiling cases. (Due to the S-shaped nature of the continuous-valued-output neurons’ response curve, it’s not practical to expect them to actually produce exact 0.0 or 1.0 values.)

Given the error value of the network on a given face, you can work your way backward through the system, using a so-called back-propagation algorithm to determine how best to alter the weights and thresholds on the neurons in order to reduce the error. Suppose, for instance, the face was frowning, but the smile output was large. You look at the inputs and weights in the smile neuron to see how its output got so big. Perhaps one of the smile neuron inputs has a high value, and suppose that the smile neuron also has a high positive weight on that input. You take two steps to correct things. First of all, you lower the smile neuron’s weight for that large-valued input line, and second, you go back to the hidden neuron that delivered that large value to the smile neuron and analyze how that hidden neuron happened to output such a large value. You go over its input lines and reduce the weights on the larger inputs. In order for the process of back propagation to work, you do it somewhat gently, not pushing the weights or thresholds too far at any one cycle. And you iterate the cycle many times.

So you might teach your neural net to recognize smiles and frowns as follows. Initialize your network with some arbitrary small weights and thresholds, and train it with repeated runs over a set of a hundred faces you’ve already determined to be smiling, frowning, or neither. After each face your back-propagation method computes the error in the output neurons and readjusts the network weights that are in some sense responsible for the error. You keep repeating the process until you get to a run where the errors are what you consider to be sufficiently small—for the example given, about eighty runs might be enough. At this point you’ve finished training your neural net. You can save the three thousand or so trained weights and thresholds into a file.
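The training loop just described can be sketched in plain Python. This is a toy under loud assumptions, not Mitchell's code: the "faces" are six-pixel made-up patterns rather than 960-pixel photographs, the neurons use a logistic response curve, each threshold is folded in as an extra bias weight, and the learning rate of 0.5 is arbitrary. What it keeps from the text is the recipe: small initial weights, 0.9 and 0.1 targets, an error computed at the outputs and pushed backward, gentle adjustments, and repeated runs over the training set.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

class TinyNet:
    """Pixels -> hidden neurons -> output neurons, all with S-shaped responses."""

    def __init__(self, n_in, n_hidden, n_out, seed=0):
        rng = random.Random(seed)
        small = lambda: rng.uniform(-0.1, 0.1)
        # One row per neuron: its input weights, plus a final bias weight
        # that stands in for the (negated) threshold.
        self.hidden = [[small() for _ in range(n_in + 1)] for _ in range(n_hidden)]
        self.output = [[small() for _ in range(n_hidden + 1)] for _ in range(n_out)]

    def forward(self, pixels):
        h = [sigmoid(sum(w * v for w, v in zip(row, pixels + [1.0]))) for row in self.hidden]
        o = [sigmoid(sum(w * v for w, v in zip(row, h + [1.0]))) for row in self.output]
        return h, o

    def train_one(self, pixels, target, rate=0.5):
        """One back-propagation step; returns the squared error before the update."""
        h, o = self.forward(pixels)
        # Blame assigned to each output neuron.
        d_out = [(o[k] - target[k]) * o[k] * (1.0 - o[k]) for k in range(len(o))]
        # Blame passed back to each hidden neuron through the output weights.
        d_hid = [h[j] * (1.0 - h[j]) * sum(d_out[k] * self.output[k][j] for k in range(len(o)))
                 for j in range(len(h))]
        # Nudge every weight a little in the direction that lowers the error.
        for k, row in enumerate(self.output):
            for j, v in enumerate(h + [1.0]):
                row[j] -= rate * d_out[k] * v
        for j, row in enumerate(self.hidden):
            for i, v in enumerate(pixels + [1.0]):
                row[i] -= rate * d_hid[j] * v
        return 0.5 * sum((o[k] - target[k]) ** 2 for k in range(len(o)))

def make_toy_faces():
    """Six invented 'faces': the expression is given by which pixel pair is lit."""
    samples = []
    for label in range(3):
        for level in (0.8, 1.0):
            pixels = [0.0] * 6
            pixels[2 * label] = pixels[2 * label + 1] = level
            samples.append((pixels, [0.9 if k == label else 0.1 for k in range(3)]))
    return samples

def train(net, samples, runs=80):
    """Repeat runs over the training set, recording the total error of each run."""
    return [sum(net.train_one(p, t) for p, t in samples) for _ in range(runs)]

errors = train(TinyNet(6, 3, 3), make_toy_faces())
print(errors[0], errors[-1])
```

Watching the per-run error shrink is the usual way to decide when to stop. How many runs a real problem needs depends on the data and the learning rate, so the eighty used here simply echoes the figure mentioned above.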

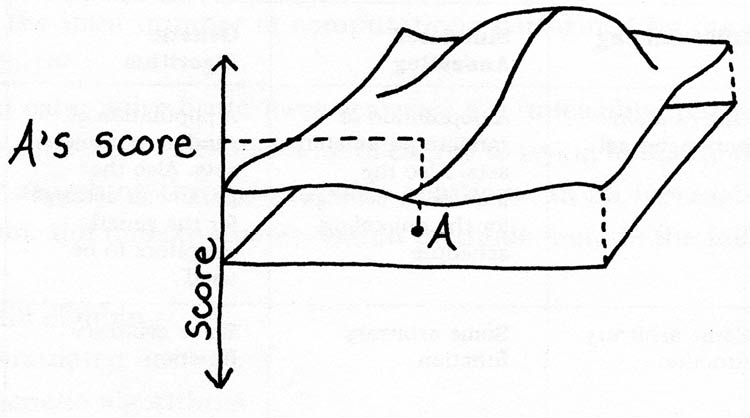

You may be reminded of the discussion in section 3.6: How We Got So Smart of search methods for finding peaks in a fitness landscape. The situation here is indeed similar. The “face weight” landscape example ranges over all the possible sets of neuron weights and thresholds. For each set of three thousand or so parameters, the fitness value measures how close the network comes to being 100 percent right in discerning the expressions of the test faces. Obviously it’s not feasible to carry out an exhaustive search of this enormous parameter space, so we turn to a search method, in this case a method called back-propagation. As it turns out, back-propagation is in fact a special hill-climbing method that, for any given set of parameters, finds the best nearby set of parameters in the neural net’s fitness landscape.

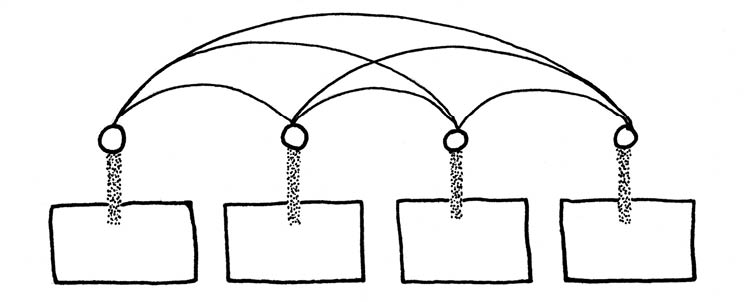

Figure 89: A Neural Net to Recognize Smiles and Frowns

We’ll use continuous-valued-output neurons, so at the right-hand side our net returns a real-number “likelihood” value for each of the three possible facial expressions being distinguished. The inputs on the left consist of rasterized images of faces, 30 x 32 pixels in size, with each of the 960 pixels containing a grayscale value ranging between zero and one. In the middle we have a “hidden layer” of three neurons. Each neuron takes input from each of the 960 pixels and has a single output line. On the right we have three effector neurons (drawn as three faces), each of which takes as input the outputs of the three hidden-layer neurons. For a given input picture, the network’s “answer” regarding that picture’s expression corresponds to the effector neuron that returns the greatest value. The total number of weights in this system is 3•960 + 3•3, or 2,889, and if we add in the threshold values for our six neurons, we get 2,895. (By the way, there’s no particular reason why I have three hidden neurons. The network might do all right with two or four hidden neurons instead.)
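The parameter count is easy to check with a few lines of Python. The function name and signature are my own; the arithmetic is just the caption's, with one weight per input line and one threshold per neuron.

```python
def count_parameters(n_inputs, n_hidden, n_outputs):
    """Return (number of weights, number of weights plus thresholds)
    for a net with a single fully connected hidden layer."""
    weights = n_hidden * n_inputs + n_outputs * n_hidden
    thresholds = n_hidden + n_outputs
    return weights, weights + thresholds

pixels = 30 * 32
print(count_parameters(pixels, 3, 3))   # three hidden neurons, as in the figure
print(count_parameters(pixels, 2, 3))   # the two-hidden-neuron variant
print(count_parameters(pixels, 4, 3))   # the four-hidden-neuron variant
```

Nearly all of the parameters sit on the lines running from the pixels into the hidden layer, so adding or removing a hidden neuron changes the total by roughly a thousand.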

Now comes the question of how well your net’s training can generalize. Of course it will score fine on those hundred faces that it looked at eighty times each—but what happens when you give it new, previously unseen pictures of faces? If the faces aren’t radically different from the kinds of faces the net was trained upon, the net is likely to do quite well. The network has learned something, and the knowledge takes the form of some three thousand real numbers.

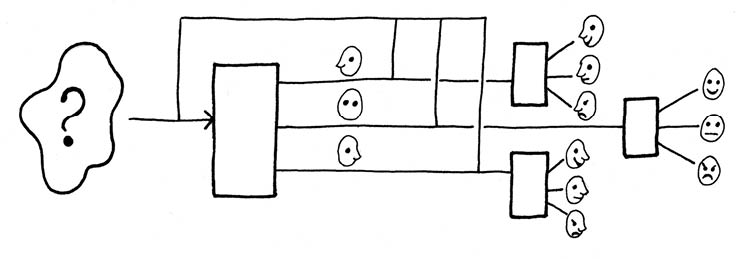

Figure 90: Generalized Face Recognizer

The first box recognizes the face’s position. The position information is fed with the face information to the three specialized expression recognizers, and the appropriate one responds.

But if, for instance, all the training faces were seen full-on, the network isn’t going to do well on profile faces. Or if all the training faces were of people without glasses, the network probably won’t be good at recognizing the facial expressions of glasses-wearers.

What to do? One approach is to build up deeper layers of neural networks. We might, for instance, use a preliminary network to decide which way a face is looking, sorting them into left-profile, full, and right-profile faces. And then we could train a separate expression-recognizing network for each of the three kinds of face positions. With a little cleverness with the wiring, the whole thing could be boxed up as a single multilayer neural net, as suggested by Figure 90.

Note here that it can actually be quite a job to figure out how best to break down a problem into neural-net-solvable chunks, and to then wire the solutions together. Abstractly speaking, one could simply throw a very general neural net at any problem—for instance, you might give the arbitrarily-positioned-face-recognition problem a neural net with perhaps two hidden layers of neurons, also allowing input lines to run from all the pixels into the neurons of the second hidden layer, and hope that with enough training and back-propagation the network will eventually converge on a solution that works as well as using the first layer to decide on the facial orientation and using the second layer’s neurons to classify the expression of each facial orientation. Training a net without a preconceived design is feasible, but it’s likelier to take longer than using some preliminary analysis and assembling it as described above.

Custom-designed neural nets are widely used—the U.S. Post Office, for instance, uses a neural net program to recognize handwritten ZIP codes. But the designers did have to put in quite a bit of thought about the neural net architecture—that is, how many hidden layers to use, and how many neurons to put in each of the hidden layers.

In the 2010s, software designers began using more than one hidden layer in their neural nets, as well as tailoring the connections for the targeted application. They’ve also begun using nonlinear response curves, that is, the effect of an input value might vary, say, with the square of the value, instead of with a simple multiple of the value. This arsenal of new techniques falls under the rubric of “deep learning.” Impressive strides forward are being made.

Computer scientists like to imagine building programs or robots that can grow up and learn and figure things out without any kind of guiding input. In so-called unsupervised learning, there’s no answer sheet to consult. If, for instance, you learn to play Ping-Pong simply by playing games against an opponent, your feedback will consist of noticing which of your shots go bad and which points you lose. Nobody is telling you things like, “You should have tilted the paddle a little to the right and aimed more toward the other side’s left corner.” And, to make the situation even trickier, it may be quite some time until you encounter a given situation again. Tuning your neural net with unsupervised learning is a much harder search problem, and specifying the search strategy is an important part of the learning program design—typical approaches might include hill-climbing and genetic programming.
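As a rough illustration of hill-climbing, here is a small Python sketch. It assumes we can score any set of weights with a single fitness number, and nothing more. The `toy_fitness` function and its `TARGET` values are hypothetical stand-ins for something like “how many points did I win”; a real agent would have to play out a whole scenario to get each score.

```python
import random

def hill_climb(fitness, weights, steps=2000, step_size=0.1, seed=0):
    # Nudge the weights at random and keep a nudge only if the score improves.
    rng = random.Random(seed)
    best = list(weights)
    best_score = fitness(best)
    for _ in range(steps):
        candidate = [w + rng.gauss(0.0, step_size) for w in best]
        score = fitness(candidate)
        if score > best_score:
            best, best_score = candidate, score
    return best, best_score

# Hypothetical fitness: higher is better, with a peak at TARGET.
TARGET = [0.5, -1.0, 2.0]

def toy_fitness(weights):
    return -sum((w - t) ** 2 for w, t in zip(weights, TARGET))

start = [0.0, 0.0, 0.0]
tuned, score = hill_climb(toy_fitness, start)
print(tuned, score)
```

Notice that the search never learns why a nudge helped. It only sees the score, which is why this kind of tuning can take so many trials.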

There’s also a metasearch issue of trying out various neural net architectures and seeing which works best. But as your problem domains get more sophisticated, the fitness evaluations get more time-consuming, particularly in the unsupervised learning environments where you essentially have to play out a whole scenario in order to see how well your agent will do. The search times for effective learning can be prohibitively long.

It’s worth noting here that a human or an animal is born with nearly all of the brain’s neural net architecture already in place. It’s not as if each of us has to individually figure out how to divide the brain’s hundred billion neurons into layers, how to parcel these layers into intercommunicating columns, and how to connect the layers to the thalamus, the sense organs, the spine, the basal ganglia, etc. The exceedingly time-consuming search over the space of possible neural architectures is something that’s happened over millions of years of evolution—and we’re fortunate enough to inherit the results.

The somewhat surprising thing is how often a neural net can solve what had seemed to be a difficult AI problem. Workers in the artificial-intelligence field sometimes say, “Neural nets are the second-best solution to any problem.”82

Given a reasonable neural net architecture and a nice big set of training examples, you can teach a neural net to solve just about any kind of problem that involves recognizing standard kinds of situations. And if you have quite a large amount of time, you can even train neural nets to tackle less clearly specified problems, such as, for instance, teaching a robot how to walk on two legs. As with any automated search procedure, the neural net solutions emerge without a great deal of formal analysis or deep thought.

The seemingly “second-best” quality of the solution has to do with the feeling that a deep learning neural net solution is somewhat clunky, ad hoc, and brute-force. It’s not as if the designer has come up with an elegant, simple algorithm based upon a fundamental understanding of the problem in question. The great mound of network weights has an incomprehensible feel to it.

It could be that it’s time to abandon our scientific prejudices against complicated solutions. In the heroic nineteenth and twentieth centuries of science, the best solution of a problem often involved a dramatic act of fundamental understanding—one has only to think of the kinds of formulas that traditionally adorn the T-shirts of physics grad students: Maxwell’s equations for electromagnetism, Einstein’s laws for relativity, Schrödinger’s wave equation for quantum mechanics. In each case, we’re talking about a concise set of axioms from which one can, in a reasonable amount of time, logically derive the answers to interesting toy problems.

But the simple equations of physics don’t provide feasible solutions to many real-world problems—the laws of physics, for instance, don’t tell us when the big earthquake will hit San Jose, California, and it wouldn’t even help to know the exact location of all the rocks underground. Physical systems are computationally unpredictable. The laws provide, at best, a recipe for how the world might be computed in parallel particle by particle and region by region. But—unless you have access to some so-far-unimaginable kind of computing device that simulates reality faster than the world does itself—the only way to actually learn the results is to wait for the actual physical process to work itself out. There is a fundamental gap between T-shirt-physics-equations and the unpredictable and PC-unfeasible gnarl of daily life.

One of the curious things about neural nets is that our messy heap of weights arises from a rather simple deterministic procedure. Just for the record, let’s summarize the factors involved.

• The network architecture, that is, how many neurons we use, and how they’re connected to the sensor inputs, the effector outputs, and to one another.

• The specific implementation of the back-propagation algorithm to be used—there are numerous variants of this algorithm.

• The process used to set the arbitrary initial weights—typically we use a pseudorandomizer to spit out some diverse values, perhaps a simple little program like a CA. It’s worth noting that if we want to repeat our experiment, we can set the pseudorandomizer to the same initial state, obtaining the exact same initial weights and thence the same training process and the same eventual trained weights.

• The training samples. In the case of the expression-recognition program, this would again be a set of computer files of face images along with a specification as to which expression that face is considered to have.
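The point about repeatability is easy to check in code. The Python sketch below is not a full back-propagation net. As a loudly labeled simplification, it trains a single sigmoid neuron by gradient descent on a made-up four-sample task, with the function name `train` and the learning rate chosen for illustration. The four factors are still all there in miniature: an architecture, a training rule, a seeded pseudorandomizer, and a sample set.

```python
import math
import random

def train(seed, samples, epochs=50, rate=0.5):
    # Seeding the pseudorandomizer fixes the arbitrary initial weights.
    rng = random.Random(seed)
    n_inputs = len(samples[0][0])
    weights = [rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
    bias = rng.uniform(-1.0, 1.0)
    for _ in range(epochs):
        for inputs, target in samples:
            total = sum(w * x for w, x in zip(weights, inputs)) + bias
            out = 1.0 / (1.0 + math.exp(-total))
            # Gradient step for one sigmoid neuron (a stand-in for back-propagation).
            delta = (target - out) * out * (1.0 - out)
            weights = [w + rate * delta * x for w, x in zip(weights, inputs)]
            bias += rate * delta
    return weights, bias

# Made-up training samples: the neuron should learn logical OR.
samples = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

first = train(42, samples)
second = train(42, samples)
other = train(43, samples)
print(first == second)   # same seed, same samples: identical trained weights
print(first == other)    # a different seed gives a different heap of numbers
```

Nothing in the procedure is random in any deep sense. The messy-looking final weights are fully determined by the seed and the samples.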

In some sense the weights are summarizing the information about the sample examples in a compressed form—and compressed forms of information are often random-looking and incomprehensible. Of course it might be that the neural net’s weights would be messy even if the inputs were quite simple. As we’ve seen several times before, it’s not unusual for simple systems to generate messy-looking patterns all on their own—remember Wolfram’s pseudorandomizing cellular automaton Rule 30 and his glider-producing universally computing Rule 110.
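For readers who want to see a simple rule make a mess on its own, here is a short Python sketch of a one-dimensional CA in Wolfram’s numbering scheme, where the rule number’s binary digits give the new cell value for each of the eight possible neighborhoods. The wraparound edges and the function names `step` and `run` are my assumptions for illustration.

```python
def step(cells, rule):
    # Each cell looks at (left, self, right), reads that as a number 0..7,
    # and picks out the matching bit of the rule number.
    n = len(cells)
    return [
        (rule >> (cells[(i - 1) % n] * 4 + cells[i] * 2 + cells[(i + 1) % n])) & 1
        for i in range(n)
    ]

def run(rule, width=31, steps=15):
    # Start from a single live cell in the middle of the row.
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = step(row, rule)
        rows.append(row)
    return rows

for row in run(30):
    print("".join("#" if c else "." for c in row))
```

Calling `run(110)` instead shows the other rule mentioned above. The whole program is a few lines, yet the Rule 30 triangle already looks disorderly after a dozen rows.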

We have every reason to suppose that, at least in some respects, the human brain functions similarly to a computer-modeled neural network. Although, as I mentioned earlier, much of the brain’s network comes preinstalled, we do learn things—the faces of those around us, the words of our native language, skills like touch-typing or bicycle-riding, and so on.

First of all, we might ask how a living brain goes about tweaking the weights of the synaptic connections between axons and dendrites. One possibility is that the changes are physical. A desirable synapse might be enhanced by having the axon’s terminal bulb grow a bit larger or move a bit closer to the receptor dendrite, and an undesirable synapse’s axon bulb might shrivel or move away. The virtue of physical changes is that they stay in place. But it’s also possible that the synapse tweaking is something subtler and more biochemical.

Second, we can ask how our brains go about deciding in which directions the synapse weights should be tweaked.

Do we use back-propagation, like a neural net? This isn’t so clear.

A very simple notion of human learning is called Hebbian learning, after Canadian neuropsychologist Donald O. Hebb, who published an influential book called The Organization of Behavior in 1949. This theory basically says that the more often a given synapse fires, the stronger its weight becomes. If you do something the right way over and over, that behavior gets “grooved in.” Practice makes perfect. It may be that when we mentally replay certain kinds of conversations, we’re trying to simulate doing something right.
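A bare-bones version of the Hebbian idea can be written in a few lines of Python. This is only a sketch under my own assumptions: activity levels are zero or one, the learning rate is 0.1, and each weight grows by the rate times the product of the sending and receiving activity. Hebb’s book gives no such formula in code, so the function `hebbian_update` is purely illustrative.

```python
def hebbian_update(weights, pre, post, rate=0.1):
    # A synapse strengthens when its input fires while the neuron also fires.
    return [w + rate * x * post for w, x in zip(weights, pre)]

weights = [0.0, 0.0, 0.0]
pattern = [1, 0, 1]   # inputs 0 and 2 fire together, input 1 stays silent

for _ in range(10):
    post = 1          # assume the receiving neuron fires on each repetition
    weights = hebbian_update(weights, pattern, post)

print(weights)        # the two used synapses have grown, the silent one hasn't
```

Note that there’s no error signal anywhere in the rule. Repetition alone does the grooving in, for good habits and bad ones alike.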

This said, it may be that we do some back-propagation as well. In an unsupervised learning situation, such as when you are learning to play tennis, you note an error when, say, you hit the ball into the net. But you may not realize the error is precisely because you rotated your wrist too far forward as you hit. Rather than being able to instantly back-propagate the information to your wrist-controlling reflexes, you make some kind of guess about what you did wrong and back-propagate that.

More complicated skills—like how to get along with the people in your life—can take even longer to learn. For one thing, in social situations the feedback is rather slow. Do you back-propagate the error term only the next day when you find out that you goofed, at that time making your best guess as to which of your behaviors produced the bad results?

As well as using Hebbian learning or back-propagation, we continually carry out experiments, adjusting our neural weights on the fly. To an outsider, this kind of activity looks like play. When puppies romp and nip, they’re learning. If you go off and hit a ball against a wall a few hundred times in a row, you’re exploring which kind of stroke gives the best results. What children learn in school isn’t so much the stuff the teachers say as it is the results of acting various different ways around other people.

In terms of Wolfram’s fourfold classification, what kinds of overall computation take place as you adjust the weights of your brain’s neural networks?

Clear-cut tasks like learning the alphabet are class one computations. You repeat a deterministic process until it converges on a fixed point.

But in many cases the learning is never done. Particularly in social situations, new problems continue to arise. Your existing network weights need to be retuned over and over. Your network-tuning computation is, if you will, a lifelong education. The educational process will have aspects of class two, three, and four.

Your life education is class two, that is, periodic, to the extent that you lead a somewhat sheltered existence, perhaps by getting all your information from newspapers or, even more predictably, from television. In this kind of life, you’re continually encountering the same kinds of problems and solving them in the same kinds of ways.

If, on the other hand, you seek out a wider, more arbitrary range of different inputs, then your ongoing education is more of a class three process.

And, to the extent that you guide yourself along systematic yet gnarly paths, you’re carrying out a class four exploration of knowledge. Note that in this last case, it may well be that you’re unable to consciously formulate the criteria by which you guide yourself—indeed, if your search results from an unpredictable class four computation, this is precisely the case.

The engaged intellectual life is a never-ending journey into the unknown. Although the rules of our neuronal structures are limited in number, the long-term consequences of the rules need not be boring or predictable.


4.3: Thoughts as Gliders and Scrolls

So far we’ve only talked about situations in which a brain tries to adjust itself so as to deal with some external situation. But the life of the mind is much more dynamic. Even without external input, the mind’s evolving computations are intricate and unpredictable.

Your brain doesn’t go dark if you close your eyes and lie down in a quiet spot. The thoughts you have while driving your car don’t have much to do with the sensor-effector exigencies of finding your way and avoiding the other vehicles.

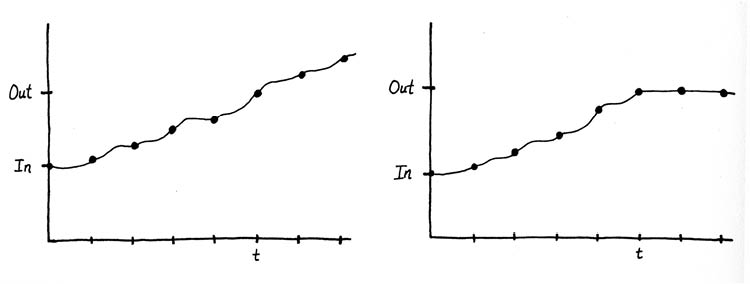

A little introspection reveals that we have several different styles of thought. In particular, I’d like to distinguish between two modes that I’ll call trains of thought and thought loops.

By trains of thought, I mean the free-flowing and somewhat unpredictable chains of association that the mind produces when left on its own. Note that trains of thought need not be formulated in words. When I watch, for instance, a tree branch bobbing in the breeze, my mind plays with the positions of the leaves, following them and automatically making little predictions about their motions. And then the image of the branch might be replaced by a mental image of a tiny man tossed up high into the air. His parachute pops open and he floats down toward a city of lights. I recall the first time I flew into San Jose and how it reminded me of a great circuit board. I remind myself that I need to see about getting a new computer soon, and then in reaction I think about going for a bicycle ride. The image of the bicyclist on the back of the Rider brand of playing cards comes to mind, along with a thought of how Thomas Pynchon refers to this image in Gravity’s Rainbow. I recall the heft of Pynchon’s book, and then think of the weights that I used to lift in Louisville as a hopeful boy forty-five years ago, the feel and smell of the rusty oily weight bar still fresh in my mind.

By thought loops, I mean something quite different. A thought loop is some particular sequence of images and emotions that you repeatedly cycle through. Least pleasant—and all too common—are thought loops having to do with emotional upsets. If you have a disagreement with a colleague or a loved one, you may find yourself repeating the details of the argument, summarizing the pros and cons of your position, imagining various follow-up actions, and then circling right back to the detailed replay of the argument. Someone deep in the throes of an argument-provoked thought loop may even move their lips and make little gestures to accompany the remembered words and the words they wish they’d said.

But many thought loops are good. You often use a thought loop to solve a problem or figure out a course of action, that is, you go over and over a certain sequence of thoughts, changing the loop a bit each time. And eventually you arrive at a pattern you like. Each section of this book, for instance, is the result of some thought loops that I carried around within myself for weeks, months, or even years before writing them down.

Understanding a new concept also involves a thought loop. You formulate the ideas as some kind of pattern within your neurons, and then you repeatedly activate the pattern until it takes on a familiar, grooved-in feel.

Viewing a thought loop as a circulating pattern of neuronal activation suggests something about how the brain might lay down long-term memories. Certainly a long-term memory doesn’t consist of a circulating thought loop that never stops. Long-term memory surely involves something more static. A natural idea would be that if you think about something for a little while, the circulating thought-loop stimulation causes physical alterations in the synapses that can persist even after the thought is gone. This harks back to the Hebbian learning I mentioned in section 4.2: The Network Within, whereby the actual geometry of the axons and dendrites might change as a result of being repeatedly stimulated.

Thus we can get long-term memory from thought loops by supposing that a thought loop reinforces the neural pathway that it’s passing around. And when a relevant stimulus occurs at some later time, the grooved-in pathway is activated again.

Returning to the notion of free-running trains of thought, you might want to take a minute here and look into your mind. Watching trains of thought is entertaining, a pleasant way of doing nothing. But it’s not easy.

I find that what usually breaks off my enjoyment of my thought trains is that some particular thought will activate one of my thought loops to such an extent that I put all my attention into the loop. Or it may be that I encounter some new thought that I like so much that I want to savor it, and I create a fresh thought loop of repeating that particular thought to myself over and over. Here again my awareness of the passing thought trains is lost.

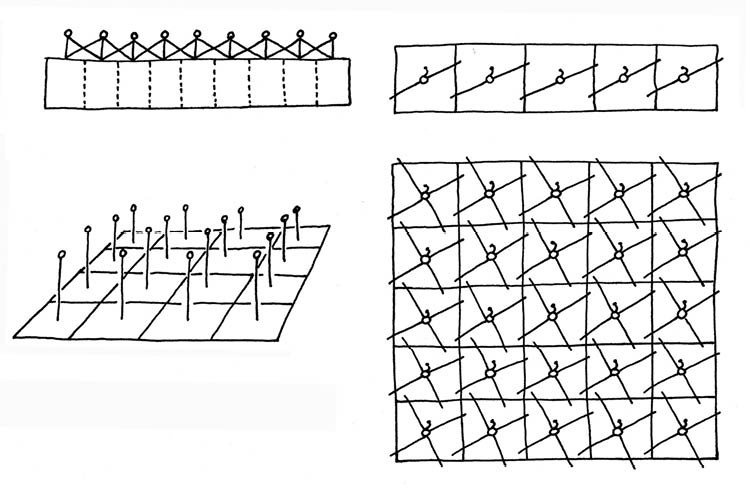

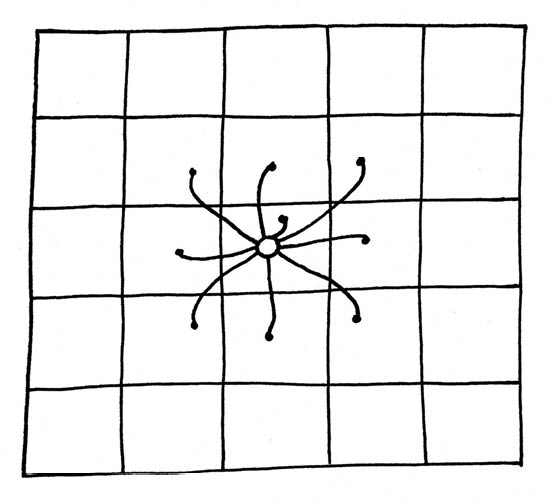

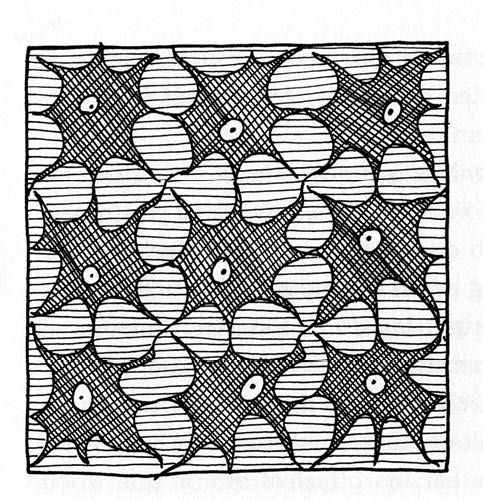

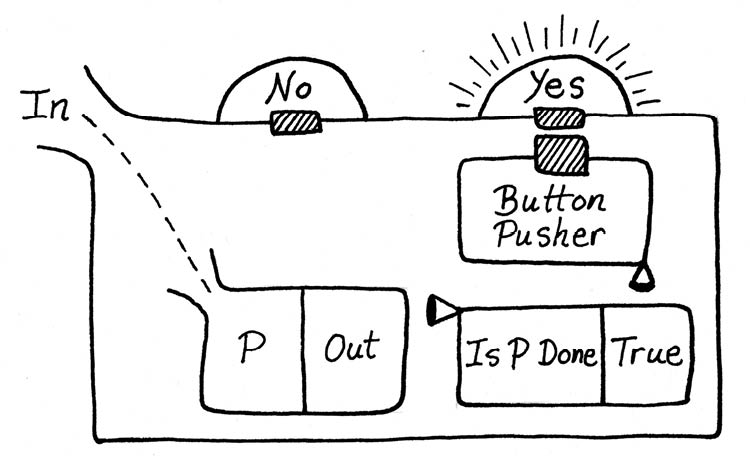

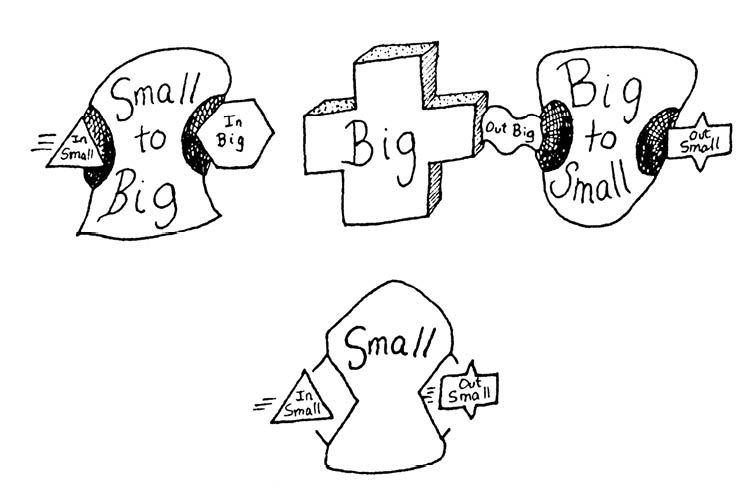

Figure 91: CA Made of Neurons

The dark, curvy eight-pointed stars are the neurons.

This process is familiar to students of the various styles of meditation. In meditation, you’re trying to stay out of the thought loops and to let the trains of thought run on freely without any particular conscious attachment. Meditators are often advised to try to be in the moment, rather than in the past or the future—brooding over the past and worrying about the future are classic types of thought loops. One way to focus on the present is to pay close attention to your immediate body sensations, in particular to be aware of the inward and outward flow of your breath.

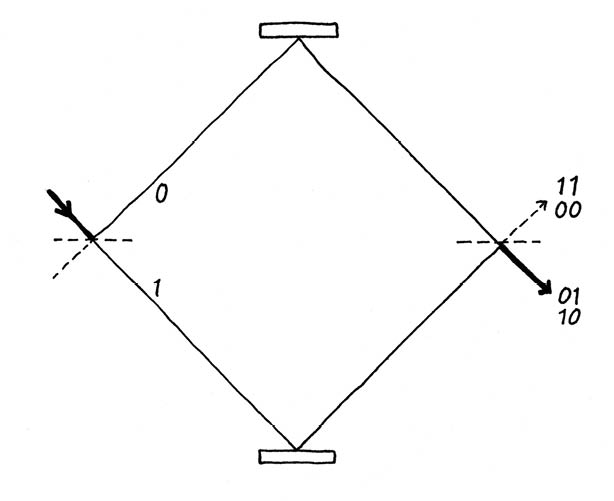

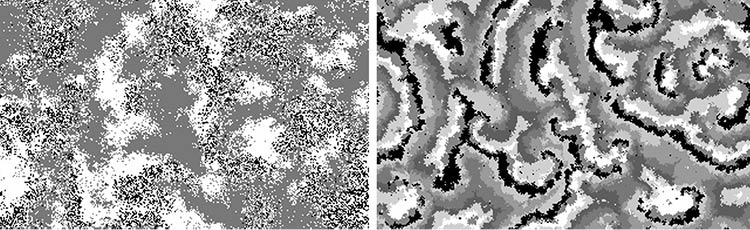

In understanding the distinction between trains of thought and thought loops, it’s useful to consider some computational models.

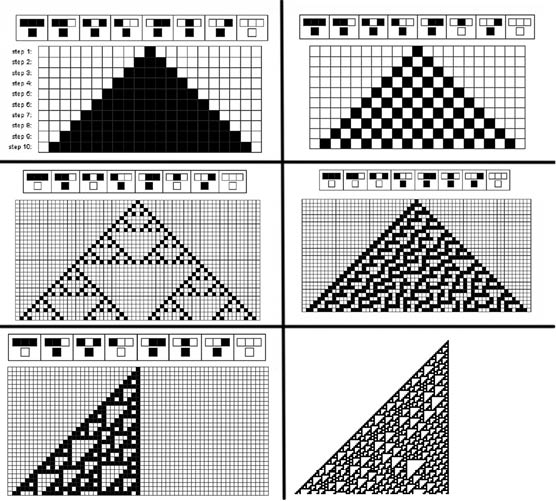

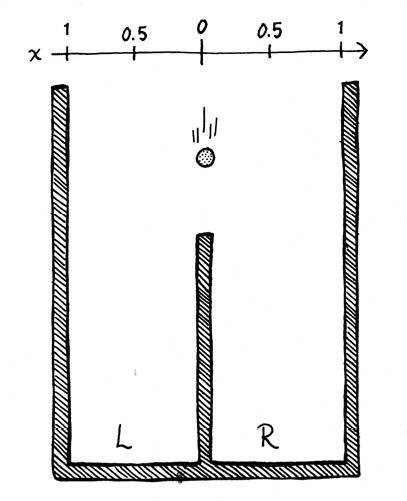

One of the nicest and simplest computer models of thought trains is a cellular automaton rule called Brian’s Brain. It was invented by the cellular automatist and all-around computer-fanatic Brian Silverman.

Brian’s Brain is based on a feature of the brain’s neurons that, for simplicity’s sake, isn’t normally incorporated into the computer-based neural nets described in section 4.2. This additional feature is that actual brain neurons can be tired, out of juice, too pooped to pop. If a brain neuron fires and sends a signal down its axon, then it’s not going to be doing anything but recuperating for the next tenth of a second or so.

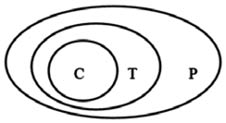

With this notion in mind, Silverman formulated a two-dimensional CA model based on “nerve cells” that have three states: ready, firing, and resting. Rather than thinking of the cells as having distinct input and output lines, the CA model supposes that the component cells have simple two-way links; to further simplify things, we assume that each cell is connected only to its nearest neighbors, as indicated in Figure 91.

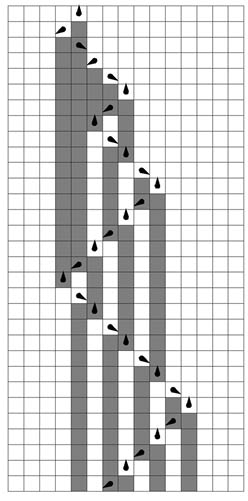

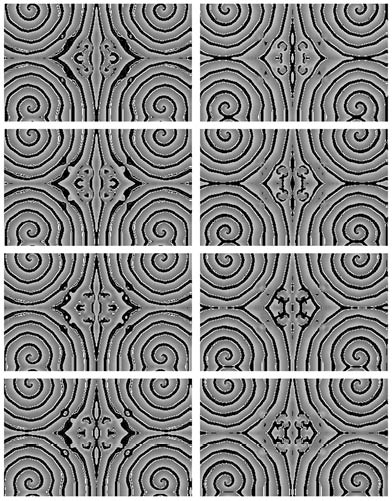

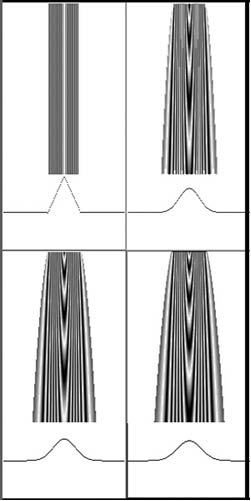

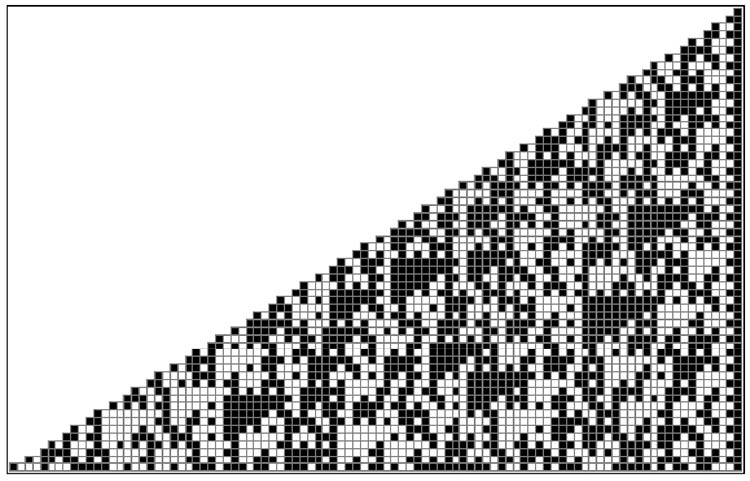

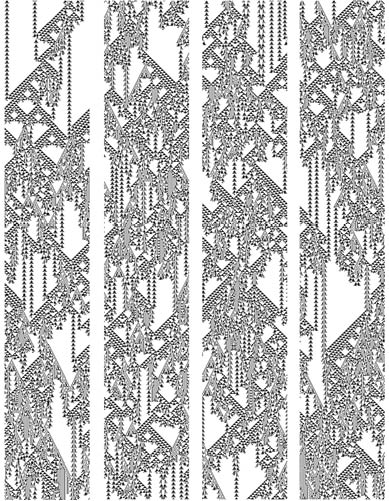

Figure 92: A Glider in the Brian’s Brain Rule

The Brian’s Brain rule updates all the cells in parallel, with a given cell’s update method depending on which state the cell happens to be in.

• Ready. This is the ground state where neurons spend most of their time. At each update, each cell counts how many (if any) of its eight immediate neighbors is in the firing state. If exactly two neighbors are firing, a ready cell switches to the firing state at the next update. In all other cases, a ready cell stays ready.

• Firing. This corresponds to the excited state where a neuron stimulates its neighbors. After being in a firing state, a cell always enters the resting state at the following update.

• Resting. This state follows the firing state. After one update spent in the resting state, a cell returns to the ready state.

The existence of the resting-cell state makes it very easy for moving patterns of activation to form in the Brian’s Brain rule. Suppose, for instance, that we have two light-gray firing cells backed up by two dark-gray resting cells. You might enjoy checking that the pattern will move in the direction of the firing cells, as indicated in Figure 92.
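If you’d rather let a computer do the checking, the three-state rule fits in a short Python sketch. The wraparound edges, the grid size, and the names `step` and `make_glider_grid` are my own choices for illustration; the update logic follows the ready, firing, and resting cases listed above.

```python
READY, FIRING, RESTING = 0, 1, 2

def step(grid):
    # Update every cell in parallel, on a grid whose edges wrap around.
    rows, cols = len(grid), len(grid[0])
    new = [[READY] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            state = grid[r][c]
            if state == FIRING:
                new[r][c] = RESTING          # firing is always followed by resting
            elif state == RESTING:
                new[r][c] = READY            # one update of rest, then ready again
            else:
                firing = sum(
                    grid[(r + dr) % rows][(c + dc) % cols] == FIRING
                    for dr in (-1, 0, 1)
                    for dc in (-1, 0, 1)
                    if (dr, dc) != (0, 0)
                )
                # A ready cell fires only if exactly two of its eight neighbors fire.
                new[r][c] = FIRING if firing == 2 else READY
    return new

def make_glider_grid(col):
    # Two firing cells in column col, backed by two resting cells to their left.
    grid = [[READY] * 8 for _ in range(6)]
    grid[2][col] = grid[3][col] = FIRING
    grid[2][col - 1] = grid[3][col - 1] = RESTING
    return grid

grid = make_glider_grid(3)
moved = step(grid)
print(moved == make_glider_grid(4))   # the whole pattern has shifted one cell
for row in moved:
    print("".join(".#o"[cell] for cell in row))
```

The resting cells are what give the pattern a direction. They block the cells behind the firing pair from catching fire, so the activation can only spread forward.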

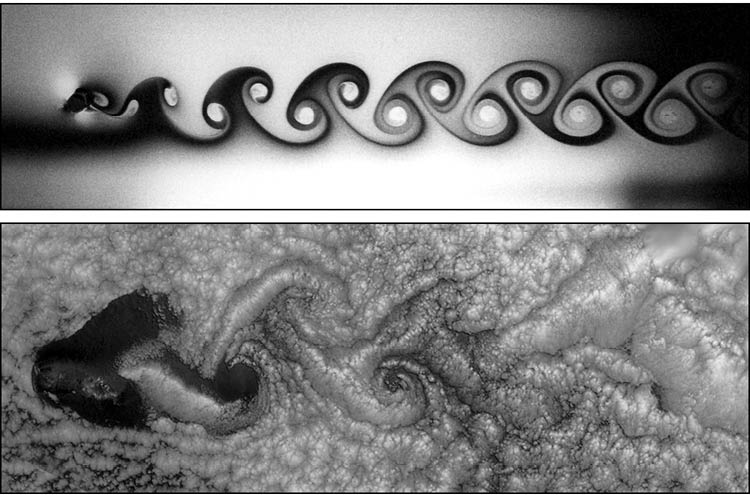

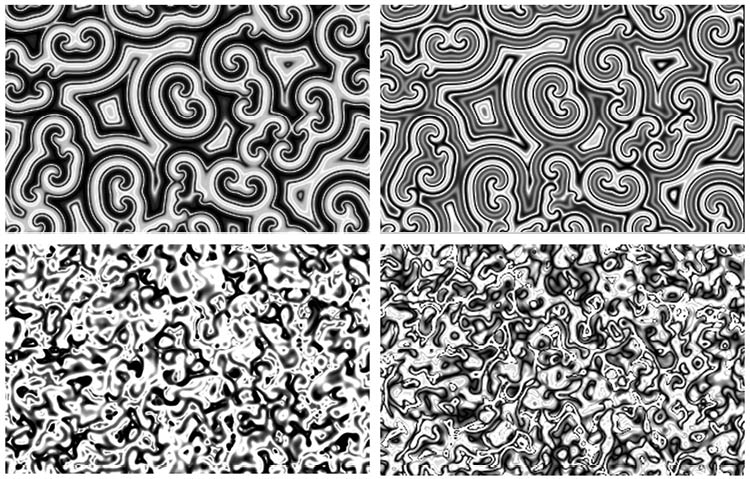

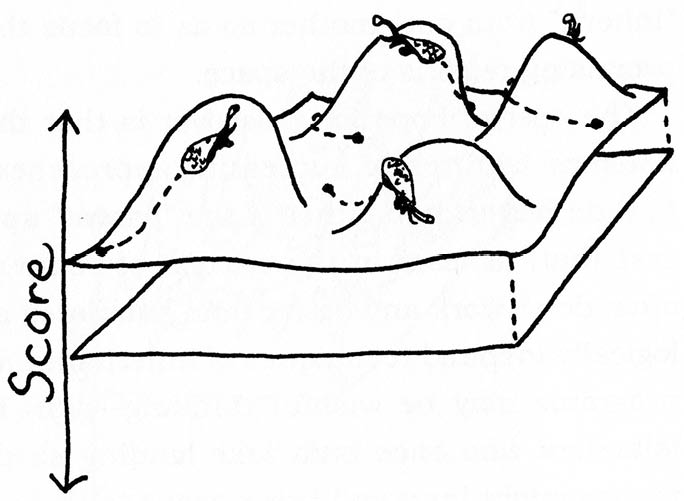

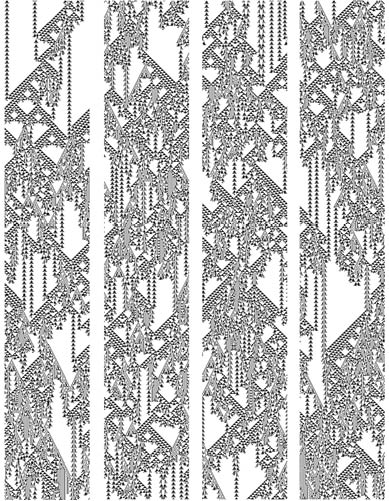

Brian’s Brain is rich in the moving patterns that cellular automatists call gliders. It’s a classic example of a class four computation and is nicely balanced between growth and death. Silverman himself once left a Brian’s Brain simulation running untended on an early Apple computer for a year—and it never died down. The larger gliders spawn off smaller gliders; occasionally gliders will stretch out a long thread of activation between them. As suggested by Figure 93, we find gliders moving in all four of the CA space’s directions, and there are in fact small patterns called butterflies that move diagonally as well.

I don’t think it’s unreasonable to suppose that the thought trains within your brain may result from a process somewhat similar to the endless flow of the gliding patterns in the Brian’s Brain CA. The trains of thought steam along, now and then colliding and sending off new thought trains in their wake.

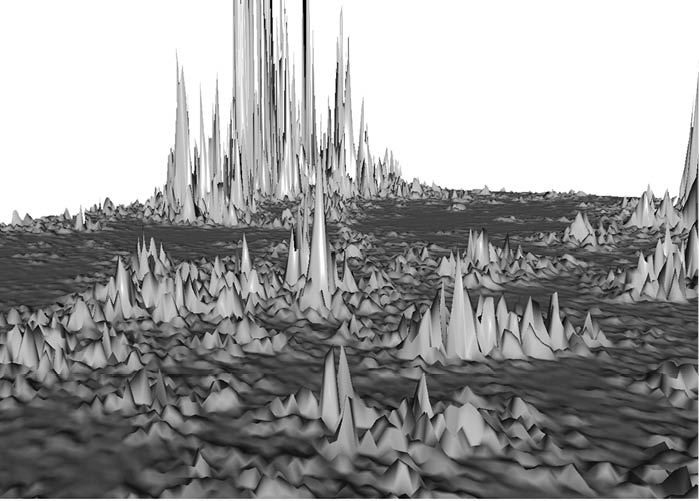

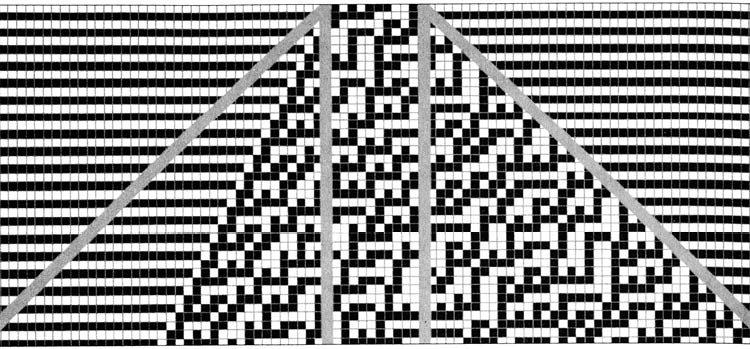

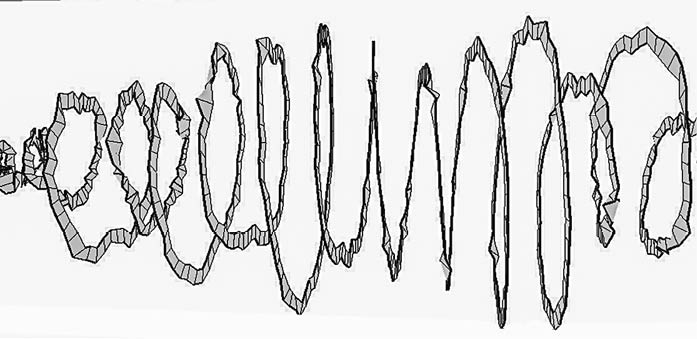

Figure 93: The Brian’s Brain Cellular Automaton

The white, light gray, and dark gray cells are, respectively, in the ready, firing, and resting states. If the picture were animated, you would see the patterns moving horizontally and vertically, with the light gray edges leading the dark gray tails, and with new firing cells dying and being born.83

When some new sensation comes in from the outside, it’s like a cell-seeding cursor-click on a computer screen. A few neurons get turned on, and the patterns of activation and inhibition flow out from there.

A more complicated way to think of thought trains would be to compare the brain’s network of neurons to a continuous-valued CA that’s simulating wave motion, as we discussed back in section 2.2: Everywhere at Once. Under this view, the thought trains are like ripples, and new input is like a rock thrown into a pond.
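Here is a minimal Python sketch of the ripple picture, using a one-dimensional row of real-valued cells. I’m assuming the standard discrete wave scheme, in which each cell’s next value depends on its current value, its previous value, and its two neighbors; the wraparound edges, the `speed` parameter, and the name `wave_step` are illustrative choices and may differ in detail from the rule in section 2.2.

```python
def wave_step(old, cur, speed=0.5):
    # Next value = 2 * current - previous + speed^2 * (left + right - 2 * current).
    n = len(cur)
    return [
        2 * cur[i] - old[i]
        + speed ** 2 * (cur[(i - 1) % n] + cur[(i + 1) % n] - 2 * cur[i])
        for i in range(n)
    ]

cells = [0.0] * 40
cells[20] = 1.0            # the rock thrown into the pond
old, cur = cells, cells    # the surface starts out at rest
history = [cur]
for _ in range(30):
    old, cur = cur, wave_step(old, cur)
    history.append(cur)

for row in history[::5]:
    print("".join("+" if v > 0.05 else "-" if v < -0.05 else "." for v in row))
```

The single disturbance spreads outward in both directions, which is the behavior the pond analogy relies on.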

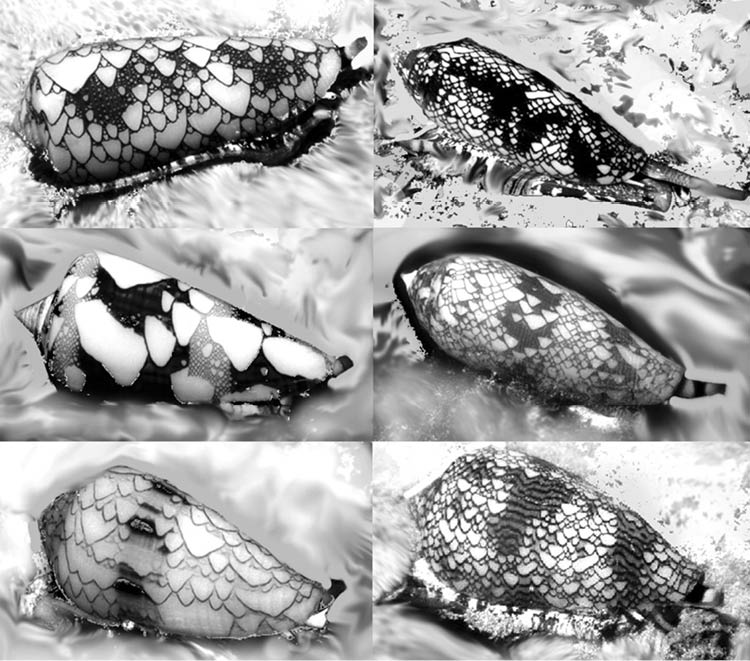

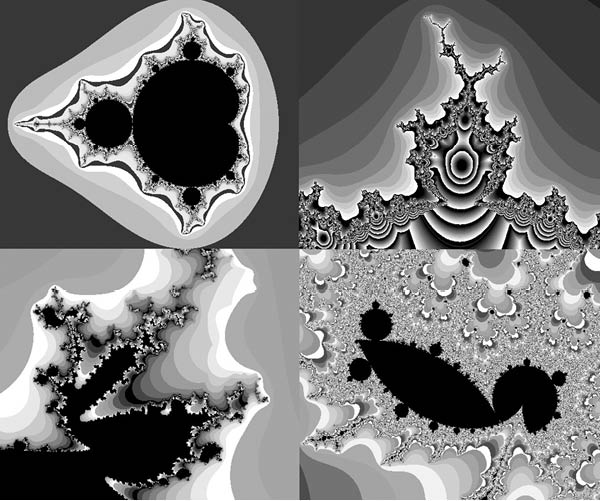

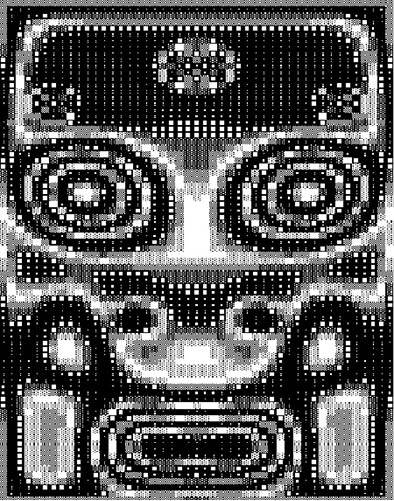

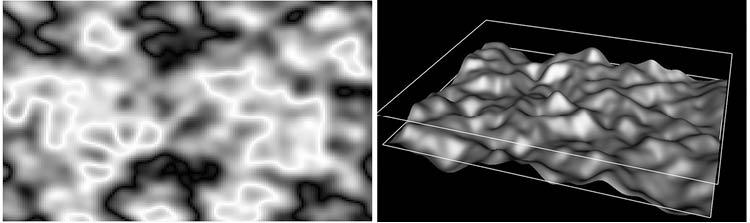

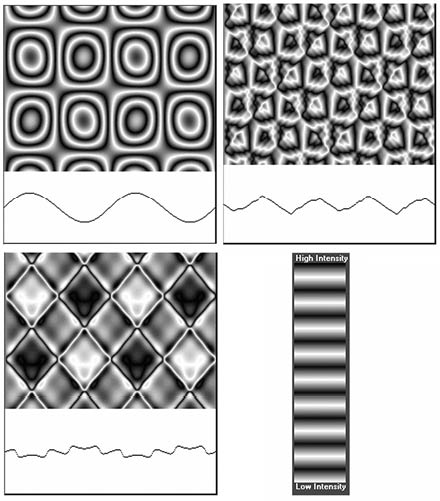

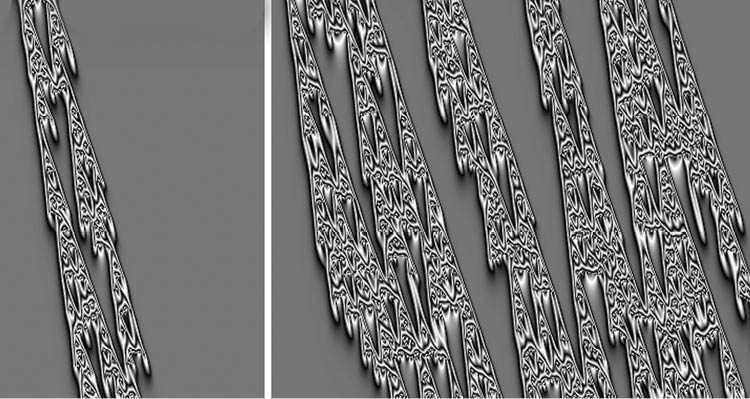

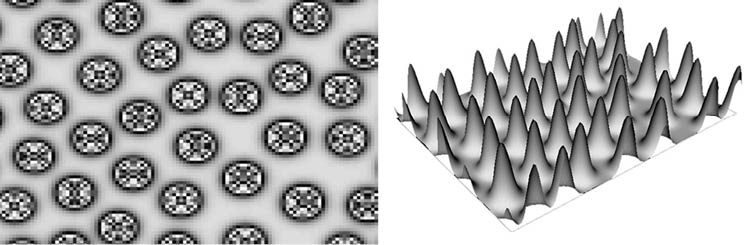

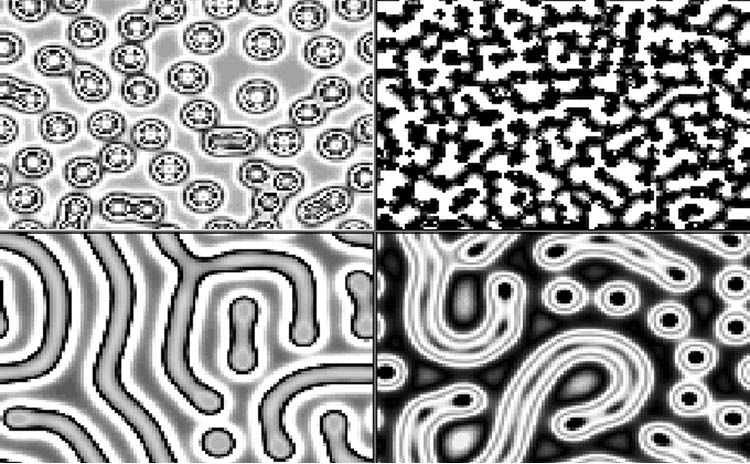

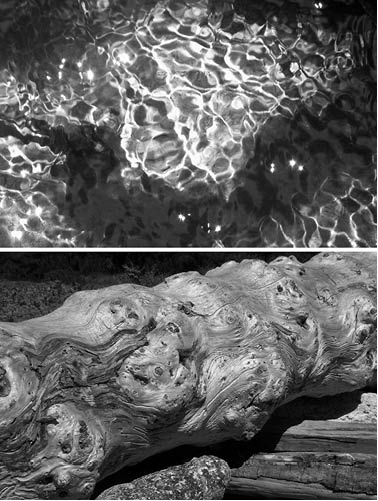

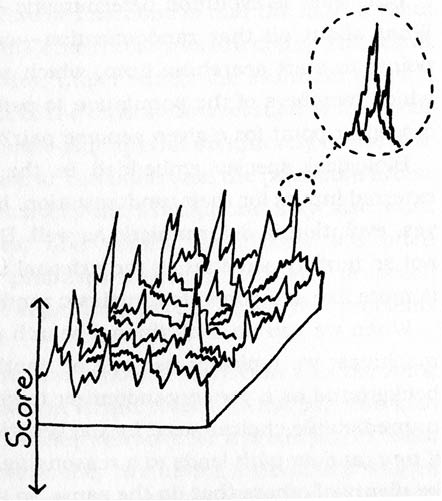

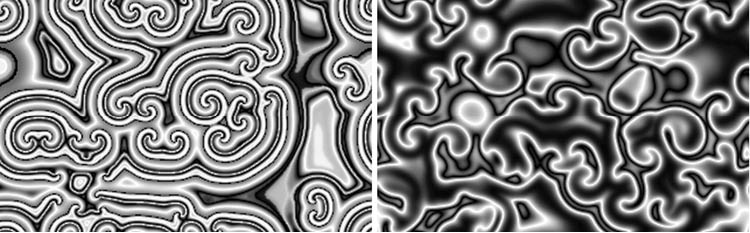

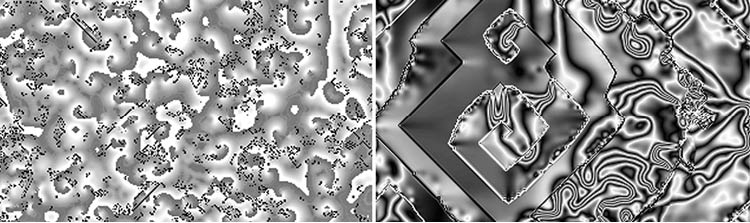

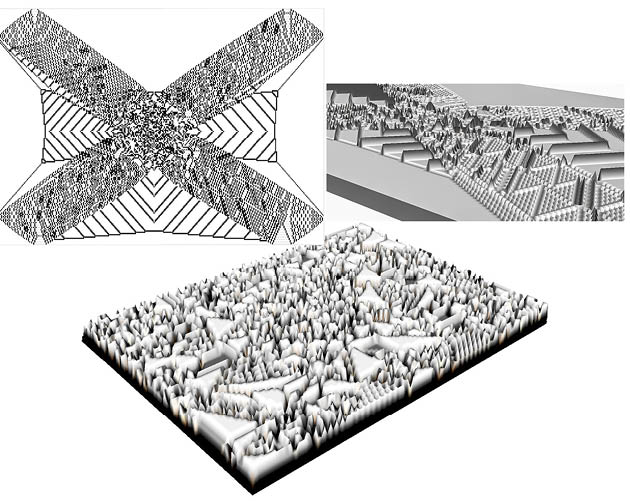

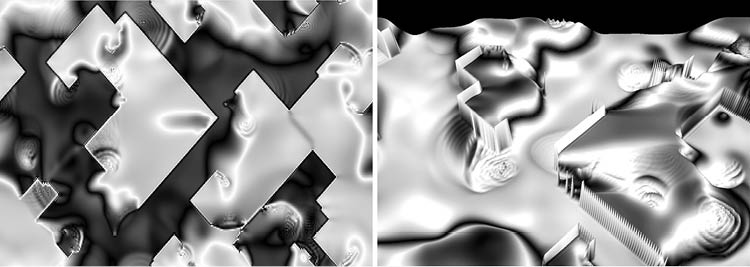

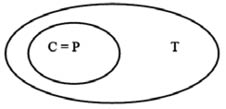

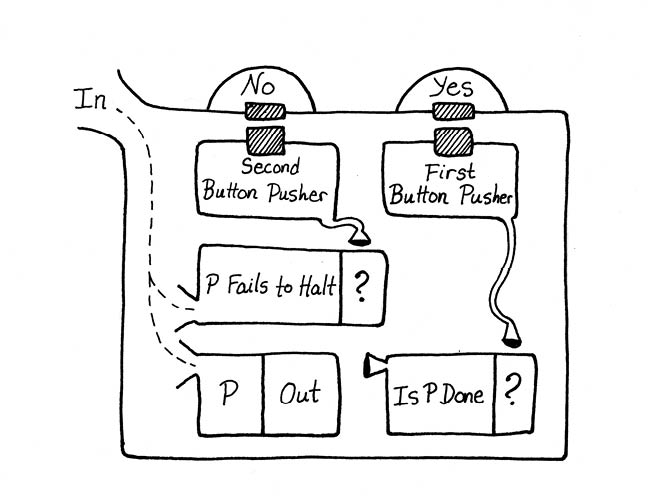

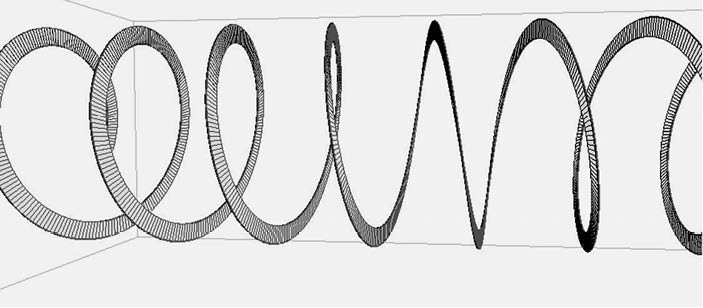

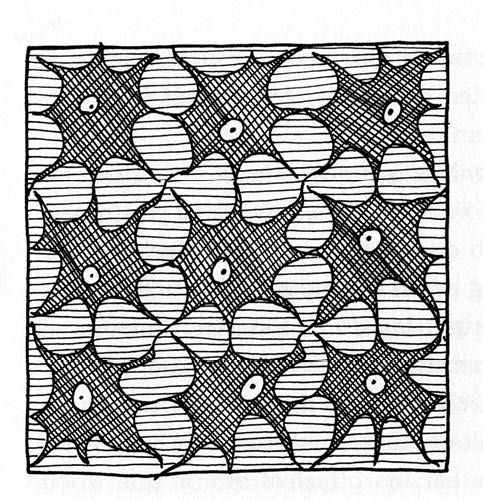

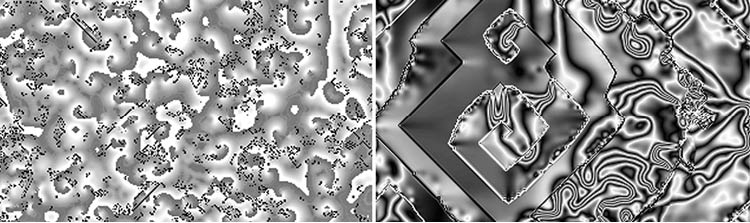

What about recurrent thoughts—the topics that you obsess upon, the mental loops that you circle around over and over? Here another kind of CA rule comes to mind: Zhabotinsky-style scrolls. You may recall that we’ve already discussed these ubiquitous forms both in the context of morphogenesis and in connection with ecological simulations of population levels. I show yet another pair of these images in Figure 94.

Figure 94: More CA Scroll Patterns

These two images were generated using variations of continuous-valued activator-inhibitor rules suggested by, respectively, Arthur Winfree and Hans Meinhardt.

Scroll-type patterns often form when we have an interaction between activation and inhibition, which is a good fit for the computations of the brain’s neurons. And, although I haven’t yet mentioned the next fact, it’s also the case that scroll patterns most commonly form in systems where the individual cells can undergo a very drastic change from a high level of activation to a low level—which is also a good fit for neuronal behavior. A neuron’s activation levels rise to a threshold value, it fires, and its activation abruptly drops.

Recall that when CAs produce patterns like Turing stripes and Zhabotinsky scrolls, we have the activation and inhibition diffusing at different rates. I want to point out that the brain’s activation and inhibition signals may also spread at different rates. Even if all neural activation signals are sent down axons at the same rate, axons of different length take longer to transmit a signal. And remember that there’s a biochemical element to the transmission of signals across synapses, so the activator and inhibitor substances may spread and take effect at different rates.

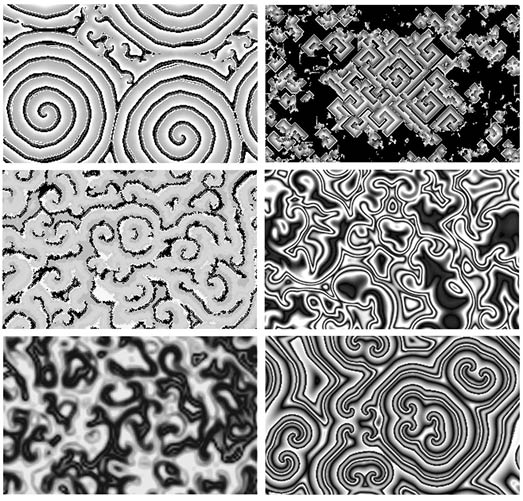

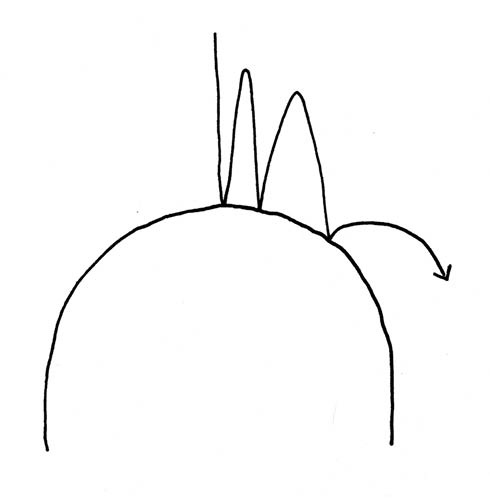

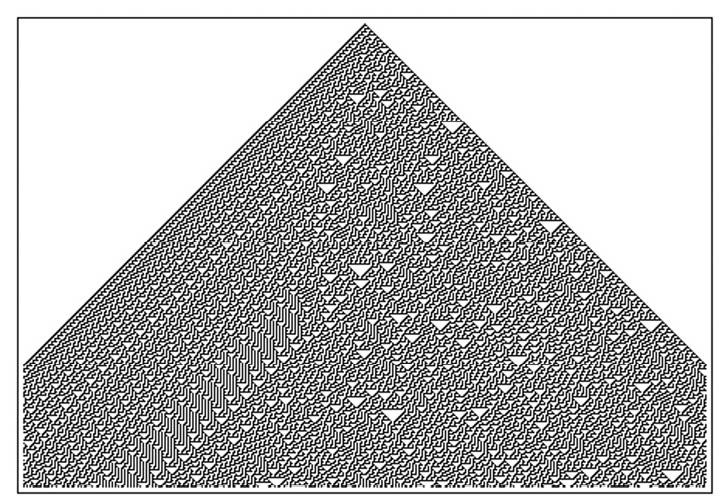

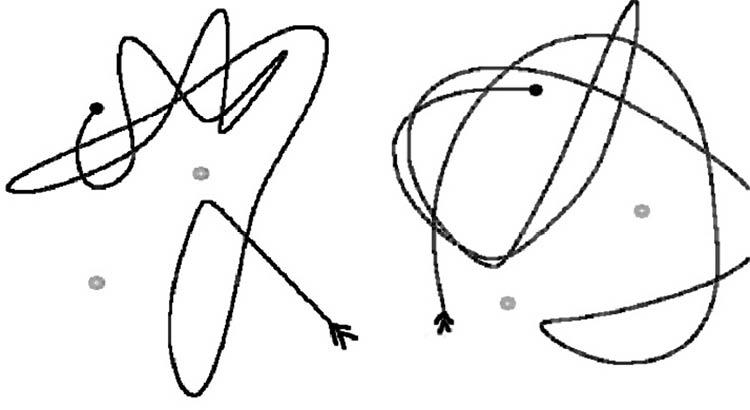

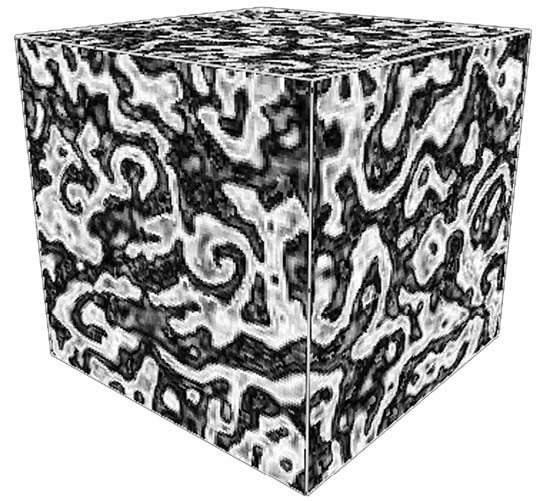

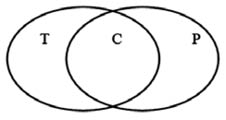

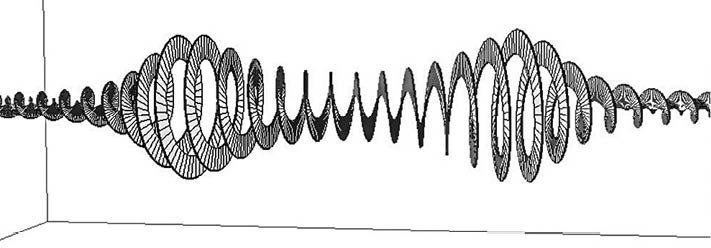

Summing up, I see my thought patterns as being a combination of two types of processes: a discrete gliderlike flow of thought trains overlaid upon the smoother and more repetitive cycling of my thought loops. The images in Figure 95 capture something of what I have in mind.

Figure 95: Cellular Automaton Patterns Like a Mind with Thoughts and Obsessions

The first image shows the Brain Hodge rule, where discrete Brian’s Brain gliders cruise across a sea of Hodgepodge scrolls. The cells hold two activators, one for each rule, and the Brain activator stimulates the Hodgepodge activator. The second image shows the Boiling Cubic Wave rule. Here we have a nonlinear wave-simulating rule that makes ripples akin to thought loops. The nonlinearity of the wave is a value that varies from cell to cell and obeys a driven heat rule, producing a “boiling” effect like moving trains of thought layered on top of the wavy thought loops. As it so happens, the thought trains have the ability to bend around into square scrolls.

I’ll confess that neither of these images precisely models my conception of brain activity—I’d really prefer to see the gliders etching highways into the scrolls and to see the dense centers and intersections of the scrolls acting as seed points for fresh showers of gliders. But I hope the images give you a general notion of what I have in mind when I speak of thought trains moving across a background of thought loops.

I draw inspiration from the distinction between fleeting trains of thought and repeating thought loops. When I’m writing, I often have a fairly clear plan for the section I’m working on. As I mentioned above, this plan is a thought loop that I’ve been rehearsing for a period of time. But it sometimes happens that once I’m actually doing the writing, an unexpected train of thought comes plowing past. I treat such unexpected thoughts as gifts from the muse, and I always give them serious consideration.

I remember when I was starting out as a novelist, I read an advice-to-writers column where an established author said something like, “From time to time, you’ll be struck with a completely crazy idea for a twist in your story. A wild hair that totally disrupts what you had in mind. Go with it. If the story surprises you, it’ll surprise the reader, too.” I never forgot that advice.

This said, as a practical matter, I don’t really work in every single oddball thought I get, as at some point a work can lose its coherence. But many of the muse’s gifts can indeed be used.

Who or what is the muse? For now, let’s just say the muse is the unpredictable but deterministic evolution of thought trains from the various inputs that you happen to encounter day to day. The muse is a class four computation running in your brain.

People working in any kind of creative endeavor can hear the muse. You might be crafting a novel, an essay, a PowerPoint presentation, a painting, a business proposal, a computer program, a dinner menu, a decoration scheme, an investment strategy, or a travel plan. In each case, you begin by generating a thought loop that describes a fairly generic plan. And over time you expand and alter the thought loop. Where do the changes come from? Some of them are reached logically, as predictable class two computations closely related to the thought loop. But the more interesting changes occur to you as unexpected trains of thought. Your plan sends out showers of gliders that bounce around your neuronal space, eventually catching your attention with new configurations. Straight from the muse.

One seeming problem with comparing thoughts to moving patterns in cellular automata is that the space of brain neurons isn’t in fact structured like a CA. Recall that axons can be up to a meter long, and information flow along an axon is believed to be one-way rather than two-way.

But this isn’t all that big a problem. Yes, the connectivity of the brain neurons is more intricate than the connectivity of the cells in a CA. But our experience with the universality of computational processes suggests that the same general kinds of patterns and processes that we find in CAs should also occur in the brain’s neural network. We can expect to find class one patterns that die out, class two patterns that repeat, chaotic class three patterns, rapidly moving gliderlike class four patterns, and the more slowly moving scroll-like class four patterns.

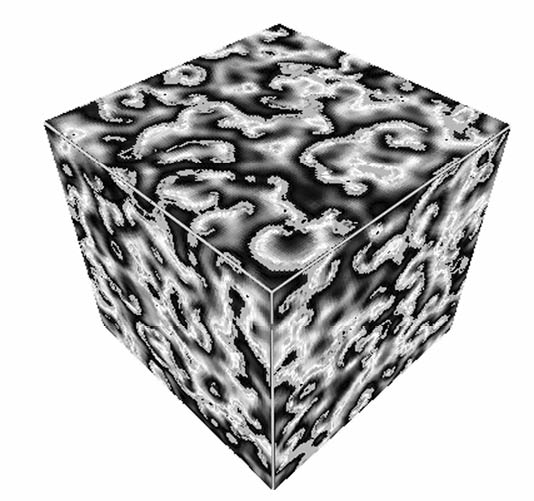

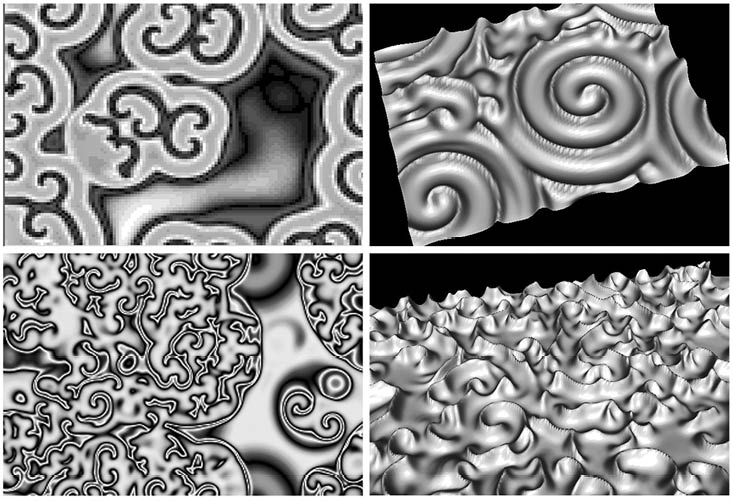

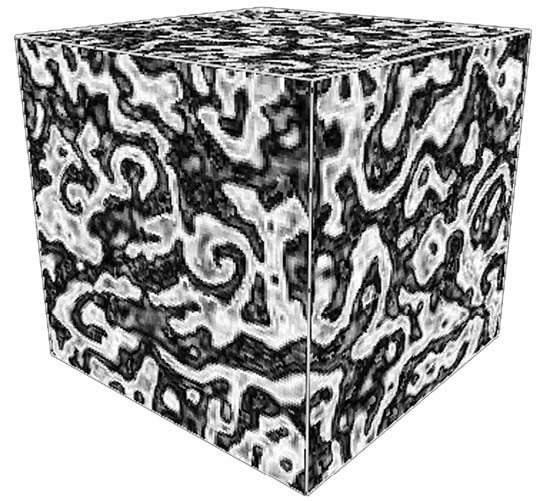

Visualize a three-dimensional CA running a CA rule rich in gliders and scrolls (see Figure 96). Think of the cells as nodes connected by strings to their neighbors. Now stretch a bunch of the strings and scramble things around, maybe drop the mess onto a tabletop, and shuffle it. Then paste the tangle into a two-foot-square mat that’s one-eighth of an inch thick. Your neocortex! The glider and scroll patterns are still moving around in the network, but due to the jumbled connections among the cells, a brain scan won’t readily reveal the moving patterns. The spatial arrangement of the neurons doesn’t match their connectivity. But perhaps some researchers can notice subtler evidences of the brain’s gliders and scrolls.

Figure 96: Another Three-Dimensional CA with Scrolls

Here’s a Winfree-style CA running on a three-dimensional array of cells. The surface patterns change rather rapidly, as buried scrolls boil up and move across it. Gnarly, dude!

At this point we’re getting rather close to the synthesizing “Seashell” element of The Lifebox, the Seashell, and the Soul. To be quite precise, I’m proposing that the brain is a CA-like computer and that the computational patterns called gliders and scrolls are the basis of our soulful mental sensations of, respectively, unpredictable trains of thought and repetitive thought-loops.

If this is true, does it make our mental lives less interesting? No. From whence, after all, could our thoughts come, if not from neuronal stimulation patterns? From higher-dimensional ectoplasm? From telepathic dark matter? From immortal winged souls hovering above the gross material plane? From heretofore undetected subtle energies? It’s easier to use your plain old brain.

Now, don’t forget that many or perhaps most complex computations are unpredictable. Yes, our brains might be carrying out computations, but that doesn’t mean they’ll ever cease surprising us.

I’m not always as happy as I’d like to be, and the months when I’ve been working on this chapter have been especially challenging. My joints and muscles pain me when I program or write, I had the flu for a solid month, my wife’s father has fallen mortally ill, my country’s mired in war, I’m anxious about finding a way to retire from the grind of teaching computer science, and frankly I’m kind of uptight about pulling off this rather ambitious book. I could complain for hours! I’m getting to be an old man.

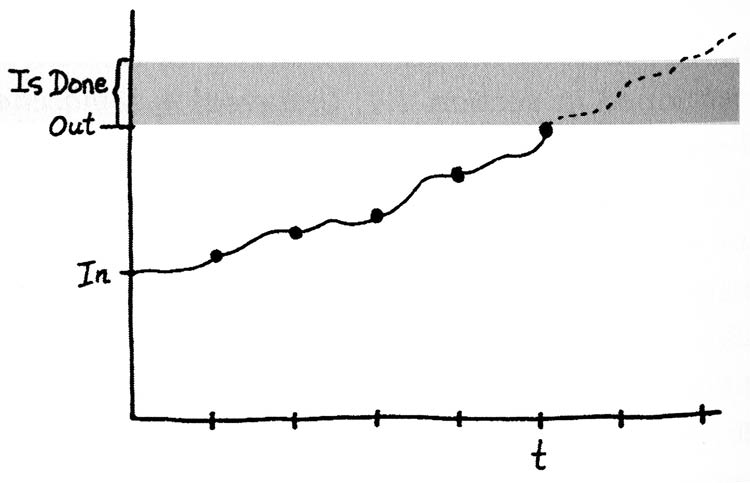

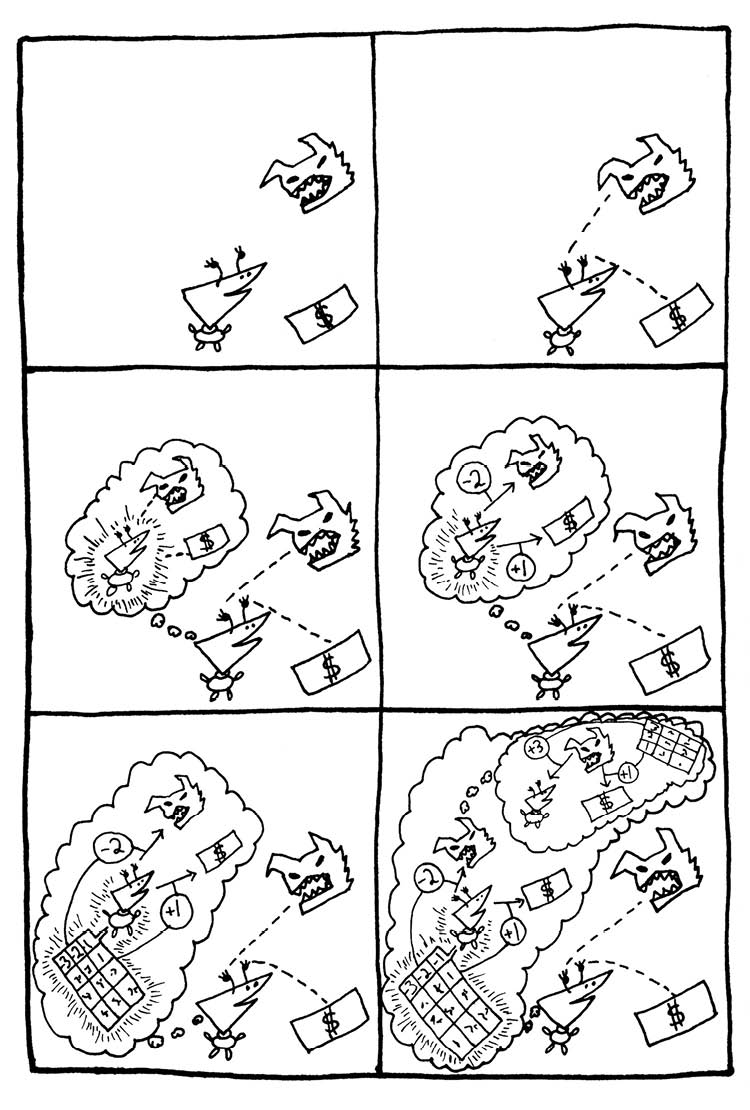

Scientist that I am, I dream that a deeper understanding of the mind might improve my serenity. If I had a better model of how my mind works, maybe I could use my enhanced understanding to tweak myself into being happy. So now I’m going to see if thinking in terms of computation classes and chaotic attractors can give me any useful insights about my moods. I already said a bit about this in section 3.3: Surfing Your Moods, but I want to push it further.

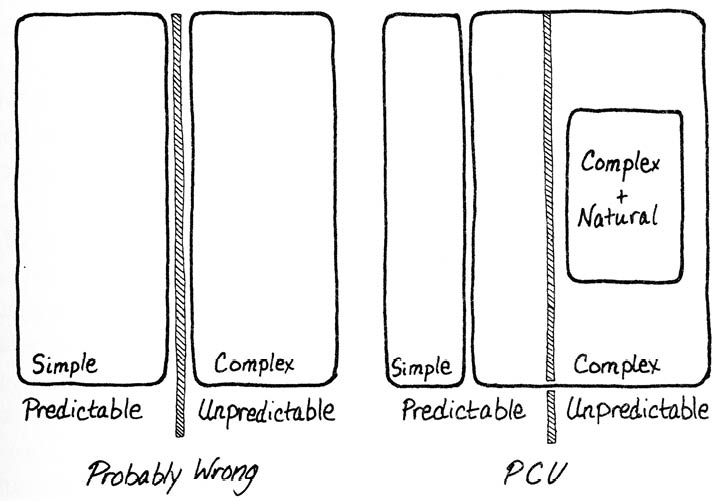

Rather than calling the mind’s processes class four computations, we can also refer to them as being chaotic. Although a chaotic process is unpredictable in detail, one can learn the overall range of behaviors that the system will display. This range is, again, what we call a chaotic attractor.

A dynamic process like the flow of thought wanders around a state space of possibilities. To the extent that thought is a computation, the trajectory differs from random behavior in two ways. First of all, the transitions from one moment to the next are deterministic. And secondly, at any given time the process is constrained to a certain range of possibilities—the chaotic attractor. In the Brian’s Brain CA, for instance, the attractor is the behavior of having gliders moving about with a characteristic average distance between them. In a scroll, the attractor is the behavior of pulsing out rhythmic bands.
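To make the Brian’s Brain example a little more concrete, here is a minimal sketch in Python. It assumes the usual three-state form of the rule, which isn’t spelled out in this passage: a firing cell becomes refractory, a refractory cell goes quiet, and a quiet cell fires when exactly two of its eight neighbors are firing. The grid size, step count, seed densities, and function names are illustrative choices of mine, not anything fixed by the text.

```python
import random

OFF, FIRING, REFRACTORY = 0, 1, 2


def step(grid):
    """Advance a Brian's Brain grid one generation, wrapping at the edges."""
    n = len(grid)
    new = [[OFF] * n for _ in range(n)]
    for r in range(n):
        for c in range(n):
            state = grid[r][c]
            if state == FIRING:
                new[r][c] = REFRACTORY
            elif state == REFRACTORY:
                new[r][c] = OFF
            else:
                # Count firing cells among the eight surrounding neighbors.
                firing_neighbors = sum(
                    grid[(r + dr) % n][(c + dc) % n] == FIRING
                    for dr in (-1, 0, 1)
                    for dc in (-1, 0, 1)
                    if (dr, dc) != (0, 0)
                )
                if firing_neighbors == 2:
                    new[r][c] = FIRING
    return new


def firing_fraction(grid):
    """Fraction of cells currently firing: a crude one-number summary."""
    n = len(grid)
    return sum(row.count(FIRING) for row in grid) / (n * n)


def run(seed_density, size=30, steps=100, rng_seed=0):
    """Seed a random grid and record the firing fraction after each step."""
    rng = random.Random(rng_seed)
    grid = [
        [FIRING if rng.random() < seed_density else OFF for _ in range(size)]
        for _ in range(size)
    ]
    history = []
    for _ in range(steps):
        grid = step(grid)
        history.append(firing_fraction(grid))
    return history


if __name__ == "__main__":
    for density in (0.1, 0.3, 0.5):
        tail = run(density)[-25:]
        print(density, round(sum(tail) / len(tail), 3))
```

The thing to look for is whether quite different starting densities drift toward a similar long-run firing fraction. That shared range is a rough numerical stand-in for the attractor, though on a small grid a particular run may also simply die out.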

As I mentioned in section 2.4: The Meaning of Gnarl, a class one computation homes in on a final conclusion, which acts as a point-like attractor. A class two computation repeats itself, cycling around on an attractor that’s a smooth, closed hypersurface in state space. Class three processes are very nearly random and have fuzzy attractors filling their state space. Most relevant for the analysis of mind are our elaborate class four trains of thought, flickering across their attractors like fish around tropical reefs.

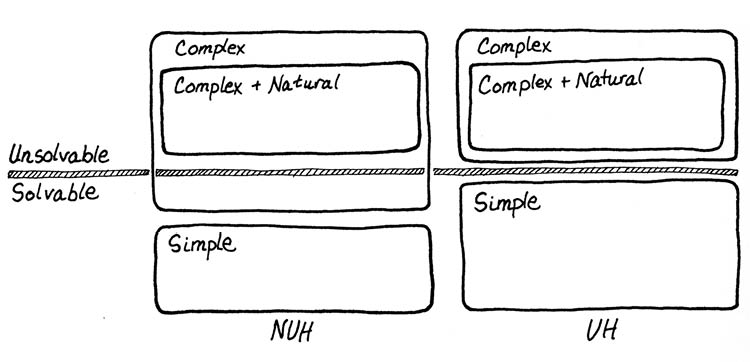

If you happen to be faced with a problem that actually has a definite solution, your thought dynamics can in fact take on a class one form, closing in on the unique answer. But life’s more galling problems are in fact insoluble, and grappling with a problem like this is likely to produce a wretched class two cycle.

Taking a fresh look at a thought loop gets you nowhere. By way of illustration, no matter what brave new seed pattern you throw into a scroll-generating CA, the pattern will always be eaten away as the natural attractor of the loop reasserts itself.

So how do we switch away from unpleasant thoughts? The only real way to escape a thought loop is to shift your mind’s activity to a different attractor, that is, to undergo a chaotic bifurcation, as I put it in section 2.4. We change attractors by altering some parameter or rule of the system so as to move to a different set of characteristic behaviors. With regard to my thoughts, I see two basic approaches: reprogramming or distraction.
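The idea that altering a parameter moves a system onto a different attractor can be seen in a toy model. The sketch below uses the logistic map, a standard textbook example of bifurcation that I’m swapping in here as a stand-in; it is not a model of the brain, and the parameter values, the rounding precision, and the function names are my own assumptions.

```python
def logistic_orbit(r, x0=0.2, warmup=2000, keep=64):
    """Iterate x -> r*x*(1-x), discard the transient, return the long-run orbit."""
    x = x0
    for _ in range(warmup):
        x = r * x * (1 - x)
    orbit = []
    for _ in range(keep):
        x = r * x * (1 - x)
        orbit.append(x)
    return orbit


def attractor_size(r, digits=4):
    """Count the distinct values (after rounding) that the long-run orbit visits."""
    return len({round(x, digits) for x in logistic_orbit(r)})


if __name__ == "__main__":
    print(attractor_size(2.8))  # settles onto a single point
    print(attractor_size(3.2))  # cycles between two values
    print(attractor_size(3.9))  # wanders over many values
```

Nothing about the rule changes between the three runs except the parameter `r`, yet the long-run behavior shifts from a point-like attractor to a repeating cycle to a chaotic spread. That is the sense in which a small tweak to a system’s rules can carry it to a different set of characteristic behaviors.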

Reprogramming is demanding and it takes time. Here I try to change the essential operating rules of the processes that make my negative thought-loops painful. Techniques along these lines include: learning to accept irremediable situations just as they are; anticipating and forestalling my standard emotional reactions to well-known recurring trigger events; letting go of my expectations about how the people around me ought to behave; and releasing my attachment to certain hoped-for outcomes. None of these measures comes easily.

I always imagine that over time, by virtue of right thinking and proper living, I’ll be able to inculcate some lasting changes into my synapses or neurotransmitters. I dream that I’ll be able to avoid the more lacerating thought-loops for good. But my hard-won equilibrium never lasts. Eventually I fall off the surfboard into the whirlpools.

Our society periodically embraces the belief that one might attain permanent happiness by taking the right drugs; think of psychedelics in the sixties and antidepressants in the Y2K era. Drugs affect the brain’s computations, not by altering the software, but by tweaking the operational rules of the underlying neural hardware. The catch is that, given the unpredictable nature of class four computation, the effects of drugs can be different from what they’re advertised to be. At this point in my life, my preference is to get by without drugs. My feeling is that the millennia-long evolution of the human brain has provided for a rich enough system to produce unaided any state I’m interested in. At least in my case, drugs can in fact lead to a diminished range of possibilities. This said, I do recognize that, in certain black moods, any theorizing about attractors and computation classes becomes utterly beside the point, and I certainly wouldn’t gainsay the use of medication for those experiencing major depression.

Distraction is an easier approach. Here you escape a problem by simply forgetting about it. And why not? Why must every problem be solved? Your mind’s a big place, so why limit your focus to its least pleasant corners? When I want to come to my senses and get my attention away from, say, a mental tape of a quarrel, I might do it by hitching a ride on a passing thought train. Or by paying attention to someone other than myself. Altruism has its rewards. Exercise, entertainment, or excursions can help change my mood.

Both reprogramming and distraction reduce to changing the active attractor. Reprogramming myself alters the connectivity or chemistry of my brain enough so that I’m able to transmute my thought loops into different forms with altered attractors. Distracting myself and refocusing my attention shifts my conscious flow of thoughts to a wholly different attractor.

If you look at an oak tree and a eucalyptus tree rocking in the wind, you’ll notice that each tree’s motion has its own distinct set of attractors. In the same way, different people have their own emotional weather, their particular style of response, thought, and planning. This is what we might call their sensibility, personality, or disposition.

Having raised three children, it’s my impression that, to a large degree, their dispositions were fairly well fixed from the start. For that matter, my own basic response patterns haven’t changed all that much since I was a boy. One’s mental climate is an ingrained part of the body’s biochemistry, and the range of attractors available to an individual brain is not so broad as one might hope.

In one sense, this is a relief. You are who you are, and there’s no point agonizing about it. My father took to this insight in his later life and enjoyed quoting Popeye’s saying: “I yam what I yam.” Speaking of my father, as the years go by, I often notice aspects of my behavior that remind me of him or of my mother—and I see the same patterns yet again in my children. Much of one’s sensibility consists of innate hereditary patterns of the brain’s chemistry and connectivity.

In another sense, it’s disappointing not to be able to change one’s sensibility. We can tweak our moods somewhat via reprogramming, distraction, or psychopharmacology. But making a radical change is quite hard.

As I type this on my laptop, I’m sitting at my usual table in the Los Gatos Coffee Roasting cafe. On the cafe speakers I hear Jackson Browne singing his classic road song, “Take It Easy,” telling me not to let the sound of my own wheels drive me crazy. It seems to fit the topic of thought loops, so I write it in. This gift from the outside reminds me that perhaps there’s a muse bigger than anything in my own head. Call it God, call it the universe, call it the cosmic computation that runs forward and backward through all of spacetime.

Just now it seems as if everything’s all right. And so what if my exhilaration is only temporary? Life is, after all, one temporary solution after another. Homeostasis.

4.4: “I Am”

In section 4.1: Sensational Emotions, I observed that our brain functions are intimately related to the fact that we have bodies living in a real world; in section 4.2: The Network Within, I discussed how responses can be learned in the form of weighted networks of neural synapses; and in section 4.3: Thoughts as Gliders and Scrolls, I pointed out that the brain’s overall patterns of activation are similar to the gliders and scrolls of CAs.

In this section I’ll talk about who or what is experiencing the thoughts. But before I get to that, I want to say a bit about the preliminary question of how it is that a person sees the world as made of distinct objects, one of which happens to be the person in question.

Thinking in terms of objects gives you an invaluable tool for compressing your images of the world, allowing you to chunk particular sets of sensations together. The ability to perceive objects isn’t something a person learns; it’s a basic skill that’s hardwired into the neuronal circuitry of the human brain.

We take seeing objects for granted, but it’s by no means a trivial task. Indeed, one of the outstanding open problems in robotics is to design a computer vision program that can take camera input and reliably pick out the objects in an arbitrary scene. By way of illustration, when speaking of chess-playing robots, programmers sometimes say that playing chess is the easy part and recognizing the pieces is the hard part. This is initially surprising, as playing chess is something that people have to laboriously learn—whereas the ability to perceive objects is something that people get for free, thanks to being born with a human brain.

In fact, the hardest tasks facing AI involve trying to emulate the fruits of evolution’s massive computations. Putting it a bit differently, the built-in features of the brain are things that the human genome has phylogenetically learned via evolution. In effect evolution has been running a search algorithm across millions of parallel computing units for millions of years, dwarfing the learning done by an individual brain during the pitifully short span of a single human life. The millennia of phylogenetic learning are needed because it’s very hard to find a developmental sequence of morphogens capable of growing a developing brain into a useful form. Biological evolution solved the problem, yes, but can our laboratories?

In limited domains, man-made programs do of course have some small success in recognizing objects. Machine vision uses tricks such as looking for the contours of objects’ edges, picking out coherent patches of color, computing the geometric ratios between a contour’s corners, and matching these features against those found in a stored set of reference images. Even so, it’s not clear if the machine approaches we’ve attempted are in fact the right ones. The fact that our hardware is essentially serial tends to discourage us from thinking deeply enough about the truly parallel algorithms used by living organisms. And using search methods to design the parallel algorithms takes prohibitively long.
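As a small illustration of the first of these tricks, looking for the contours of objects’ edges, here is a deliberately crude sketch in Python. It assumes a grayscale image stored as a list of rows of brightness values, and it marks a pixel as an edge when its brightness differs sharply from the pixel to its right or the pixel below it. The function name, the threshold, and the tiny test image are all hypothetical; practical vision systems use far more robust filters.

```python
def edge_map(image, threshold=50):
    """Mark pixels whose brightness jumps sharply toward the right or lower neighbor."""
    rows, cols = len(image), len(image[0])
    edges = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Clamp at the border so the last row and column compare with themselves.
            right = image[r][min(c + 1, cols - 1)]
            below = image[min(r + 1, rows - 1)][c]
            dx = abs(right - image[r][c])
            dy = abs(below - image[r][c])
            if max(dx, dy) >= threshold:
                edges[r][c] = 1
    return edges


if __name__ == "__main__":
    # A dark 6x6 scene containing one bright 2x2 "object".
    scene = [[10] * 6 for _ in range(6)]
    for r in (2, 3):
        for c in (2, 3):
            scene[r][c] = 200
    for row in edge_map(scene):
        print("".join("#" if e else "." for e in row))
```

Even this toy version hints at why the problem is hard: the code finds brightness jumps, not objects. Deciding which jumps belong together as the outline of a single thing is the part that evolution handed us for free.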

In any case, thanks to evolution, we humans see the world as made up of objects. And of all the objects in your world, there’s one that’s most important to you: your own good self. At the most obvious level, you pay special attention to your own body because that’s what’s keeping you alive. But now let’s focus on something deeper: the fact that your self always seems surrounded by a kind of glow. Your consciousness. What is it?

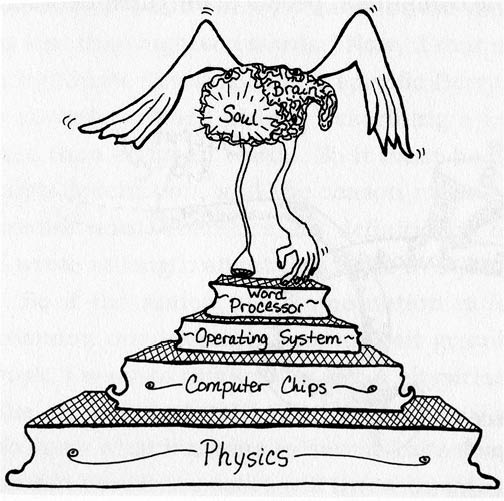

At first we might suppose that consciousness is a distinct extra element, with a person then being made of three parts:

• The hardware, that is, the physical body and brain.

• The software, including memories, skills, opinions, and behavior patterns.

• The glow of consciousness.

What is that “glow” exactly? Partly it’s a sense of being yourself, but it’s more than that: It’s a persistent visceral sensation of a certain specific kind; a warmth, a presence, a wordless voice forever present in your mind. I think I’m safe in assuming you know exactly what I mean.

I used to be of the opinion that this core consciousness is simply the bare feeling of existence, expressed by the primal utterance, “I am.” I liked the fact that everyone expresses their core consciousness in the same words: “I am. I am me. I exist.” This struck me as an instance of what my ancestor Hegel called the divine nature of language. And it wasn’t lost on me that the Bible reports that after Moses asked God His name, “God said to Moses, ‘I AM WHO I AM’; and He said, ‘Thus you shall say to the sons of Israel, I AM has sent me to you.’ ” I once discussed this with Kurt Gödel, by the way, and he said the “I AM” in Exodus was a mistranslation! Be that as it may. I used to imagine my glow of consciousness to be a divine emanation from the cosmic One, without worrying too much more about the details.84

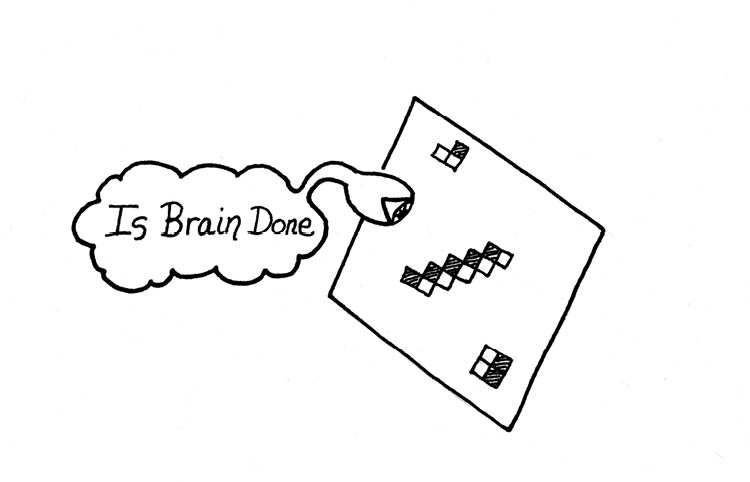

But let’s give universal automatism a chance. Might one’s glow of consciousness have some specific brain-based cause that we might in turn view as a computation?

In the late 1990s, neurologist Antonio Damasio began making a case that core consciousness results from specific phenomena taking place in the neurons of the brain. For Damasio, consciousness emerges from specific localized brain activities and is indeed a kind of computation. As evidence, Damasio points out that if a person suffers damage to a certain region of the brain stem, their consciousness will in fact get turned off, leaving them as a functional zombie, capable of moving about and doing things, but lacking that glowing sense of self.

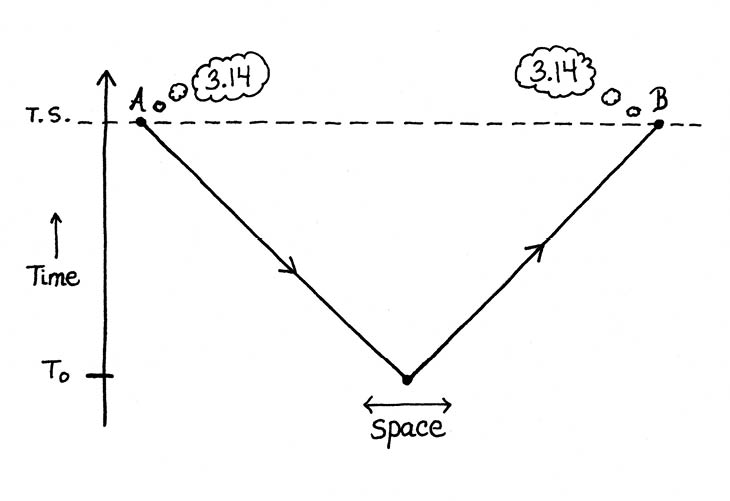

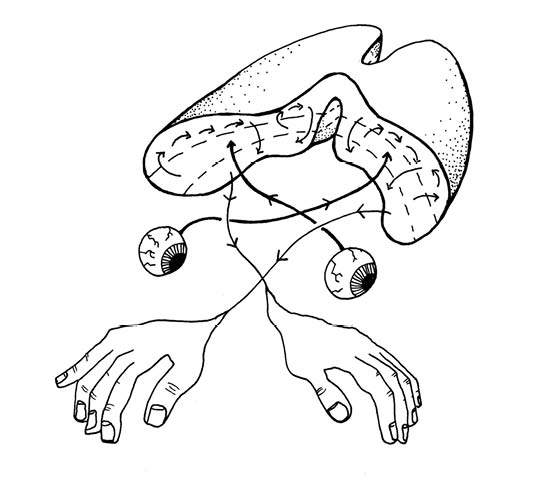

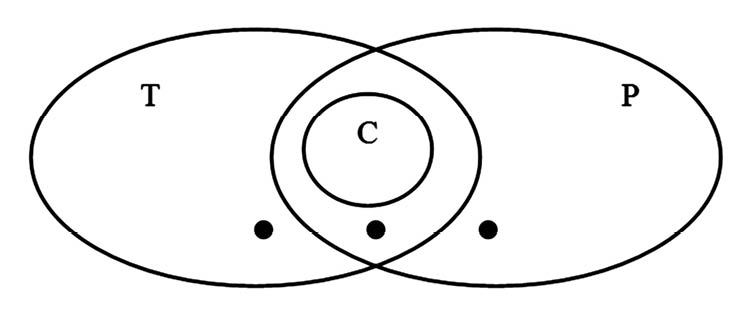

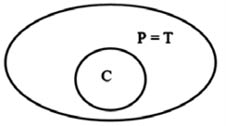

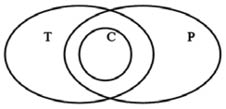

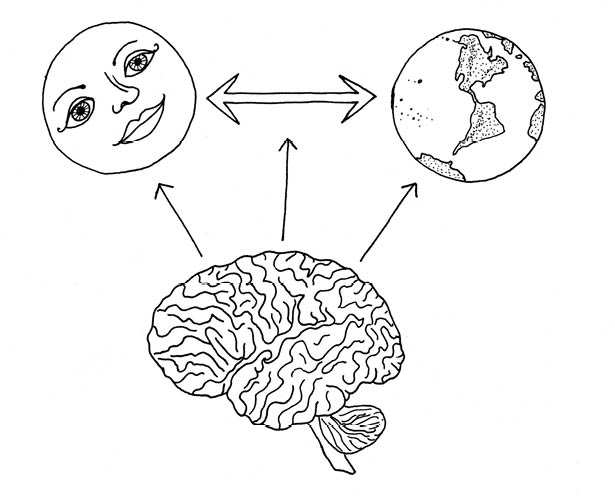

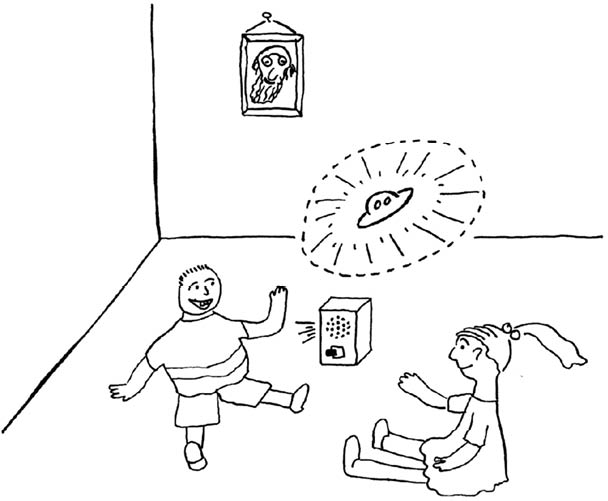

Figure 97: The Self, the World, and the Self Experiencing the World

Your brain produces a “body-self” image on the left, an image of the world on the right, and, in the center, an image of the self interacting with the world. Damasio feels that consciousness lies in contemplating the interaction between the self and the world.

Damasio uses a special nomenclature to present his ideas. He introduces the term movie-in-the-brain to describe a brain’s activity of creating images of the world and of the body. The image of the body and its sensations is something that he calls the proto-self. And he prefers the term core consciousness to what I’ve been calling the “I am” feeling.

Damasio believes that core consciousness consists of enhancing the movie-in-the-brain with a representation of how objects and sensations affect the proto-self. Putting it a little differently, he feels that, at any time, core consciousness amounts to having a mental image of yourself interacting with some particular image. It’s not enough to just have a first-order image of yourself in the world as an object among other objects—to get to core consciousness, you go a step beyond that and add on a second-order representation of your reactions and feelings about the objects you encounter. Figure 97 shows a shorthand image of what Damasio seems to have in mind.

In addition to giving you an enhanced sense of self, Damasio views core consciousness as determining which objects you’re currently paying attention to. In other words, the higher-order process involved in core consciousness has two effects: It gives you the feeling of being a knowing, conscious being, and it produces a particular mental focus on one image after another. The focusing aspect suggests that consciousness is being created over and over by a wordless narrative that you construct as you go along. Damasio describes his theory as follows:

As the brain forms images of an object—such as a face, a melody, a toothache, the memory of an event—and as the images of the object affect the state of the organism, yet another level of brain structure creates a swift nonverbal account of the events that are taking place in the varied brain regions activated as a consequence of the object-organism interaction. The mapping of the object-related consequences occurs in the first-order neural maps representing proto-self and object; the account of the causal relationship between object and organism can only be captured in second-order neural maps....One might say that the swift, second-order nonverbal account narrates a story: that of the organism caught in the act of representing its own changing state as it goes about representing something else. But the astonishing fact is that the knowable entity of the catcher has just been created in the narrative of the catching process....

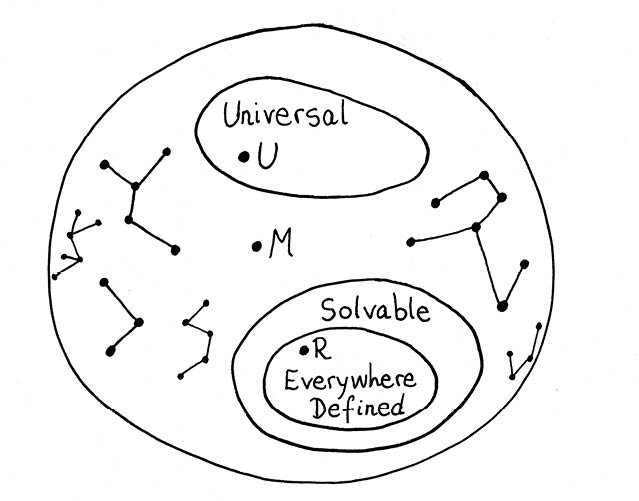

Most importantly, the images that constitute this narrative are incorporated in the stream of thoughts. The images in the consciousness narrative flow like shadows along with the images of the object for which they are providing an unwitting, unsolicited comment. To come back to the metaphor of the movie-in-the-brain, they are within the movie. There is no external spectator....