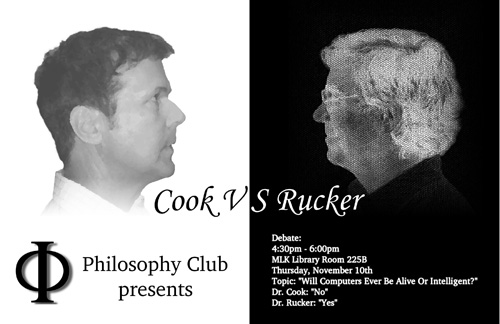

These are partial notes of my remarks during my debate with Noam Cook of the Philosophy Department, at San Jose State University, November 10, 2005. I forgot to bring my recorder, so I didn’t manage to tape it for podcast.

It was a nice event, with a big and enthusiastic audience, maybe 150 people, who asked a lot of good questions at the end. I was anxious about the event. They say that people have a phobia of public speaking. How about public speaking with a guy there to contradict everything you say! But Noam was a gentleman, and it went smoothly. I’ve incorporated some of my responses to his points in these notes.

Summary. I wish to argue that humans will eventually bring into existence computing machines that are as alive and intelligent as themselves.

After all, why shouldn’t there be alternate kinds of physical hardware which successfully emulate the behavior of humans? The only hard part is finding the right software for these systems. And even if the software is very hard to figure out, we have some hope of finding it by automated search methods.

Definition. A computation is a deterministic process that obeys a finitely describable rule. Saying that the process is deterministic means that identical inputs yield identical outputs. Saying that the rule is finitely describable means that the rule has a finite description, such as a program or a scientific theory.

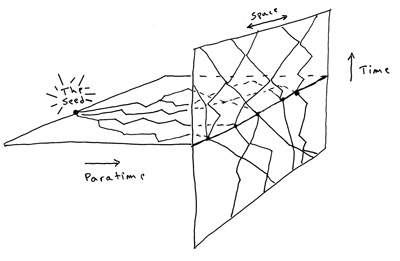

I believe in what I call universal automatism: It’s possible to view every naturally occurring process as a computation. For a universal automatist, all natural processes are deterministic and finitely describable — the weather, the stock market, the human mind, the course of the universe. The laws of nature are a kind of computer program.

In a broad sense, any object is a computer, but for this debate, let’s use “computer” in the narrow sense of being a manmade machine. Definition. A computer, or computing machine, is a device brought into being by humans using tools and used to carry out computations.

In arguing that we can eventually produce computers equivalent to humans, it’s useful to break my argument into three steps.

(1. Automatism) A human mind is a deterministic finitely complex process; that is, human consciousness is a computation carried out by the body and brain.

(2. Emulation) The human thought process can in principle be emulated on a man-made computer; that is, we can carry out equivalent computations on systems other than human bodies.

(3. Feasibility) We will in fact figure out the design for such a computing system; that is, humans and their tools will eventually bring into existence such human-equivalent systems.

I realize that many people don’t want to accept that (1. Automatism) they are deterministic computations. This is the point I’ll really have to argue for.

Looking ahead, if I accept that (1. Automatism) I’m a computation of some kind, then it’s relatively easy to believe that (2. Emulation) this same computation could be run on a man-made machine, for computers are so programmable and so flexible.

It’s also not so hard to believe the third step, which says (3. Feasibility) if it’s in principle possible to run a human-like computation on a machine, then eventually we fiddling monkeys will figure out a way to do it. It’s only a matter of time; my guess is a hundred years.

1. On Automatism. In arguing for the idea that our mind is a kind of computation, note that our psychology rests on our biology which rests upon physics. And physics itself is, I believe, a large, parallel, deterministic computation.

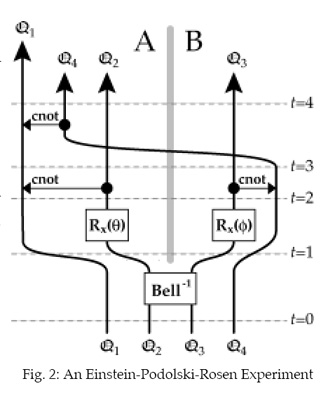

The uncertainties of quantum mechanics aren’t a lasting problem, by the way; the present-day interpretation of quantum mechanics is simply a scrim of confusion and misinterpretation overlaying a crystalline deterministic substrate which will eventually come clear.

So if we grant that human consciousness is a particular kind of physical process occurring in human bodies, and if we grant that physics is made up of deterministic computations, then we have to conclude that consciousness is a kind of computation.

Let me forestall three objections.

Free Will Objection: If I’m a deterministic computation, why can’t anyone predict what I’m going to do?

Answer to Free Will Objection: When I say the human mind can be regarded as a deterministic computation, I am not denying the experiential fact that our minds are unpredictable. The fact that you can’t predict what you’ll be doing tomorrow or next year is fully consistent with the fact that you are deterministic.

The impossibility of predicting your future results from two factors. Most obviously, my future is hard to predict because I can’t know what kind of inputs I’m going to receive. But, and this is a key point, I’d be unable to predict the workings of my mind even if all my upcoming inputs were known to me. I’d be unpredictable even if, for instance, I were to be placed in a sensory deprivation tank for a few hours.

A gnarly computation such as is carried out by a human mind is irreducibly complex; it doesn’t allow for any rapid shortcuts, not even in principle. This is a fact that computer scientists have only recently begun talking about; see Stephen Wolfram’s book A New Kind of Science, and my own book, The Lifebox, the Seashell, and the Soul.

The mind is a deterministic computation, but there are no simple formulas to predict the mind.
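To make the "no shortcuts" point concrete, here is a minimal Python sketch. It assumes Wolfram's elementary cellular automaton Rule 30 as a small stand-in for a gnarly computation; the choice of Rule 30, the wraparound edges, and the function names `rule30_step` and `run` are illustrative assumptions, not something from the debate itself.

```python
def rule30_step(cells):
    """Apply one step of Rule 30 to a tuple of 0/1 cells (edges wrap around)."""
    n = len(cells)
    # Rule 30 in Boolean form: new cell = left XOR (center OR right).
    return tuple(
        cells[(i - 1) % n] ^ (cells[i] | cells[(i + 1) % n])
        for i in range(n)
    )


def run(cells, steps):
    """Return the list of rows produced by applying the rule `steps` times."""
    history = [cells]
    for _ in range(steps):
        cells = rule30_step(cells)
        history.append(cells)
    return history


if __name__ == "__main__":
    width = 31
    # Start from a single live cell in the middle of the row.
    start = tuple(1 if i == width // 2 else 0 for i in range(width))
    for row in run(start, 15):
        print("".join("#" if c else "." for c in row))
```

The rule is fully deterministic, so the same starting row always gives the same picture. Yet, as far as anyone knows, the practical way to learn what a distant row looks like is to compute all the rows before it, which is the sense of "unpredictable" meant here.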

“Chinese Room” Objection: Even if a computer can be programmed to emulate all of human behavior, it is still only putting on an act, and it has no internal understanding, knowledge, or intentionality. Consider, for instance, the IBM chess-playing program Deep Blue. It excels at playing chess, but it doesn’t “know” anything about chess.

Answer to the “Chinese Room” Objection. To the extent that we can give precise descriptions of our psychological states, we can create AI programs to emulate them. A goal becomes a target state the program wants to reach. A focus of attention is a particular pointer the program can aim at a simulation object. An emotional makeup becomes a system of weights attached to various internal states. Conscious knowledge of something may involve a kind of self-reflexive behavior, in which the system models the world, a self-symbol, the relationship between the world and the self-symbol, and the self-symbol considering the relationship between the world and the self-symbol. To the extent that this can be made precise, it can be modeled. Present-day AI programs lack many of the internal aspects of human psychology simply because these aspects have not yet been well enough described. But in principle, it can all be modeled.

Supernaturalism Objection: Given that humans are the Crown of Creation, God surely loves us so much that we’ve been equipped with some vital essence that wholly transcends the petty, deterministic bookkeeping of computational physics.

Putting much the same notion more secularly, we might say that there are oddball as-yet-unknown physical forces involved in life and in consciousness; perhaps quantum computation has something to do with this, or dark energy, or instantons on D-branes in Calabi-Yau spaces.

Answer to the Supernaturalism Objection: We are already on the point of building physical quantum computers. In the long run, any possible kind of physics should be something that we can put into the devices we make. And who’s to say that God’s special vital essence doesn’t dribble onto our devices as well? Zen Buddhists tell the story of a monk who asks the sage, “Does a stone have Buddha-nature?” The sage answers, “The universal rain moistens all creatures.”

2. On Emulation. The second step of my argument is very easy to defend; we’ve known since the 1940s that there is not an endless staircase of more and more sophisticated computation. Relatively simple devices such as a desktop computer are already “universal computers,” meaning that, in principle, your desktop machine can emulate the behavior of any other system. It’s just a matter of equipping your computer with a lot of extra memory, getting it to run fast, and giving it the right software.

I estimate the actual computational power of the human brain as being on the order of a quintillion primitive operations per second using a quintillion bytes of memory. In scientific nomenclature, this would be an exaflop exabyte machine.

Extrapolating from present trends, we may well have desktop computers of this power by the year 2060. Of course the hard part is figuring out how to write the human-emulation software for the exaflop exabyte machine.
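The arithmetic behind an extrapolation like this can be sketched in a few lines of Python. The target of a quintillion operations per second comes from the estimate above; the 2005 baseline of ten billion operations per second for a desktop machine is only a rough assumption made for illustration, as are the function names.

```python
import math

TARGET_OPS_PER_SEC = 1e18    # "exaflop": a quintillion operations per second
BASELINE_YEAR = 2005         # year of the debate
BASELINE_OPS_PER_SEC = 1e10  # ASSUMPTION: rough 2005 desktop speed, not from the notes


def doublings_needed(baseline=BASELINE_OPS_PER_SEC, target=TARGET_OPS_PER_SEC):
    """Number of times the speed must double to go from baseline to target."""
    return math.log2(target / baseline)


def implied_doubling_period(target_year):
    """Years per doubling required to hit the target speed by target_year."""
    return (target_year - BASELINE_YEAR) / doublings_needed()


def year_reached(doubling_period_years):
    """Year the target speed is reached if speed doubles at the given pace."""
    return BASELINE_YEAR + doublings_needed() * doubling_period_years


if __name__ == "__main__":
    print(f"doublings needed: {doublings_needed():.1f}")
    print(f"doubling period for 2060: {implied_doubling_period(2060):.2f} years")
    print(f"year reached at 2-year doublings: {year_reached(2.0):.0f}")
```

Under that assumed baseline, reaching an exaflop desktop by 2060 needs about 27 doublings, or one doubling roughly every two years. A different baseline shifts the date, so the result is only as good as the starting guess.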

3. On Feasibility. There are all sorts of ways of making computers, and some hardware designs are better for certain kinds of problems than others. In making a computer that emulates humans, we face two interlocking problems: finding the best kind of hardware to use, and finding appropriate software to run on that hardware.

These are exceedingly hard problems. My guess is that it will take at least another hundred years for full parity between humans and certain machines. Possibly I’m too pessimistic.

There are certain limitative logic theorems, such as Gödel’s incompleteness theorem, suggesting that it’s in principle impossible to write software equivalent to a human mind. But these theorems do not rule out the possibility of managing to evolve or to stumble upon human-equivalent software. All that is ruled out is the ability to truly understand how the software works.

Evolution, also known as genetic programming, is a widely used technique in computer science. Although artificial evolution doesn’t find the very best algorithms, it is able to find acceptably good algorithms, often in a reasonable amount of time.
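As a rough illustration of artificial evolution, here is a minimal Python sketch. It is a plain genetic algorithm over bit strings rather than genetic programming over program trees, and the fitness function (count the 1 bits), the population settings, and all function names are assumptions chosen to keep the example small.

```python
import random


def fitness(genome):
    # Toy objective (an assumption for illustration): the number of 1 bits.
    return sum(genome)


def mutate(genome, rate, rng):
    # Flip each bit independently with probability `rate`.
    return [bit ^ 1 if rng.random() < rate else bit for bit in genome]


def crossover(a, b, rng):
    # Single-point crossover: head of one parent, tail of the other.
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]


def evolve(length=32, pop_size=40, generations=60, rate=0.02, seed=0):
    """Return the best genome found and the best fitness seen per generation."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(length)]
                  for _ in range(pop_size)]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        # Keep the fitter half unchanged, then refill with mutated offspring.
        parents = population[: pop_size // 2]
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng),
                   rate, rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    population.sort(key=fitness, reverse=True)
    best_per_generation.append(fitness(population[0]))
    return population[0], best_per_generation


if __name__ == "__main__":
    best, history = evolve()
    print("best fitness by generation:", history)
```

Because the fitter half of each generation survives unchanged, the best score never gets worse from one generation to the next. Nothing guarantees that the search reaches the perfect string, which matches the point that evolution tends to find acceptably good solutions rather than the very best ones.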

It may be that we don’t need to use an evolutionary process. Wolfram argues that whenever you can find a complicated program to do something, you can also find a concise and simple program to do much the same thing. If we had a better idea about the kinds of programs that might generate human-level AI, we might achieve a rapid success simply by doing an exhaustive search through the first, say, trillion possible such programs.
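Exhaustive search over a space of simple programs can be sketched at toy scale in Python. The example below assumes the "programs" are the 256 elementary cellular automaton rules, far fewer than a trillion, and the target behavior is simply a row that one of those rules produces. The function names and the matching criterion are illustrative assumptions.

```python
def step(rule, cells):
    """Apply one step of elementary cellular automaton `rule` (0..255)."""
    n = len(cells)
    # The three-cell neighborhood, read as a binary number 0..7,
    # selects which bit of the rule number becomes the new cell.
    return tuple(
        (rule >> (cells[(i - 1) % n] * 4 + cells[i] * 2 + cells[(i + 1) % n])) & 1
        for i in range(n)
    )


def run(rule, cells, steps):
    """Return the row reached after applying `rule` for `steps` steps."""
    for _ in range(steps):
        cells = step(rule, cells)
    return cells


def search(start, target, steps):
    """Try every rule in order; return those that turn `start` into `target`."""
    return [rule for rule in range(256) if run(rule, start, steps) == target]


if __name__ == "__main__":
    width = 11
    start = tuple(1 if i == width // 2 else 0 for i in range(width))
    target = run(30, start, 4)  # behavior we pretend not to know the rule for
    print("rules matching the target:", search(start, target, 4))
```

The search knows nothing about how the rules work; it just tries each one and checks the result. Scaling the same idea to human-level AI would need a far richer program space and a far better test of success, neither of which this sketch attempts.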

It may in fact be that human-style mentation is something that nature “likes” to produce; it could be a ubiquitous pattern like cycles or vortices or pairs of scrolls. In this case the search might not take so long after all.