Lifebox for Telepathy and Immortality

I’m posting drafts of two presentations to be delivered at the IOHK Summit in Miami Beach, Florida, April 18, 2019.

1. Talk: “Cyberpunk Use Cases”

2. Workshop: “Lifebox for Telepathy and Immortality”

This is a draft of the second, “workshop” presentation by Rudy Rucker.

Lifebox for Telepathy and Immortality

Lifebox

What is a Lifebox?

In the next few years we’ll see consumer products that allow people to make convincing emulations of themselves. I call a system like this a lifebox. A lifebox has three layers.

- Data. A large and rich database with a person’s writings, plus videos of them, and recorded interviews.

- Search. An interactive search engine. You ask the lifebox a question, it does a search on the data, and it comes up with a relevant answer.

- AI. A veneer of AI. The lifebox remembers a given user’s search history and inputs, so as to piece together a semblance of a continuing conversation, or even a friendship. And, of course, an animated head and body of the lifebox creator.

Producing basic lifeboxes is well within our current abilities. And over time the AI layers may evolve to pass the Turing test. A lifebox is somewhat like a personal website—but larger, more densely hyperlinked, and with a sophisticated interface.

The links among the lifebox items are important—because the links express the author’s sensibility, that is, the person’s characteristic way of jumping from one thought to the next.

The lifebox gives users the impression of having a conversation with the author. The user inputs serve as search terms to locate hits of lifebox info. And the AI interface confabulates the hits into sentences and anecdotes and repartee.

More than that, over time, a lifebox will track the ongoing conversations with each particular user, creating a sense of friendships.
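Here is a minimal Python sketch of the three layers, just to make the idea concrete. Everything in it is an assumption made for illustration: the `Lifebox` class, the `tokenize` helper, the stopword list, and the canned openers are hypothetical names, and plain word overlap stands in for a real search engine and a real AI layer.

```python
import re
from collections import defaultdict

# Hypothetical stopword list; a real system would use a much fuller one.
STOPWORDS = {"a", "an", "the", "and", "or", "of", "to", "in", "on", "i", "my",
             "me", "was", "it", "for", "about", "your", "you", "what", "tell"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword words."""
    return {w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS}


class Lifebox:
    def __init__(self, author, entries):
        self.author = author
        self.entries = list(entries)      # Data layer: the author's stories
        self.history = defaultdict(list)  # AI layer: per-user conversation memory

    def search(self, query):
        """Search layer: return the entry sharing the most words with the query."""
        terms = tokenize(query)
        best, best_score = None, 0
        for entry in self.entries:
            score = len(terms & tokenize(entry))
            if score > best_score:
                best, best_score = entry, score
        return best

    def ask(self, user, query):
        """Answer a query and remember that this user asked it."""
        hit = self.search(query)
        seen_before = bool(self.history[user])
        self.history[user].append(query)
        if hit is None:
            return "I don't have a story about that yet."
        opener = "Good to hear from you again. " if seen_before else "Well now. "
        return opener + hit
```

A call like `Lifebox("Ned", stories).ask("Billy", "Tell me about your first car")` returns the best-matching story, and the opener changes once the lifebox has talked with Billy before. That per-user memory is the seed of the "sense of friendship."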

Training a Lifebox

How do you train your lifebox? Certainly you can input your writings, your emails, your social media posts, your photos, and the like.

Beyond this the lifebox can interview you, prompting you to tell it stories. Using voice-recognition, the lifebox links your anecdotes via the words and phrases you use. And the lifebox asks simple follow-up questions about the things you say.
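The linking step might be sketched like this in Python, assuming the anecdotes have already been transcribed to text. The `keywords`, `link_anecdotes`, and `follow_up` functions and the `COMMON` word list are hypothetical, and shared keywords are only a crude stand-in for whatever phrase matching a real lifebox would use.

```python
import re
from itertools import combinations

# Hypothetical list of filler words that should not create links.
COMMON = {"where", "every", "behind", "with", "that", "this",
          "were", "when", "then", "about", "from", "have"}


def keywords(text):
    """Return the longer, non-filler words of a transcribed anecdote."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if len(w) > 3 and w not in COMMON}


def link_anecdotes(anecdotes):
    """Map each anecdote index to the indices it links to, with the shared words."""
    links = {i: {} for i in range(len(anecdotes))}
    for i, j in combinations(range(len(anecdotes)), 2):
        shared = keywords(anecdotes[i]) & keywords(anecdotes[j])
        if shared:
            links[i][j] = shared
            links[j][i] = shared
    return links


def follow_up(index, anecdotes):
    """Ask about the longest keyword that no other anecdote mentions yet."""
    others = set()
    for i, text in enumerate(anecdotes):
        if i != index:
            others |= keywords(text)
    fresh = sorted(keywords(anecdotes[index]) - others, key=lambda w: (-len(w), w))
    return f"Tell me more about {fresh[0]}." if fresh else None
```

Given three stories about a car, a beach, and a girlfriend, the beach links the first two and the girlfriend links the last two, so a listener can hop from story to story along the speaker's own associations.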

For ongoing neural-net-style training, the lifebox AI can listen in on your conversations, and tweak its weights to better match the things that you say.

Lifebox Use Case: Interactive Memoir

The initial market for the lifebox is simple. Old people want to write down their life stories, and with a lifebox they don’t have to write, they can get by with just talking. The lifebox software is smart enough to organize the material into a shapely whole. Like a ghostwriter.

The hard thing about creating your life story is that your recollections aren’t linear; they’re a tangled banyan tree of branches that split and merge.

The lifebox uses hypertext links to hook together everything you say. Your eventual users will carve their own paths through your stories—interrupting and asking questions.

I imagine a white-haired old duffer named Ned. Ned is pacing in his small backyard, a concrete slab with some beds of roses. He’s talking and gesturing, wearing a headset, with the lifebox in his shirt pocket. The lifebox speaks to him in a woman’s pleasant voice.

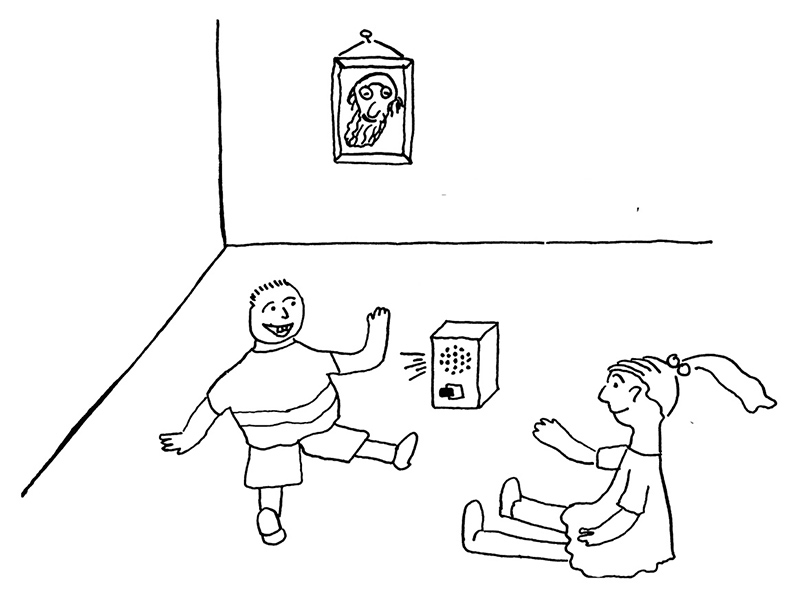

At some point Ned dies. But he’s trained his lifebox. His grandchildren, little Billy and big Sis, play with Ned’s lifebox. Being kids, they mock it, not putting on the polite faces that children are expected to show.

Little Billy asks the Grandpa-lifebox about his first car, and the lifebox starts talking about Grandpa’s electric-powered Flurble and about how he used the car for dates. Big Sis asks the lifebox about the first girl Grandpa dated, and the lifebox goes off on that for a while, and then Sis looks around to make sure Mom’s not in earshot.

The coast is clear, so Sis asks naughty questions. “Did you and your dates do it? In the car? Did you use a rubber?” Shrieks of laughter.

“You’re a little too young to hear about that,” Grandpa-lifebox calmly says. “Let me tell you more about the car.”

Lifebox Use Case: Natural Language Recognition

In the intimate verbal conversations that you have with a lover, spouse, or close friend, spoken language feels as effortless as singing or dancing. The ideas flow and the minds merge. In these empathetic exchanges, each of you draws on a clear sense of your partner’s history and core consciousness.

By way of enhancing traditional text and image communications, people might use lifeboxes to introduce themselves to each other. Like studying someone’s home page before meeting them.

A lifebox would serve as a conversational context. Sharing lifebox contexts replaces the mass of common memories and cultural referents that you depend on with friends.

If an AI agent has access to your lifebox, it will do much better at understanding the content of your speech. We could finally gain traction on the intractable AI problem of getting a deep understanding of natural language.

Telepathy

When we use language our words act as instructions for assembling thoughts. But telepathy could work differently. By way of analogy, think about three different ways you might tell a person about something you saw.

- Text. Give a verbal description of the image.

- Image. Show them a photo.

- Link. Give them a link to the photo on your webpage.

Let’s suppose now that we come up with something like a brain-wave-based cell phone. I call such a device an uvvy. Instead of a handset, an uvvy might be like a removable plastic leech that perches on the back of a user’s neck.

(Note in passing that one would never want to have anything to do with an implanted device of this nature. Malware, pwning, crashes, limpware upgrades? No thanks!)

The most obvious use of an uvvy would be to use it like a videophone, sending words and images. But I want you to imagine people sharing direct links into each other’s minds!

I refer to this type of advanced telepathy by the word teep.

Lifebox Use Case: Understanding Teep

A possible problem with brain-link teep is that you might have trouble deciphering the intricate structures of someone else’s thoughts—seen from the inside.

Sharing lifebox contexts could help make sense of another person’s internal brain links.

This is a variant of the problem of understanding natural language.

I’m saying that, as well as using the ethereal brain-wave-type signals, you’ll want to use hyperlinks into the other user’s lifebox context. The combination of the two channels can make the teep comprehensible.

Lifebox Use Case: Blocking Ads and Impersonation

It would be very bad to be getting ads and spam via teep.

And it would be bad to have someone impersonating me and teeping things to other people.

I’m groping for some kind of safety filter. A person might use their lifebox as a transducer during brain-to-brain teep contact. Rather than you reaching directly into my brain, you might channel the requests through my lifebox.

How to track the legit lifeboxes? Track them with a blockchain?
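As a rough sketch of the lifebox-as-filter idea, here is a Python gatekeeper that drops messages from unknown senders, from impersonators, and from advertisers. All the names are hypothetical (`LifeboxFilter`, `sign`, `AD_MARKERS`), and a shared-secret HMAC is used only as a stand-in: a real system would more likely use public-key signatures, perhaps with keys published on the kind of registry asked about above.

```python
import hashlib
import hmac

# Hypothetical phrases that mark a message as an ad.
AD_MARKERS = ("buy now", "limited offer", "click here")


def sign(key, message):
    """Return a hex tag proving the sender holds the shared key."""
    return hmac.new(key, message.encode("utf-8"), hashlib.sha256).hexdigest()


class LifeboxFilter:
    def __init__(self):
        self.contacts = {}  # sender name -> shared secret key (bytes)

    def add_contact(self, name, key):
        self.contacts[name] = key

    def accept(self, sender, message, tag):
        """Pass a message through only if it is authentic and not an ad."""
        key = self.contacts.get(sender)
        if key is None:
            return False  # unknown sender
        if not hmac.compare_digest(sign(key, message), tag):
            return False  # tag does not match: possible impersonation
        lowered = message.lower()
        return not any(marker in lowered for marker in AD_MARKERS)
```

The point of the design is that nothing reaches the brain unless the lifebox has vouched for both the sender and the content.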

Immortality

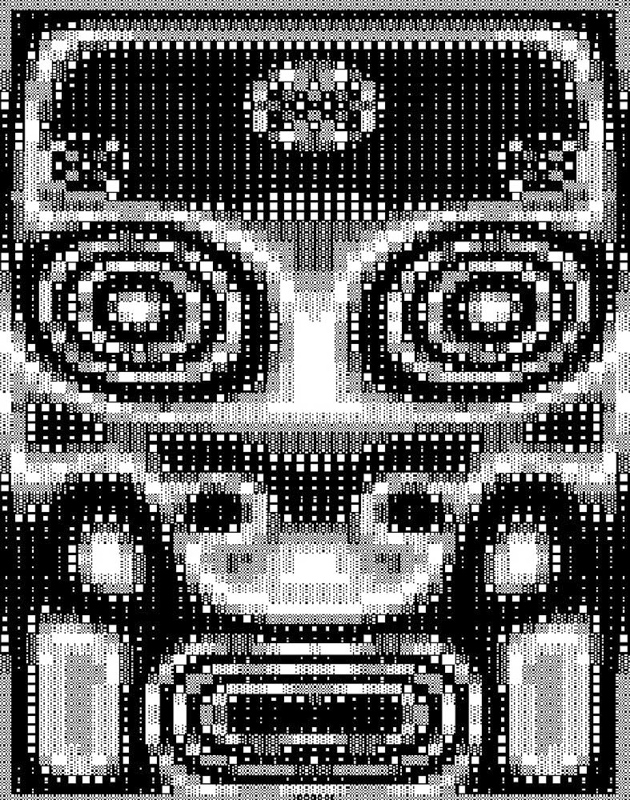

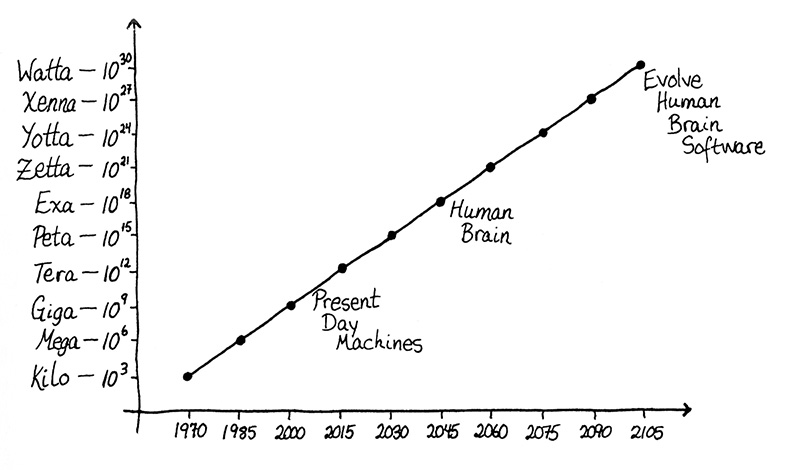

If what my brain does is to carry out computation-like deterministic processes, then in principle there ought to be a computer of some kind that can emulate it.

Yes, the brain is analog rather than digital, but perhaps a highly fine-grained digital computer would suffice. Like a pixelized photo.

Alternately, the computers of the future may be analog devices as well—one thinks of biocomputation and quantum computation.

In trying to produce humanlike constructs, we have four requirements.

- Hardware. Device with a computation rate and memory space that’s comparable to a human brain. Not terminally out of reach.

- Software. An operating system that allows the device to behave like a human mind. The most likely option is to beat the problem to death by training multi-layer neural nets.

- Data. A lifetime’s worth of memories. Lifeboxes!

- Consciousness. How?

Recipe for Consciousness

Short answer:

Consciousness = “I am.”

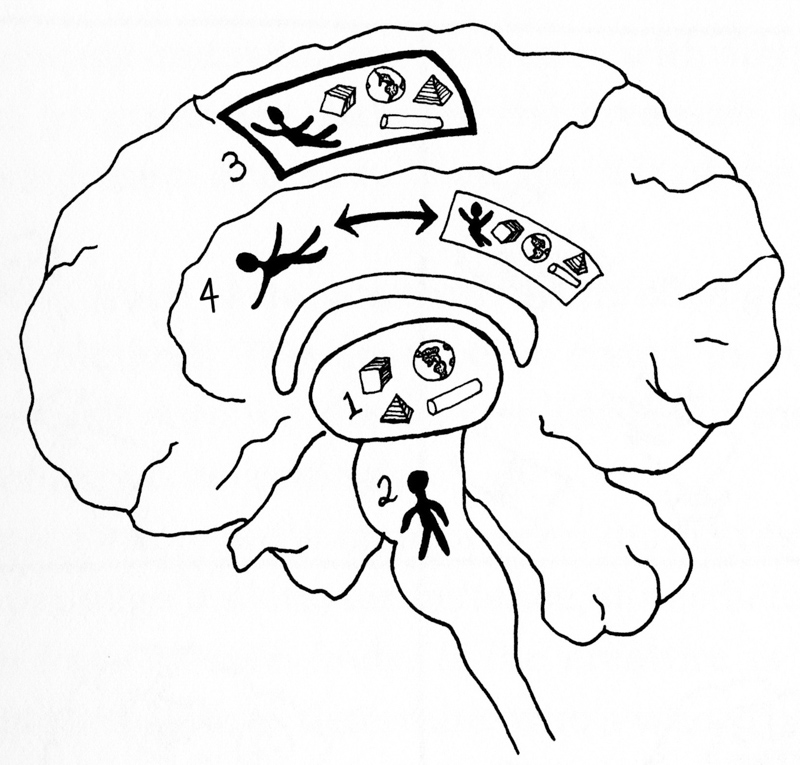

Long answer, from Antonio Damasio, as explained in my Lifebox tome:

- Images of objects.

- Image of self.

- Movie-in-the-brain.

- Consciousness = Watch your self watching your movie-in-the-brain.

Lifebox Use Case: Juicy Ghosts

Suppose we can copy a personality to a lifebox, and that the lifebox has such a strong AI that it enjoys self-awareness, and it feels it is a copy of the original person. Call such a construct a ghost.

You don’t want Apple, Microsoft, Google, Facebook or their like to own your ghost. You want your ghost to be a free agent. How can you afford a sufficiently powerful device to run your personality? Let’s suppose we’ve got biocomputing working. Port yourself onto a dog.

So you might be a juicy ghost—living in the brain of a dog, a bird, or a rat. A big win in having a living body is that you then have sense organs, mobility, and an ability to act in the world.

If people can spawn off juicy ghosts, we have problems with ownership, inheritance, culpability, and liability. It would be best to only allow one ghost version of a person at a time. How to register which device or organism is your ghost? Again it seems like blockchain could play a role…
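To give the registry idea some shape, here is a toy Python hash chain in which each registration names a person and the host that currently carries their ghost. It is a single-process sketch under heavy assumptions: the `GhostRegistry` class and the host labels are hypothetical, there is no network or consensus, and "one ghost at a time" is modeled simply by letting the latest registration supersede earlier ones.

```python
import hashlib
import json


class GhostRegistry:
    def __init__(self):
        self.blocks = []  # append-only list of hash-linked registrations

    @staticmethod
    def _hash(record, prev_hash):
        payload = json.dumps(record, sort_keys=True) + prev_hash
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def register(self, person, host):
        """Record that this person's one ghost now lives on the given host."""
        prev_hash = self.blocks[-1]["hash"] if self.blocks else "0" * 64
        record = {"person": person, "host": host}
        self.blocks.append(
            {"record": record, "prev": prev_hash, "hash": self._hash(record, prev_hash)}
        )

    def current_host(self, person):
        """Return the most recently registered host for a person, or None."""
        for block in reversed(self.blocks):
            if block["record"]["person"] == person:
                return block["record"]["host"]
        return None

    def is_valid(self):
        """Check that no earlier registration has been altered."""
        prev_hash = "0" * 64
        for block in self.blocks:
            expected = self._hash(block["record"], prev_hash)
            if block["prev"] != prev_hash or block["hash"] != expected:
                return False
            prev_hash = block["hash"]
        return True
```

If Ned's ghost moves from a dog to a crow, the second registration supersedes the first, and any attempt to quietly rewrite the older entry makes `is_valid()` fail.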

References

I discussed the lifebox at some length in my futurological novel, Saucer Wisdom, and in my nonfiction tome, The Lifebox, the Seashell, and the Soul.

See also my online lifebox prototype, “Search Rudy’s Lifebox,” at www.rudyrucker.com/blog/rudys-lifebox