MIND TOOLS

THE FIVE LEVELS OF

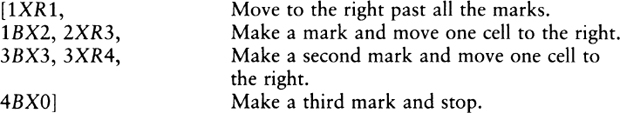

MATHEMATICAL REALITY

RUDY RUCKER

This is a free online webpage browsing edition.

Purchase paperback and ebook editions from

Dover Books or Amazon or B&N

Copyright © 1987 by Rudy Rucker

All rights reserved.

1st Edition, Houghton Mifflin Company, Boston, 1987.

Unabridged republication, Dover Books, 2018

For Sylvia, with love

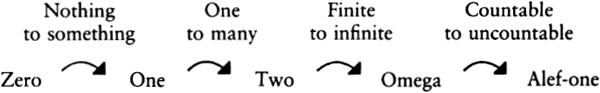

INTRODUCTION THE FIVE MODES OF THOUGHT

Psychological Roots of Mathematical Concepts

Numerology, Numberskulls, and Crowds

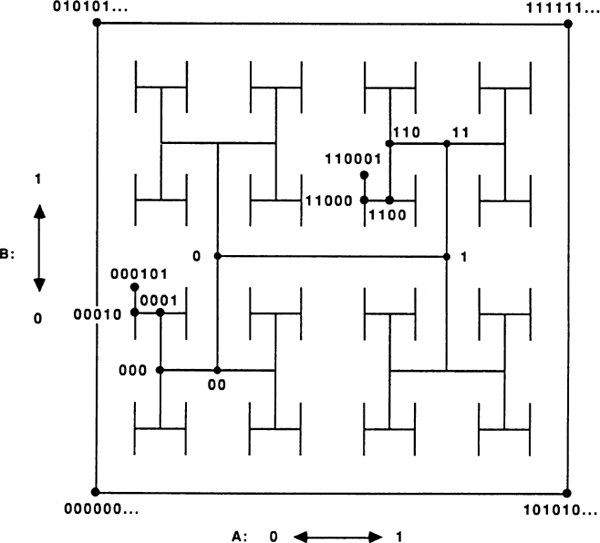

Tiles, Cells, Pixels, and Grids

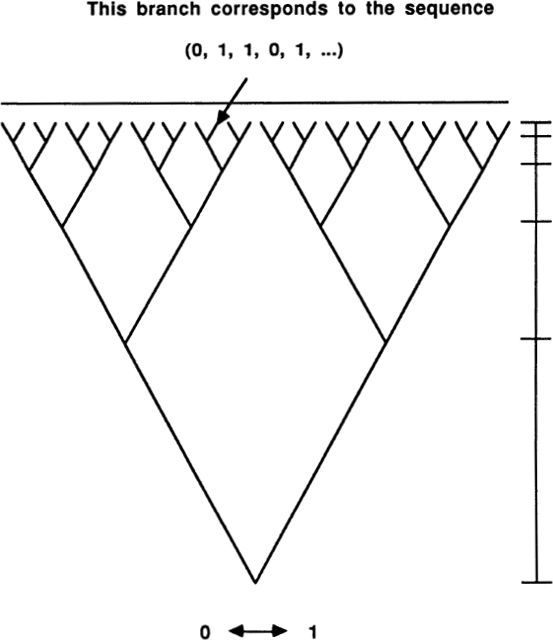

Life Is a Fractal in Hilbert Space

INTRODUCTION

THE FIVE MODES

OF THOUGHT

The world is colors and motion, feelings and thought… and what does math have to do with it? Not much, if “math” means being bored in high school, but in truth mathematics is the one universal science. Mathematics is the study of pure pattern, and everything in the cosmos is a kind of pattern.

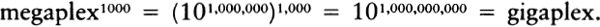

The patterns of mathematics can be roughly grouped into five archetypes: Number, Space, Logic, Infinity, and Information. Mind Tools is primarily about information, the newest of these archetypes. The book consists of this Introduction and a chapter each about information in terms of number, space, logic, and infinity.

Just to give an idea of what we’ll be talking about, let me pick a specific object and show how it can be thought of, as a mathematical pattern, in five different ways. Let’s use your right hand.

1. Hand as Number. At the most superficial level, a hand is an example of the number 5. Looking at details, you notice that your hand has a certain number of hairs and a certain number of wrinkles. The fingers have specific numerical lengths in millimeters. The area of each of your fingernails can be calculated, as can its mass. Internal measurements on your hand could produce a lot more numbers: temperatures, blood flow rates, electrical conductivity, salinity, etc. Your hand codes up a whole lot of numbers.

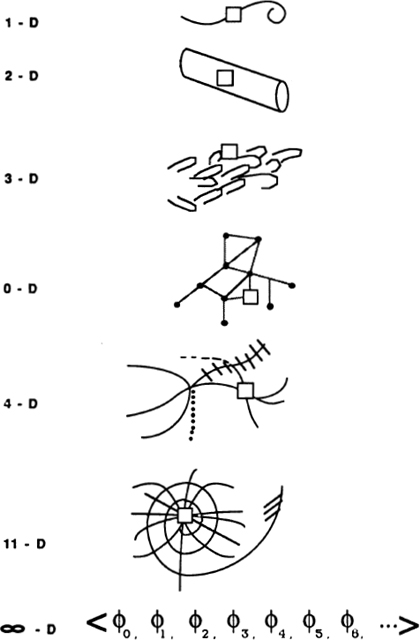

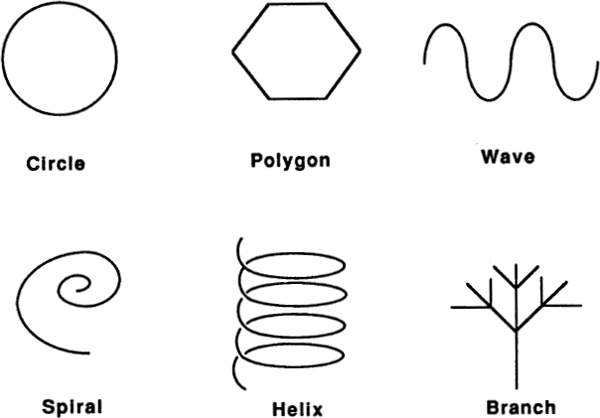

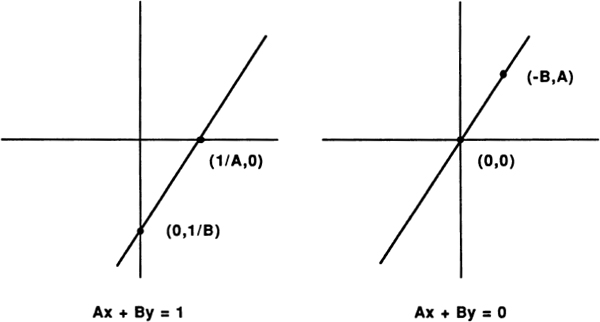

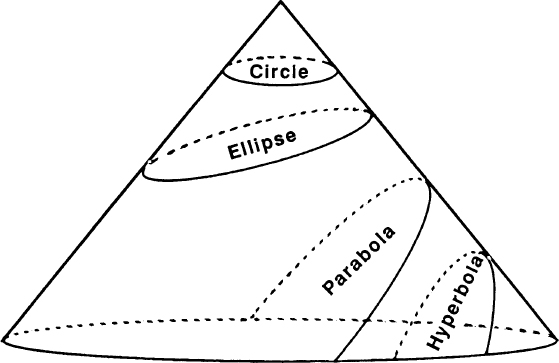

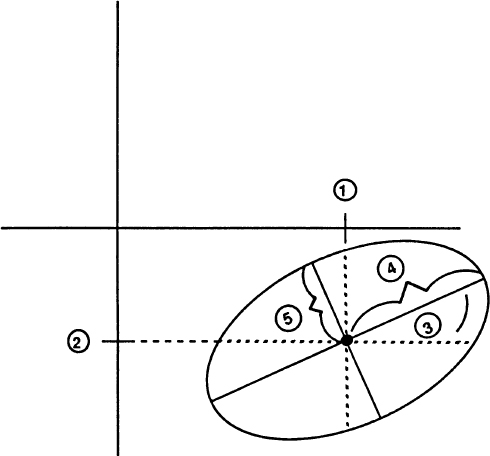

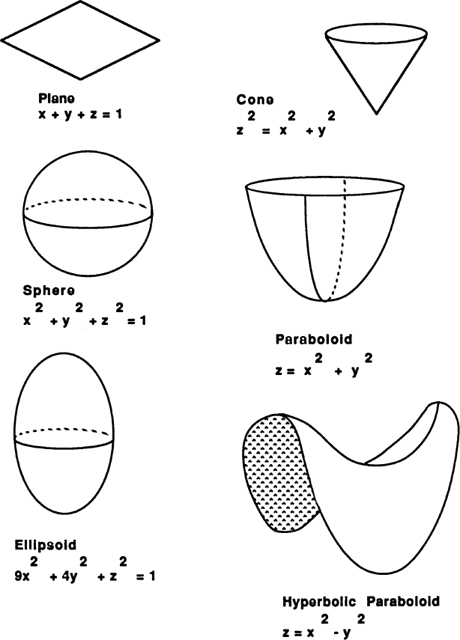

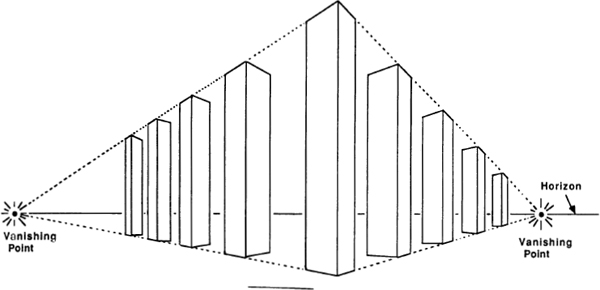

2. Hand as Space. Your hand is an object in three-dimensional space. It has no holes in it, and it is connected to your body. The skin’s curved, two-dimensional surface is convex in some regions and concave in others. The hand’s blood vessels form a branching one-dimensional pattern. The bulge of your thumb muscle is approximately ellipsoidal, and your fingers resemble the frustums of cones. Your fingernails are flattened paraboloids, and your epithelial cells are cylindrical. Your hand is a sample case of space patterns.

3. Hand as Logic. Your hand’s muscles, bones, and tendons make up a kind of machine, and machines are special sorts of logical patterns. If you pull this tendon here, then that bone over there moves. Aside from mechanics, the hand has various behavior patterns that fit together in a logical way. If your hand touches fire, it jerks back. If it touches a bunny, it pets. If it clenches, its knuckles get white. If it digs in dirt, its nails get black. Your logical knowledge about your hand could fill a hefty Owner’s Manual.

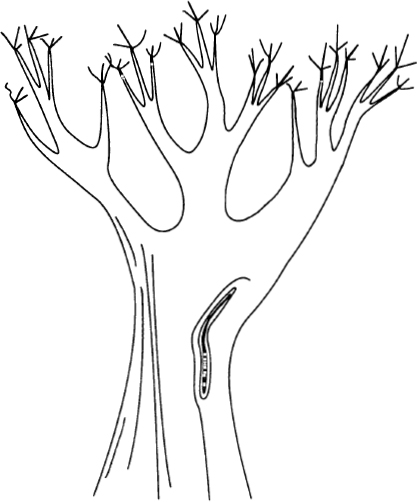

4. Hand as Infinity. Abstractly speaking, your hand takes up infinitely many mathematical space points. As a practical matter, smaller and smaller size scales reveal more and more structure. Close up, your skin’s surface is an endlessly complex pattern of the type known as “fractal.” What you know about your hand relates to what you know about a ramifying net of other concepts — it is hard to disentangle your hand from the infinite sea of all knowledge. Another kind of infinitude arises from the fact that your hand is part of you, and a person’s living essence is closely related to the paradoxical infinities of set theory (the mathematician’s version of theology).

5. Hand as Information. Your hand is designed according to certain instructions coded up in your DNA. The length of these instructions gives a measure of the amount of information in your hand. During the course of its life, your hand has been subject to various random influences that have left scars, freckles, and so on; we might want to include these influences in our measure of your hand’s information. One way to do this would be to tie your hand’s information content to the number of questions I have to ask in order to build a replica of it. Still another way of measuring your hand’s information is to estimate the length of the shortest computer program that would answer any possible question about your hand.

You can think of your hand as made of numbers, of space patterns, of logical connections, of infinite complexities, or of information bits. Each of these complementary thought modes has its use. In the rest of this Introduction I will explain how and why mathematics has evolved the five modes — number, space, logic, infinity, and information — and I will be talking about how these five modes relate to the five basic psychological activities: perception, emotion, thought, intuition, and communication.

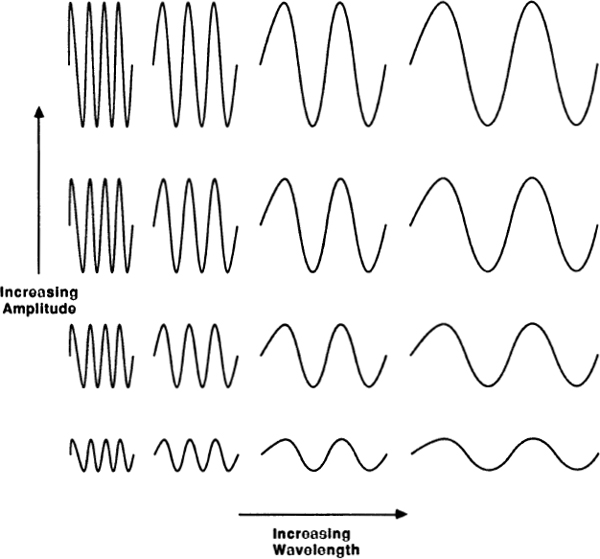

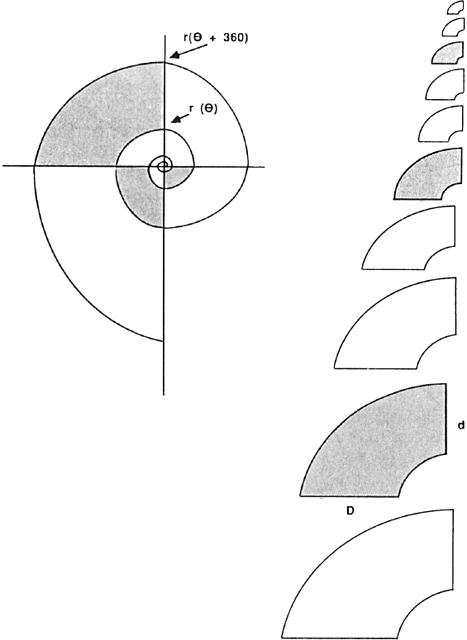

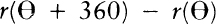

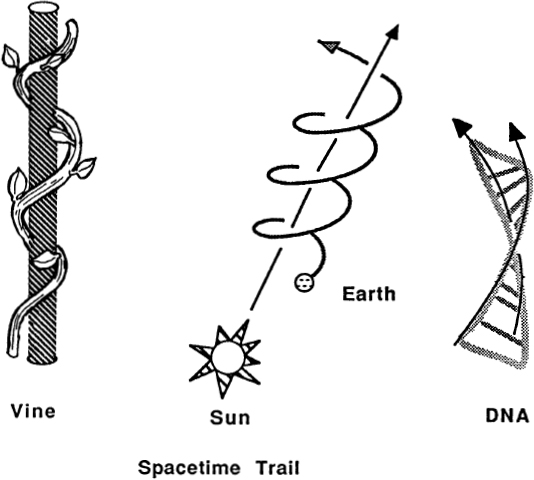

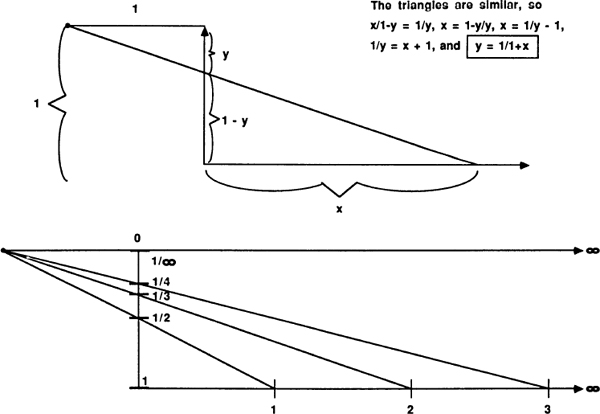

Some things vary in a stepwise fashion — the number of people in a family, the number of sheep in a flock, the number of pebbles in a pouch. These are groups of discrete things about which we can ask, “How many?” Other things vary smoothly — distance, age, weight. Here the basic question is, “How much?”

The first kind of magnitude might be called spotty and the second kind called smooth. The study of spotty magnitudes leads to numbers and arithmetic, while the study of smooth magnitudes leads to notions of length and geometry. Counting up spots leads to the mathematical realm known as “number”; working with smooth quantities leads to the kingdom called “space.” The classic example of these two kinds of patterns is the night sky: Staring upward, we see the stars as spots against the smooth black background. If we focus on the individual stars we are thinking in terms of number, but if we start “connecting the dots” and seeing constellations, then we are thinking in terms of space.

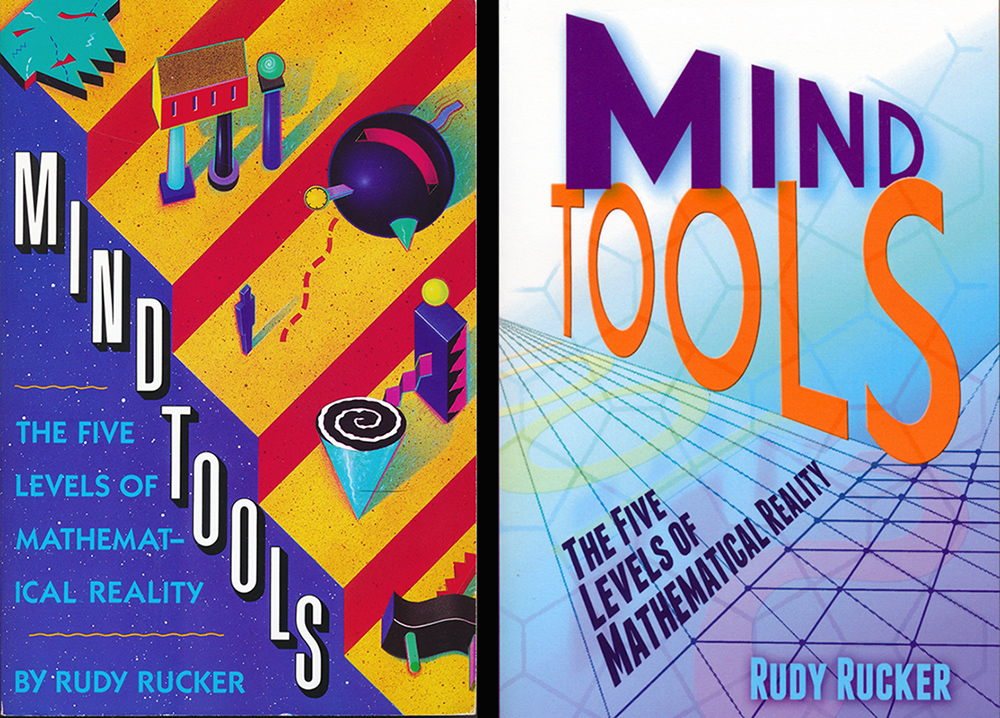

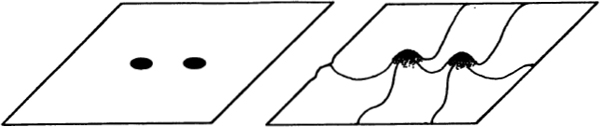

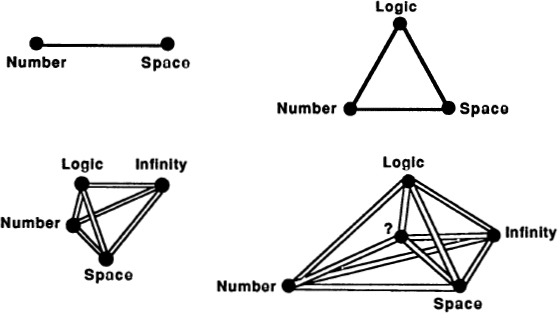

Fig. 1 A triangle as three dots versus a triangle as a piece of space.

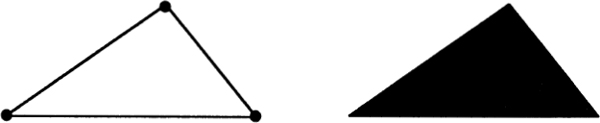

The number-space distinction is extremely basic. Together the pair make up what the Greeks called a “dyad,” or pair of opposing concepts. In Fig. 2 I have made up a table of some dyads related to the number—space dyad. It is easy to think of other distinctions that seem to fall into the same basic pattern. In human relations you can emphasize either the roles of various individuals, or the importance of the overall society. In Psychology we can talk about either various elementary perceptions or the emotions that link them. A mountainside is covered with trees or by a forest. A piece of music can be thought of as separate notes, or as a flowing melody. The world can be viewed as a collection of distinct things or as a single organic whole.

Fig. 2 A table of opposing dyads.

Which takes intellectual priority, number or space? Neither. Smooth-seeming matter is said to be made up of atoms, scattered about like little spots, but the chunky little atoms can be thought of as bumps in the smooth fabric of space. Pushing still further, we find some thinkers breaking smooth space into distinct quanta, which are in turn represented as smooth mathematical functions. The smooth underlies the spotty, and the spotty underlies the smooth. Distinct objects are located in the same smooth space, but smooth space is made up of distinct locations. There is no real priority; the two modes of existence are complementary aspects of reality.

The word “complementarity” was first introduced into philosophy by the quantum physicist Niels Bohr. He used this expression to sum up his belief that basic physical reality is both spotty and smooth. An electron, according to Bohr, is in some respects like a particle (like a number) and in some respects like a wave (like space). At the deepest level of physical reality, things are not definitely spotty or definitely smooth. The ambiguity is a result of neither vagueness nor contradiction. The ambiguity is rather a result of our preconceived notions of “particle” and “wave” not being wholly appropriate at very small size scales.

Fig. 3 particles as lumps versus particles as bumps.

One might also ask whether a person is best thought of as a distinct individual or as a nexus in the web of social interaction. No person exists wholly distinct from human society, so it might seem best to say that the space of society is fundamental. On the other hand, each person can feel like an isolated individual, so maybe the number-like individuals are fundamental. Complementarity says that a person is both individual and social component, and that there is no need to try to separate the two. Reality is one, and language introduces impossible distinctions that need not be made.

Bohr was so committed to the idea of complementarity that he designed himself a coat of arms that includes the yin-yang symbol, in which dark and light areas enfold each other and each contains a part of the other at its core. Bohr’s strong belief in complementarity led him to make a singular statement: “A great truth is a statement whose opposite is also a great truth.”

Bohr thought of the number-space dyad as being an essential part of reality. Given a dyad, there is always the temptation to believe that if we could only dig a little deeper, we could find a way of explaining one half of the dyad in terms of the other, but the philosophy of complementarity says that there doesn’t have to be any single fundamental concept. Some aspects of the world are spread out and spotty, like the counting numbers; some aspects of the world are smooth and connected, like space. Complementarity tells us not to try to make the world simpler than it actually is.

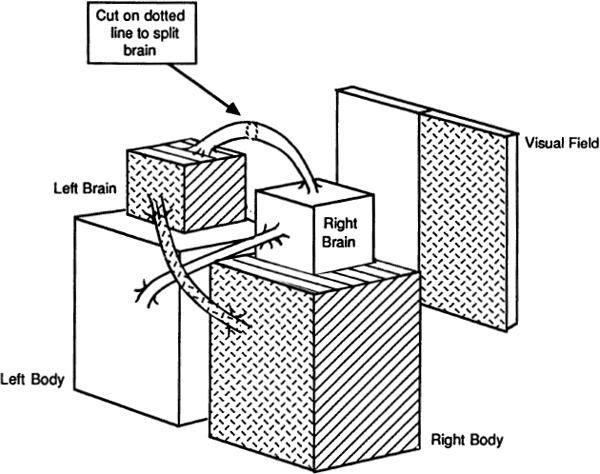

It is interesting to realize that two complementary world views seem to be built into our brains. I am referring here to the human brain’s allocation of different functions to its two halves.

Fig. 4 Niels Bohr’s coat of arms.

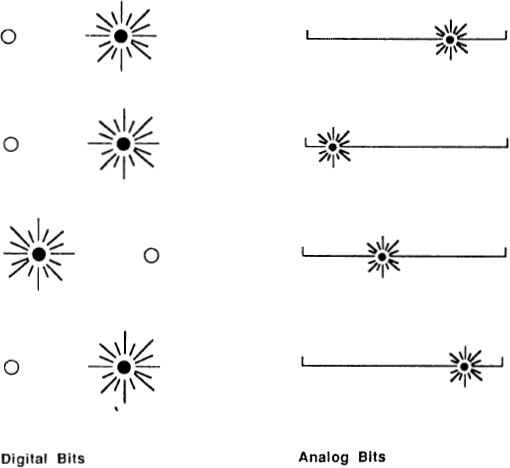

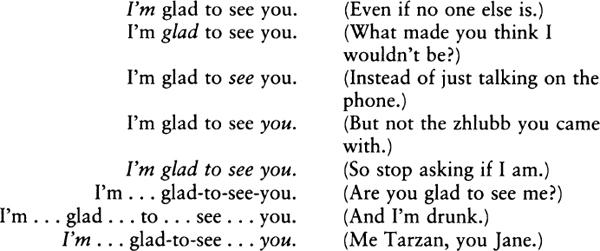

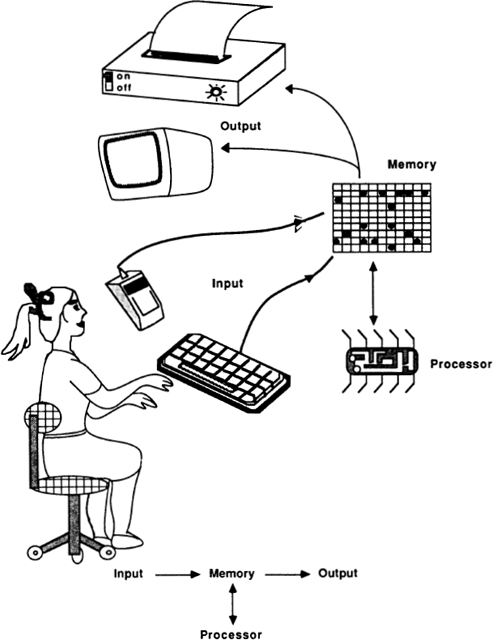

A computer is said to be digital if it works by manipulating distinct chunks of information. This is opposed to an analog computer, which works by the smooth interaction of physical forces. The most familiar examples of analog and digital computers are the two kinds of wrist watches. The old-fashioned analog watch uses a system of gears to move its hands in smooth sweeps, analogous to the flow of time. Loosely speaking, an analog watch is a kind of scale model of the solar system. The newer digital watches count the vibrations of a small crystal, process the count through a series of switches, and display the digits of a number which names the time.

Many of the intellectual tasks a brain performs can be thought of as primarily digital or primarily analog. Arithmetic is certainly a digital activity, and spelling out a printed word is also a basically digital activity. Each of these activities is a “step-at-a-time” process, involving such steps as reading a symbol, finding a meaning for the symbol, and combining two symbols. Singing a song to music, on the other hand, is an analog activity — the speech organs are continuously adjusted to produce tones matching the smoothly varying music. (The digital note patterns of sheet music are but the sluices through which performance flows.) Recognizing a scene in a photograph is also believed to be an analog activity of the brain; the brain seems to see the picture “all at once” rather than to divide it up into lumps of information.

In the 1960s, a variety of experiments involving people with various kinds of brain injuries suggested that, as a rule, the left brain is in charge of digital manipulations and the right brain is in charge of analog activities. Recently this phenomenon has been directly observed by means of PET (Positron Emission Tomography) scans that show that the left brain’s metabolism speeds up for digital tasks, while the right brain’s activity increases during analog tasks. In other words, the left half of your brain thinks in terms of number, and the right half of your brain thinks in terms of space. (See Fig. 5.)

The actual muscles of the body’s right half are controlled by the analytical left brain, while the synthesizing right brain controls the body’s left side. Most full-face photographs of people show significant differences between the face’s two sides. As a rule, a face’s right side will have a more tightly controlled and socially acceptable expression; a face’s left side often looks somewhat out of it.

Is it the internal division of brain function that causes us to see the world in terms of the thesis—antithesis pattern of spotty and smooth? Perhaps, but I think the converse is more likely. That is, I think it is more likely that the number-space split is a fundamental feature of reality, and that our brains have evolved so as to be able to deal with both modes of existence.

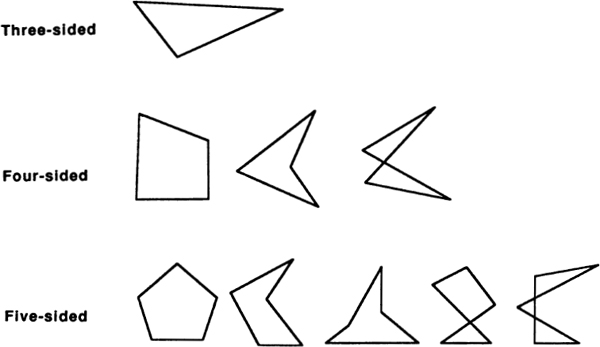

Mathematics is a universal language, so it is not surprising that in mathematics we find both the spotty and the smooth — more technically known as the discrete and the continuous. A pattern is discrete if it is made up of separate, distinct bits. It is continuous if its parts blend into an indivisible whole. Viewed as three dots, a triangle is discrete; but viewed as three lines, a triangle is continuous. What gives mathematics so much of its power is that it contains a variety of tools for bridging the gap between space and number. The two most important of these tools are logic and infinity.

Fig. 5 Our double brain.

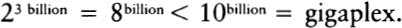

“Infinity” is a hypnotic word, suggesting starships, immortality, The Five Modes of Thought and endlessness. In older writings it is not unusual for authors to say “The Infinite,” where they mean “God.” The word “logic” also has a number of colorful associations: cavemen outwitting mastodons; monks analyzing a passage from Aristotle; Ulam and Von Neumann inventing the H-bomb; a robot brokenly asking, “What is Love?”

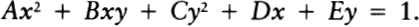

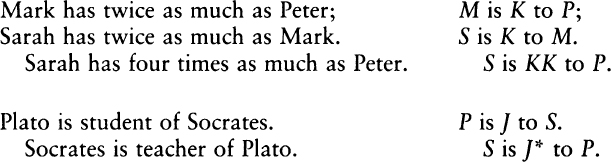

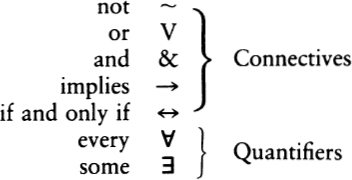

In reality, logic simply has to do with the idea of letting one general pattern stand for a whole range of special cases. The use of logical techniques enables us to move back and forth between lumpy formulas and smooth mathematical shapes. By a kind of idealization, logic lets a single symbol stand for an ineffably complex reality.

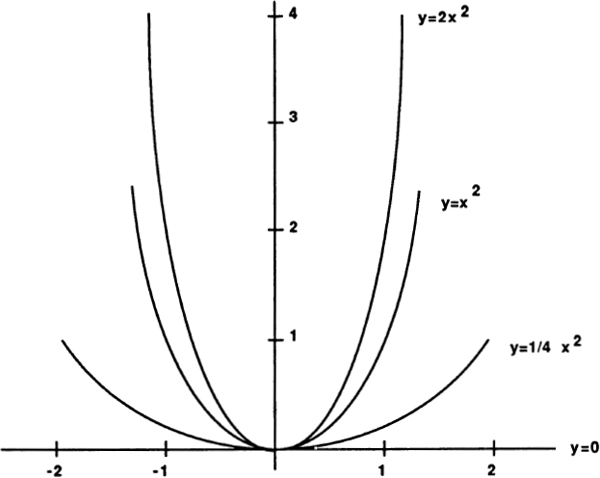

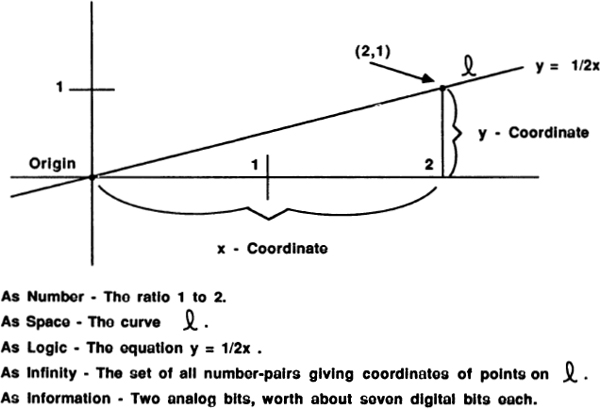

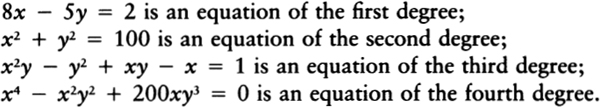

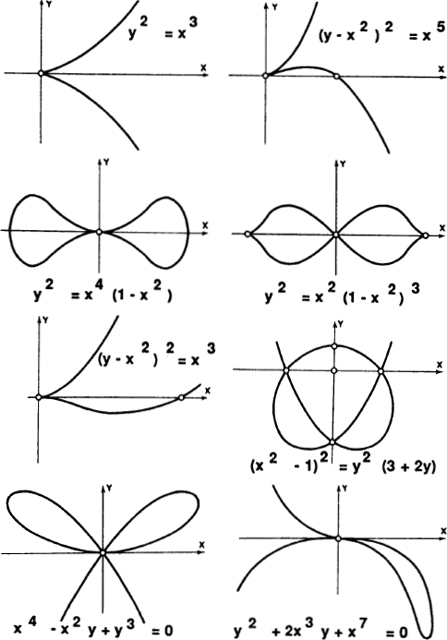

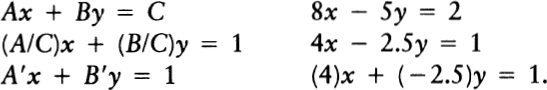

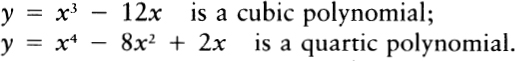

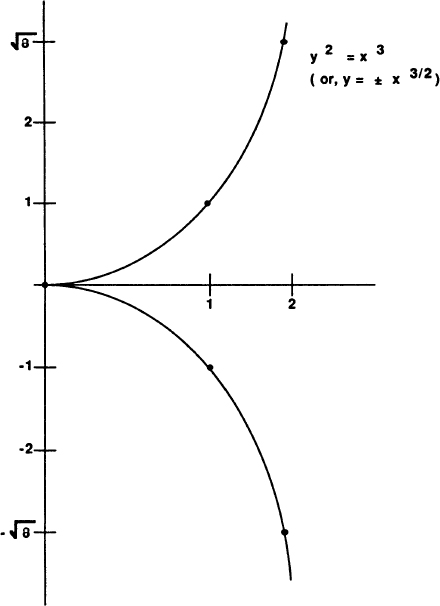

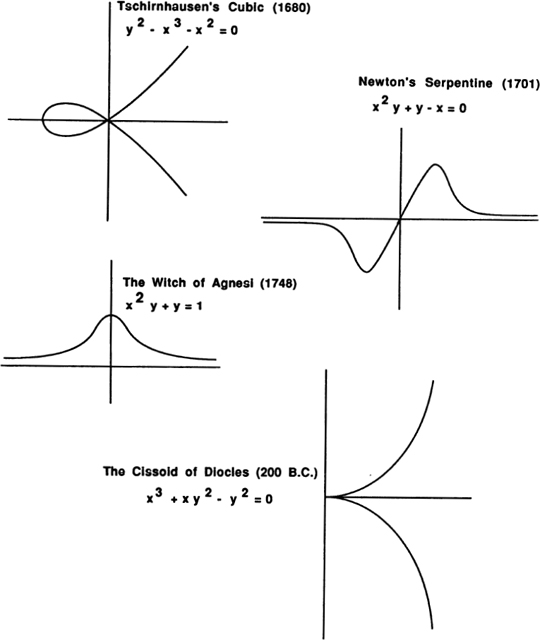

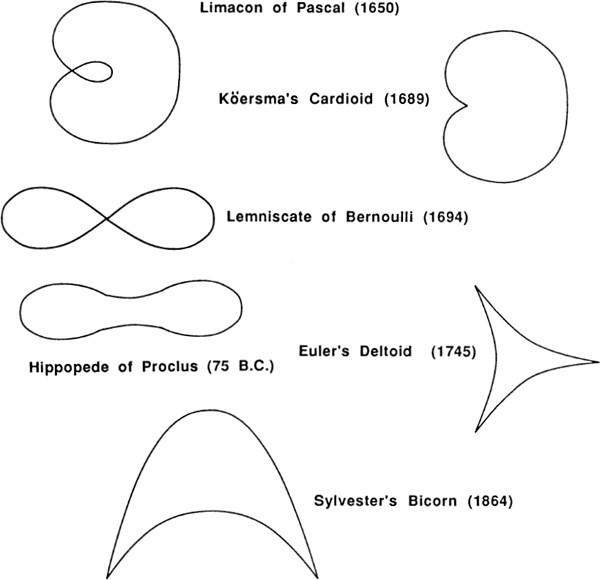

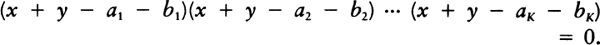

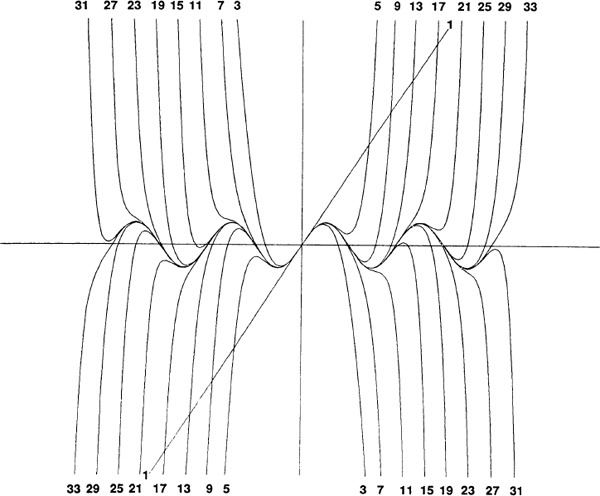

At the lowest level, the logic of mathematics involves using the special symbols for which mathematics is so well known. The equations of algebra are a good example of this kind of low-level mathematical logic. “Algebra” is a wonderful-sounding word for a wonderful skill. Knowing algebra is like knowing some magical language of sorcery — a language in which a few well-chosen words can give one mastery over the snakiest of curves. Like many magical and mathematical words, “algebra” comes from the Arabic, for it was the Arabs who kept the Greek mathematical heritage alive during the Dark Ages. “Algebra” comes from the expression al-jabara, meaning “binding things together,” or “bone-setting.” Algebra provides a way for logic to connect the continuous and the discrete. On the one hand, a parabola, say, is a smooth curve in space, yet mathematical reasoning shows us that the parabola can equally well be thought of as a simple algebraic equation — a discrete set of specific symbols.

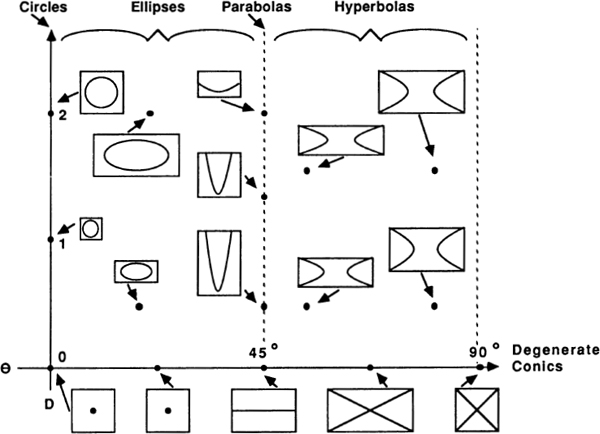

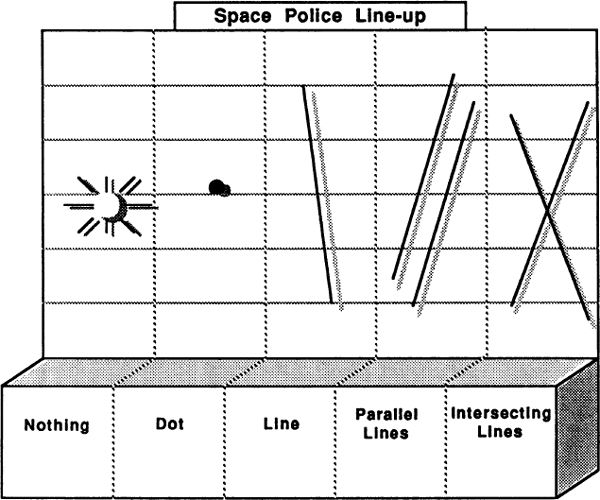

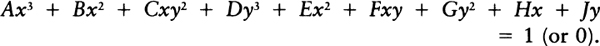

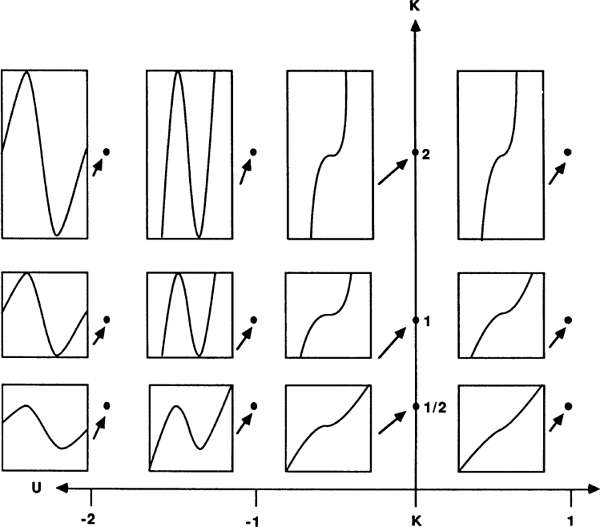

Fig. 6 Curves and formulas.

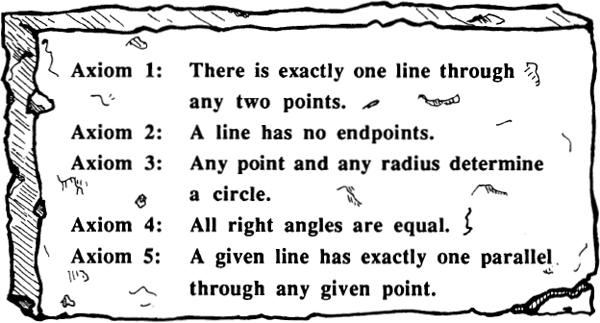

At a higher level, mathematical logic works through tying individual sentences together into mathematical theories. If we think of a plane filled with points and lines, the initial impression is one of chaos. Once we set down Euclid’s five laws (or axioms) about points and lines, however, we have captured a great deal of information about the plane. For purposes of reasoning, the continuous plane is captured by a few rows of discrete symbols.

Fig. 7 Euclid’s axioms.

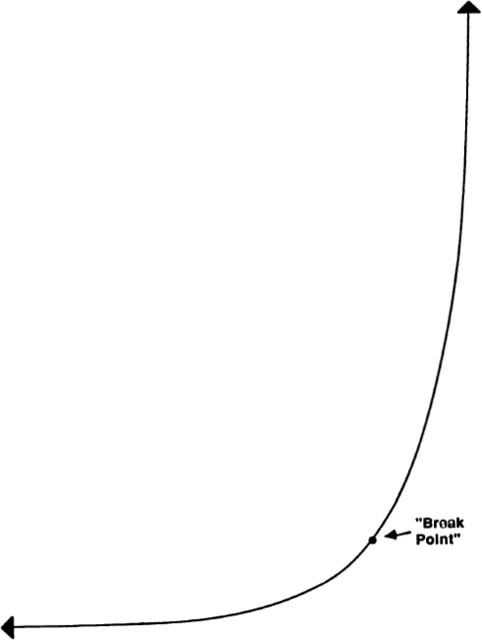

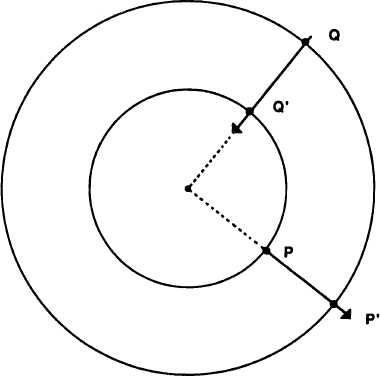

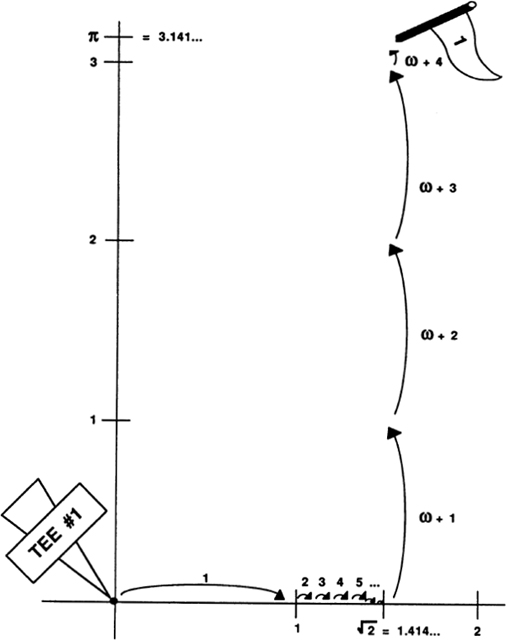

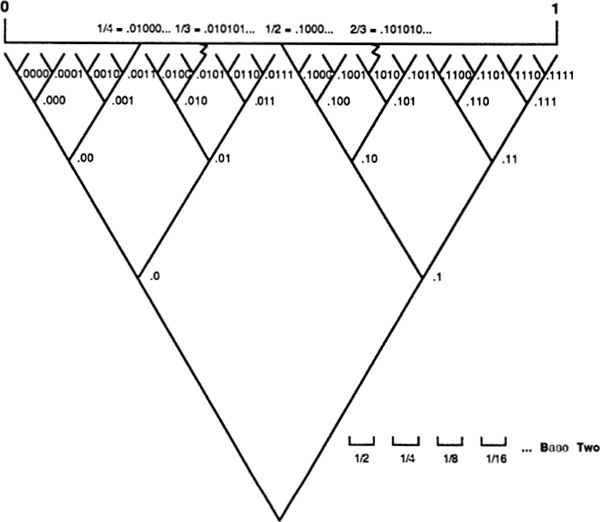

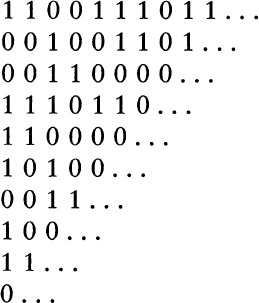

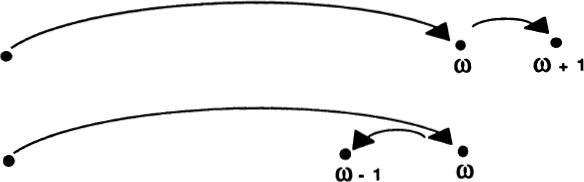

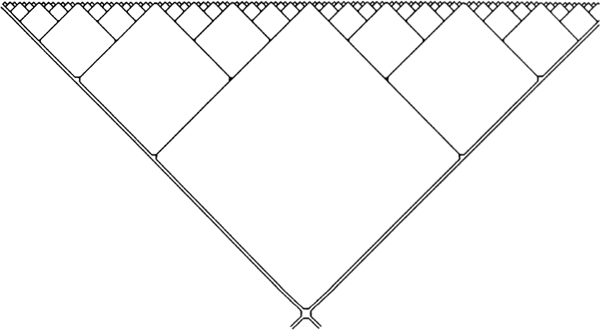

Logic synthesizes, but infinity analyzes. Logic combines all the facts about a space pattern into a few symbols; infinity connects number and space by breaking space up into infinitely many distinct points. Like logic, infinity provides a two-way bridge between the continuous and the discrete. We can start with discrete points and put infinitely many of them together to get a continuous space. Moving in the other direction, we can start with some continuous region of space and narrow down to a point by using an infinite nested sequence of approximations.

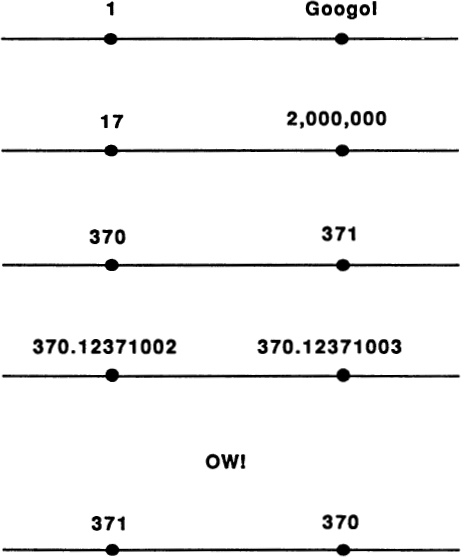

Fig. 8 Infinity is a two-way bridge between the discrete and the continuous.

These two concepts are incorporated into the construction known as the real number line. Although I have been talking a lot about the difference between discrete and continuous magnitudes, it is hard for a modern person to realize how really different the two basic kinds of magnitudes are. This is because, very early in our education, we are all taught to identify points on a line with the so-called real numbers. At some time during our high school education, we are taught to measure continuous magnitudes in decimals — to say things like, “My height in meters is 1.5463 …,” where, ideally, the “…” stands for an endless sequence of more and more precise measurements. The real number system is a concrete example of infinity being used to convert a line’s space into decimal numbers.

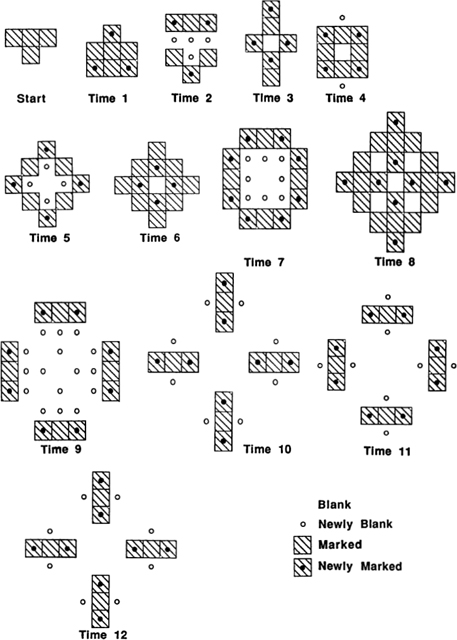

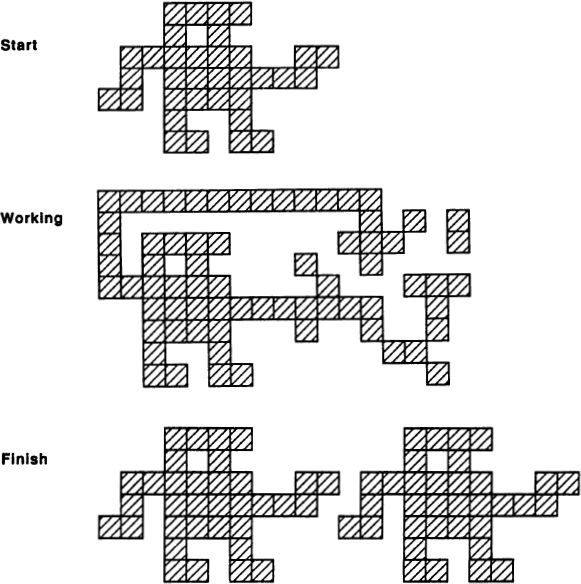

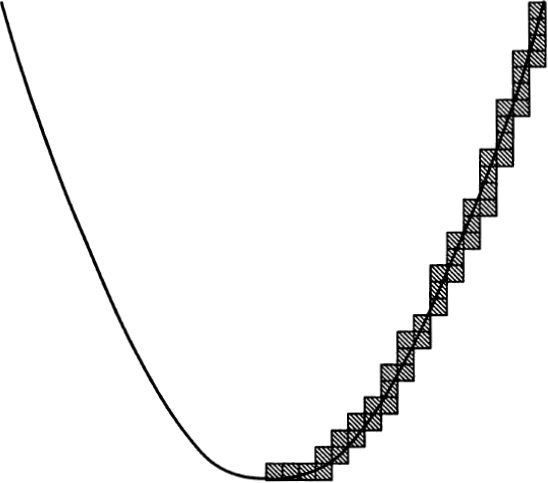

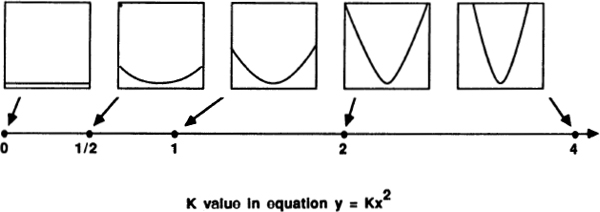

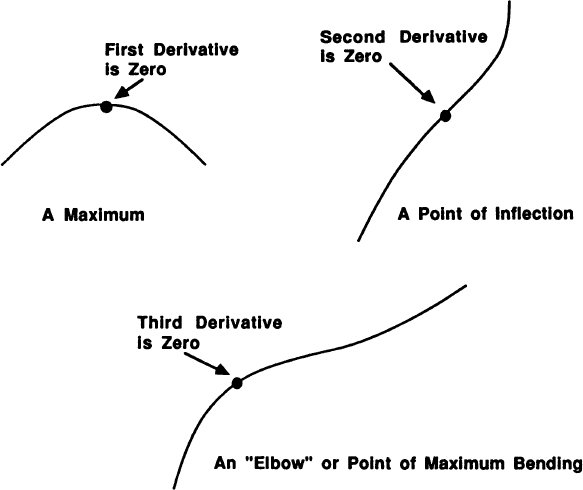

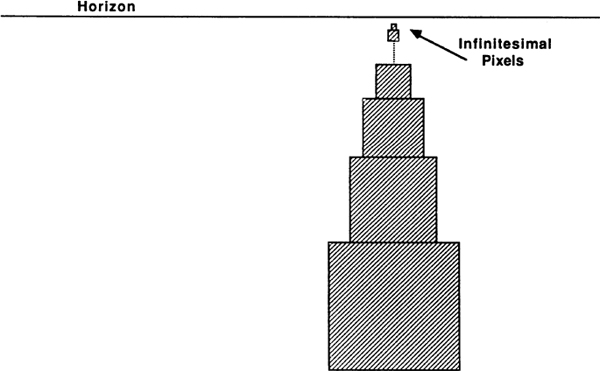

Calculus uses infinity constantly. Indeed, calculus is sometimes known as Infinitesimal Analysis, where “infinitesimal” means “infinitely small.” In calculus we learn to think of a smooth curve as being like a staircase with infinitely many tiny, discrete steps. Thinking of a curve this way makes it possible to define its steepness. This process is known as “differentiation.” Another use of infinity peculiar to calculus is the process known as “integration”: Given an irregular region whose area we wish to know, calculus finds the area by cutting the region into infinitely many infinitesimal rectangles. (See Fig. 9.)

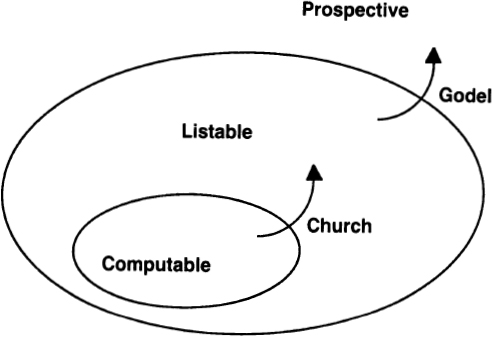

Although logic and infinity serve as bridges between the discrete and the continuous, looked at on a higher level, they also reflect the gap. Thinking logically is basically a digital, left-brain activity, while talking about infinity is an analog, right-brain process. The two modes of thought are complementary. Mathematicians often use discrete, logical axiom systems to describe various kinds of infinite structures. Logic can never fully encompass the riches of infinity, however. Kurt-Godel proved this in 1930, when he showed that no finite logical system can prove all of the true facts about the infinite set of natural numbers.

People often wonder why it is that mathematics is so effective in the sciences. Unlike chess or astrology, mathematics has the curious property of being an intellectual game that really matters. Mathematics helps people build computers and cars, TVs and skyscrapers. Mathematics helps predict when the sun will come up and what the weather will be tomorrow. Mathematics has sent people to the moon and back. Why does math work so well?

As I mentioned above, mathematics is a language whose form is universal. There is no such thing as Chinese mathematics or American mathematics; mathematics is the same for everyone. Mathematics consists of concepts imposed on us from without. The ideas of mathematics reflect certain facts about the world as human beings experience it. Just as our bodies have evolved in response to objective conditions imposed by the environment, our ideas have evolved in response to certain other fundamental features of reality.

Fig. 9 Differentiation and integration.

That distinctions among objects can be made leads to our perception of discreteness. Discreteness leads, in turn, to number. Things do come in lumps, and it is natural to count them.

That smooth transitions can be made leads to our perception of continuity. Things blend into each other, and it is natural to think of them as being in a space that we can measure.

That different kinds of things can resemble each other leads to our perception of similarity. Discussing similarity patterns leads to logic. Various kinds of forms recur, and it is natural to reason about them.

That the world has no obvious boundaries leads to our perception of endlessness. Endlessness leads to infinity. Reality seems inexhaustible, and it is natural to intuit this.

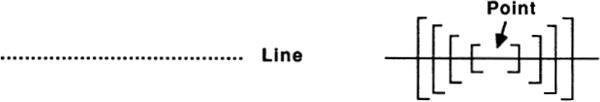

It is worth noting here that the four areas of mathematics — number, space, logic, and infinity — are all treated in most high schools. When students are drilled in arithmetic, they are learning how to manipulate number. Geometry is quite obviously a study of space. Algebra, as was mentioned above, is a type of logic, and calculus, which is often introduced in the twelfth grade, is really a study of infinity.

Number, space, logic, and infinity — the most basic concepts of mathematics. Why are they so fundamental? Because they reflect essential features of our minds and the world around us. Mathematics has evolved from certain simple and universal properties of the world and the human brain. That our mathematics is effective for manipulating concepts is perhaps no more surprising than that our legs are good at walking.

Fig. 10 Mathematical modes of thought.

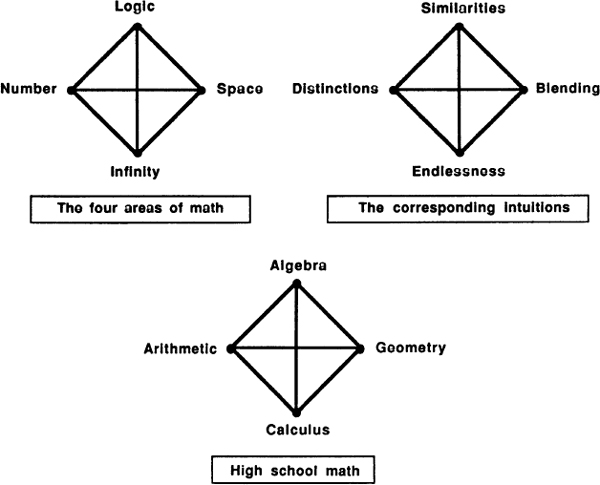

Moving outward, we can use mathematics to change the world around us. Moving inward, we can use mathematics as a guidebook to our own psyches. The early Greeks frequently organized their thoughts in terms of dyads — again, a pair of opposing concepts. The Pythagoreans (who made up one of the earliest schools of mathematics) often drew up “Tables of Opposites” listing related dyads.

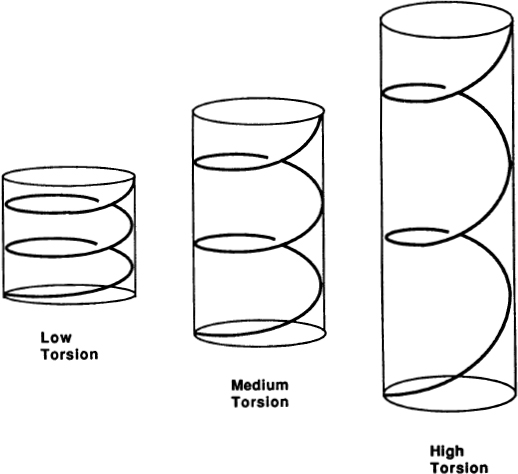

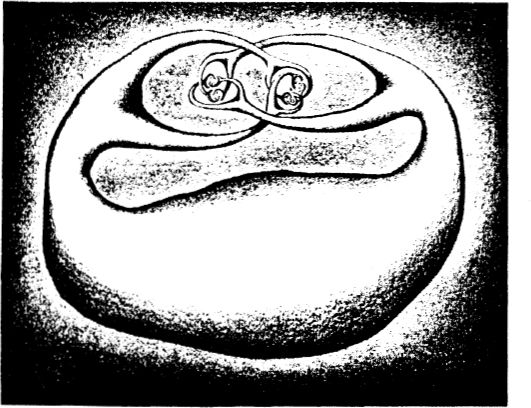

Although it’s not quite relevant, I can’t resist observing here that a DNA molecule is something like a very long table of opposites. The molecule consists of two intertwined “backbones” held together by a long sequence of dyads — matching “base pairs.” The DNA molecule reproduces itself by unzipping down the middle, each unzipped half serving as a template for assembling the missing half. In the same way, given half of a table of opposites, it is not too hard to reconstruct the missing half. Does the similarity between a DNA molecule and a table of opposites have any real significance? Yes. The pattern that DNA and the table of opposites share is pervasive enough to be regarded as an archetype, or an important pattern. Even more pervasive is the archetype of the dyad (also known as the number 2).

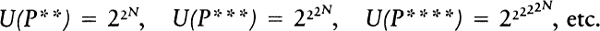

A dyad is a basically static grouping of concepts — a sort of frozen tug of war. One of G. W. F. Hegel’s (who was my great great great grandfather) contributions to philosophy was the idea of grouping concepts into triads, which consist of three concepts arranged in the well-known thesis-antithesis-synthesis pattern. The triad is an essentially dynamic grouping, for each synthesis can become the thesis for a new antithesis. The concepts we will be discussing in this book can be grouped into a series of triads, as shown in Fig. 12 on page 18.

Of course this kind of grouping can be forced too hard and should not necessarily be taken very seriously. Any method of organizing our concepts can easily turn into a “Procrustean bed.” For those who don’t know the story of Procrustes’s bed, let me recall that Procrustes was a legendary outlaw who lived in the wilderness near ancient Athens. His house was near a road, and he would invite weary travelers to spend the night in a special bed that he kept for visitors. The catch was this: If you were too tall for the bed, Procrustes would chop off those parts of you that stuck out; and if you were too short for the bed, Procrustes would stretch you by tying your feet to a stake, bending down a tall sapling, and tying its top to your neck!

Fig. 11 Can you arrange the loose half-dyads in the right order?

Just as Hegel goes a step beyond the Greeks, the psychologist C. G. Jung goes a step beyond Hegel. For Jung, the fundamental pattern of thought is not the triad, but the tetrad, a balanced, mandala-like arrangement of four concepts, also known as the quaternity:

The quaternity is an archetype of almost universal occurrence. It forms the logical basis for any whole judgment. If one wishes to pass such judgment, it must have this fourfold aspect. For instance, if you want to describe the horizon as a whole, you name the four quarters of heaven…. There are always four elements, four prime qualities, four colors, four castes, four ways of spiritual development, etc. So, too, there are four aspects of psychological orientation…. In order to orient ourselves, we must have a function which ascertains that something is there (sensation); a second function which establishes what it is (thinking); a third function which states whether it suits us or not, whether we wish to accept it or not (feeling); and a fourth function which indicates where it came from and where it is going (intuition). When this has been done, there is nothing more to say… . The ideal of completeness is the circle or sphere, but its natural minimal division is a quaternity. (C. G. Jung, A Psychological Approach to the Dogma of the Trinity, 1942. In Collected Works, Vol. 11, p. 167.)

In Figure 10 I already drew some tetrads (I prefer “tetrad,” which Jung also uses sometimes, to the unwieldy “quaternity”); here, in Figure 13 1 show our basic tetrad — the tetrad Jung mentions — as well as further tetrads that will be discussed below. Do the parts of Jung’s tetrad match the members of our math tetrad in a term-for-term way? As an admirer of the noble Procrustes, I am tempted to say “Yes” and to argue that:

1. Sensation ≈ Number. What sensation is really about is making distinctions. The world of sensations is granular in structure. A red patch is not a green patch. It is this making of distinctions that leads to the world of number.

2. Feeling ≈ Space. Having a feeling about something involves being connected to it. We stand back as impartial observers to gather our sensations, but to get a feeling for things, we flow out and merge into them. Only in continuous space is such merging possible.

3. Thinking ≈ Logic. Thinking involves seeing the abstract structures that link our sensations and our feelings. In the process of thinking we look for underlying patterns and compare them. This leads to logic.

4. Intuition ≈ Infinity. Intuition means getting some deep sense of reality as a whole. Viewing the world as a single, mysterious whole leads quite readily to the formation of the concept of infinity.

Just how long was Procrustes’s bed, anyway?

Seriously, it is a little surprising how well the two tetrads seem to fit. Until now we have been drawing tetrads as the corners of a square; one might also think of a tetrad as comprising the corners of a three-dimensional tetrahedron.

For some reason, tetrahedra aren’t as well known as their klutzy Egyptian cousins, the pyramids. The difference between a pyramid and a tetrahedron is that a pyramid is square on the bottom, instead of triangular. What makes the tetrahedron so nice is that no matter which way you turn it, it looks the same. Each corner of a tetrahedron is the same distance from all the other corners. If we think of a triad as an equilateral triangle, then we might say that a tetrad, viewed as a tetrahedron, is made up of four triads.

It would be misleading to say that Hegel and Jung invented triads and quaternities. They simply brought these forms to public attention, in much the same way that a chemist might point out that the members of a large group of molecules share a certain kind of atomic architecture. Dyads, triads, and tetrads are archetypes, where “archetype” means “a recurrent form of human thought.” Triads and tetrads have been around for as long as people have.

Plato, for instance, frequently reasoned dialectically, fitting theses, antitheses, and syntheses together into chains of triads. The Pythagoreans had their own mathematical quaternity: arithmetic, geometry, music, and astronomy.

In the Middle Ages these four branches of mathematics were called the quadrivium, and they made up the core of any scientific course of study. Music bridges arithmetic and geometry, in that music consists of discrete notes arranged in continuous time. Astronomy also lies between arithmetic and geometry, in that astronomy concerns discrete bodies moving about in continuous space. Music and astronomy are in some measure opposed to each other, in that songs are created by men’s logical minds, while the stars are simply given by the infinite cosmos.

Thought patterns … archetypes. “Archetype,” by the way, is another of the wonderful words that Jung made up. Let me quote him once again:

Again and again I encounter the mistaken notion that an archetype is determined in regard to its content, in other words that it is a kind of unconscious idea (if such an expression be admissible). It is necessary to point out once more that archetypes are not determined as regards their content, but only as regards their form and then only to a very limited degree. A primordial image is determined as to its content only when it has become conscious and is therefore filled out with the material of conscious experience. Its form, however,… might perhaps be compared to the axial system of a crystal, which, as it were, preforms the crystalline structure in the mother liquid, although it has no material existence of its own. This first appears according to the specific way in which the ions and molecules aggregate. The archetype in itself is empty and purely formal, nothing but a … possibility of representation which is given a priori. The representations themselves are not inherited, only the forms, and in that respect they correspond in every way to the instincts, which are also determined in form only. The existence of the instincts can no more be proved than the existence of the archetypes, so long as they do not manifest themselves concretely. (C. G. Jung, The Archetypes and the Collective Unconscious, 1934.)

It is evident that the thought forms dyad, triad, and tetrad are objectively given archetypes. They correspond to the very basic numbers 2, 3, and 4. The number 5 is also quite basic, and we might suppose there to be a thought form consisting of five related concepts. Let’s call this form a pentad. One way of drawing a pentad is as a “quincunx,” a legitimate dictionary word meaning “an arrangement of five things with one at each corner and one in the middle of a square.”

This is, of course, a flattened picture. Just as the quaternity takes its truest form if we let it pop up into a three-dimensional tetrahedron, it turns out that a pentad takes its most natural shape if we let it sproing out into a four-dimensional “pentahedroid.”

I call these shapes “the most natural,” because each shape has the property that all of its corner points are at the same distance from all the other corner points. Shapes like these are generically called simplexes. I should point out that for the picture of the pentahedroid in Figure 16 to be correct, you have to imagine that the central point is pushed away into the fourth dimension a bit, so as to make all the lines equal in length. The pentahedroid is sometimes also called a “five-cell,” because it can be thought of as being made up of five equal tetrahedra, just as a tetrahedron can be thought of as being made up of four equal triangles.

Pentahedroids aren’t often seen in these parts, but as abstract patterns they do certainly exist, and if an archetype isn’t an abstract pattern, then what is?

Fig. 16 Simplexes: segment, triangle, tetrahedron, pentahedron.

In any case, what we’re looking for is a fifth concept that fits in at equal distances from all four of the concepts we already have. I want to select a fifth mathematical concept as basic as number, space, logic, and infinity. I would also like there to be a corresponding fifth psychological concept, a concept as basic as sensation, feeling, thinking, and intuition. How shall I find these concepts?

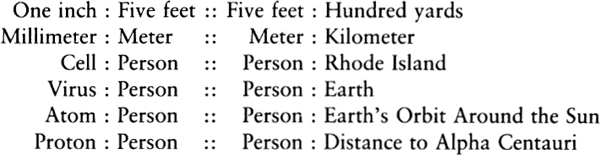

It would perhaps not be too much of a distortion to say that the successive eras of human intellectual history in the last thousand years have corresponded to number (the Middle Ages), space (the Renaissance), logic (the Industrial Revolution), and infinity (Modern Times). With the advent of computers, the age of infinity is now drawing to a close. We are entering a new age of mathematics. What is the fifth mathematical concept that best characterizes our new era?

Information.

Logic connects number and space by reasoning about the similar forms that occur in both areas. Infinity connects number and space by endless processes of approximation. In what way does the concept of information connect number, space, logic, and infinity?

Looked at quite concretely, mathematics is about solving problems. Mathematics turns shapes into areas, conjectures into theorems, equations into solutions. Mathematics turns questions into answers; this is a process of generating information.

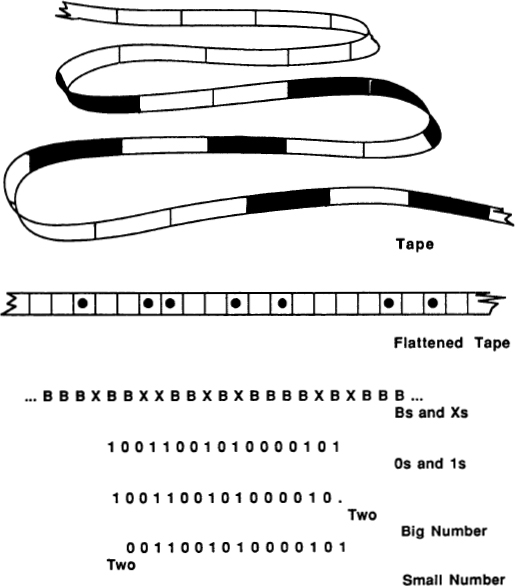

Like logic, information is a somewhat ill-defined concept. We can discuss information at three primary levels: message length, complexity, and understanding.

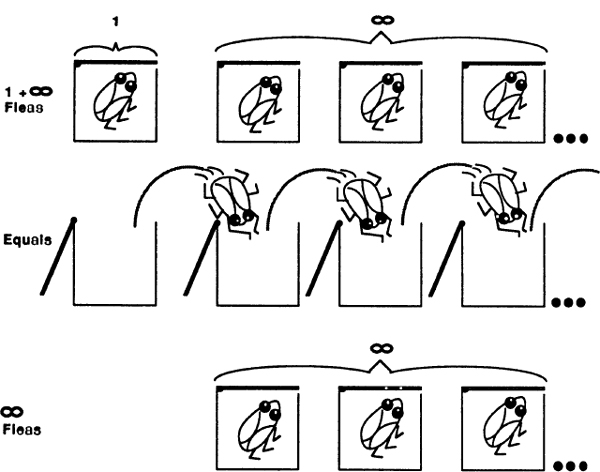

At the simplest level, we feel that information has to do with sending and receiving messages. It seems natural to suppose that the information in a message has to do with the length of the message. A long phone call has more information than a short one; a fat book more information than a thin one.

At the next level, we relate information to the idea of complexity. Some patterns strike us as simple, not having much information. Other, more structured patterns seem to be telling us more, to carry a lot of information. Five minutes of silence over the phone tells you a lot less than thirty seconds of animated conversation. If a blank page seems uninteresting, it is because it carries little complexity, little information. One might consider relating an object’s information content to the length of the shortest list of instructions for building a copy of the object in question. Under this definition, a random-seeming mess would have a lot of “information,” as it would be hard to give a short description of it. An unmown, weedy lawn would have more information than a golf green.

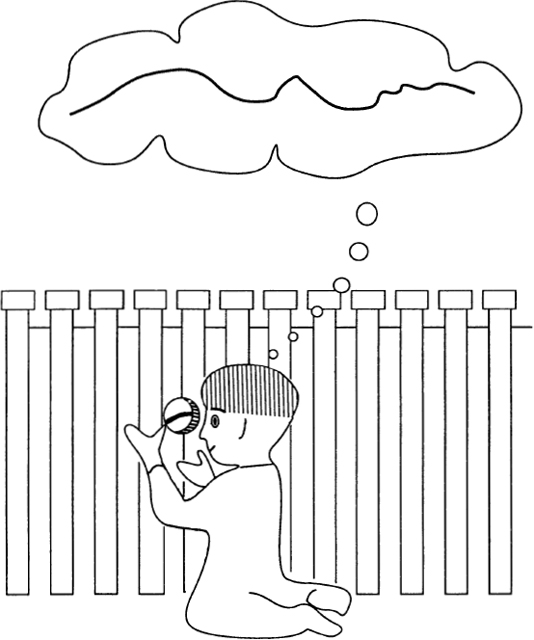

At a third level, we think of information as having to do with knowledge and with understanding. This notion of information connects with the idea of messages in a natural way, because it is reasonable to say that knowledge always arises through a dialogue — between two individuals, between a person and the world, or even between two parts of a person’s mind. For no matter what branch of knowledge you wish to study, your course of study will ordinarily involve looking at (or listening to, or smelling, or tasting, or feeling) various kinds of objects. Some of the objects you look at will be natural — clouds, waves, trees, and dogs. Your observations will always affect the objects, to a greater or lesser extent, and your observations will leave traces on your own brain. Some of the objects you look at will be people, and some of these people will talk to you and make signs with their hands. You may ask these people questions, and they may answer. Some of the objects you look at will be books about things you are interested in. To digest a book, you will think about it; you will write in its margins; you will argue about it with yourself, or with your mental image of the author. Learning things involves absorbing information — raw information from the physical world, as well as information from other people. Learning things also involves generating information — asking questions, forming memory traces, and writing things down. Whenever you are learning, you are sending and receiving transmissions, generating and absorbing information. You are communicating.

Even more than our first four basic concepts, the concept of information currently resists any really precise definition. Relative to information we are in a condition something like the condition of seventeenth-century scientists regarding energy. We know there is an important concept here, a concept with many manifestations, but we do not yet know how to talk about it in exactly the right way.

Despite all this, there is a branch of mathematical science called information theory. Appropriately enough, the modern mathematical theory of information was founded by Claude Shannon, an engineer at Bell Laboratories, home of the telephone. Shannon’s most important papers, written in the 1940s, arose from work having to do with improving the reliability of long-distance telephone and telegraph lines. Indeed, Shannon originally called his work communication theory, rather than information theory.

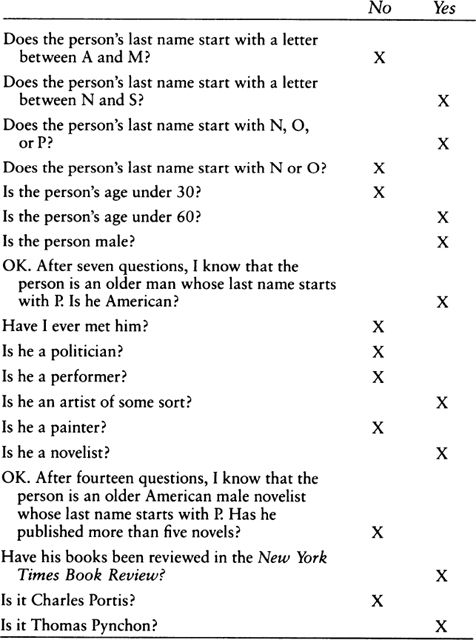

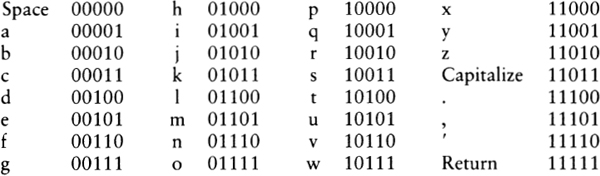

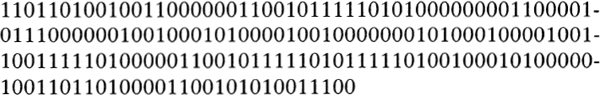

How exactly does one go about measuring information so as to formulate a scientific theory about it? For Shannon, information is measured in “bits.” If I ask you a yes-or-no question, then your answer supplies me with one bit of information. If I play a game of Twenty Questions with you, then, in the process of my asking you twenty yes-or-no questions, I am getting twenty bits of information out of you. A halftone picture in a newspaper can be thought of as having as many bits as it has black-or-white dots. A message in Morse code might be said to have as many bits as there are long-or-short beeps. A flip-flop on-off switch in a computer’s memory bank stores up one bit of information. But what about things that don’t naturally fall into patterns of yes-or-no choices? How do we assign a bit count to a painting, or to a page of English prose? The Twenty Questions idea is useful here.

Recall that in Twenty Questions the objective is for the asker to figure out which famous person the answerer is thinking of. The asker begins with questions like: “Is the person male?” “Is the person American?” “Is the person presently living?” “Is the person in show business?” It is a little surprising how often the asker can actually figure out which famous person the answerer is thinking of on the basis of only twenty bits of information! (It turns out that Twenty Questions usually works because there are only about one million famous people to choose from. Mathematically inclined readers will recognize that this follows from the fact that one million is approximately equal to two to the twentieth power.)

In general, given any message, the information content of the message is said to be equal to the number of yes-and-no questions one would have to ask to guess the actual message. Obviously, a long written message in English is going to carry a pretty large number of bits of information. Shannon estimated that normal written English has an information content of about 9.14 bits per word, though an English passage that uses a lot of unusual words will have a greater information content. For Shannon, the information content of a message is, in a sense, related to the degree of unpredictability of the message.

Fig. 18 Thomas Pynchon in eighteen bits of information.

An example: A novel by Vladimir Nabokov carries more information than does a Gothic romance; that is, if a single word is missing from a Nabokov book, it might take a dozen guesses to ascertain the word, whereas a word missing from a Gothic romance can probably be guessed in two tries. This observation is not necessarily a value judgment; it is an objective fact about the kinds of language used in the two types of books. Nabokov uses arcane words; authors of Gothics are expected to avoid such words.

There is, of course, no real need for a message to be made up of words. A message might just as well be a picture, an equation, a wave form, or even a thought. The medium for transmitting the information is known as the channel. When we speak to one another, the channel we are using is sound waves. Looked at a bit differently, we speak by varying the air pressure around us. If people had never heard of speech, how strange it would seem! Imagine, by comparison, a race of beings who communicate among themselves by varying the magnetic-field strengths in their vicinity.

The thoughtful reader will see a number of problems with Shannon’s Twenty Questions measure of information, and these problems are real. What if the asker is a recluse who has never heard of any of the famous people? What if the asker is Japanese, and you must first teach her English? What if the asker is a giant slug from Saturn accustomed to talking via methane mucus densities? The problem is that one receiver’s information is another receiver’s random noise.

One of the confusing things about information is that it is, to some extent, a relative concept rather than an absolute one. The situation is a bit like logic — if I say that something is logically provable, then you can naturally ask, “Provable from what?” By the same token, if I say that some pattern contains a lot of information, you may well ask, “Information for whom?” At this time, “information” has no universally applicable definition, but the only way we will get anywhere is by trying. More than anything else, Mind Tools is an investigation into the nature of information.

Let me say a little more about the question of how information can be said to unify the four traditional branches of mathematics. Looked at in a certain way, most mathematical techniques have to do with rules for finding things out. In grade school we memorize the times tables up to ten times ten, and we learn techniques for working out the values of larger products. In algebra we learn how to solve simple equations in one or two unknowns. When we study Euclidean geometry, we learn how to put axioms together into proofs of theorems. Calculus provides a set of techniques for computing irregular areas and volumes, and so on. Mathematics is, to a large degree, a body of techniques for transforming one kind of information into another. Working a math problem is akin to decoding a message.

The decoding techniques of mathematics are collectively known as algorithms, a word derived from the name of the ninth-century Arab mathematician al-Khuwarizmi. Al-Khuwarizmi wrote a book on the art of calculating with the then-unfamiliar “Arabic” number notation that we all use today. In modern times, the science of programming computers is sometimes known as “algorithmics.”

Harking back to the general considerations of the last section, what aspects of human psychology might we best relate to information? One answer is communication. Insofar as we do not exist as isolated individuals, we try to communicate with each other. This is a basic psychological activity. Closely related to communication is memory. Insofar as we do not exist solely at one isolated instant in time, we try to remember what happened to us in the past. Communication involves being able to reproduce some information pattern at a distant place; analogously, memory involves being able to reproduce some information pattern at a distant time.

Anyone at the receiving end of a communication is involved with “information processing.” I may communicate with you by writing this book, but you need to organize the book’s information in terms of mental categories that are significant for you. You must process the information.

Spoken and written communication are, if you stop to think about it, fully as remarkable as telepathy would be. How is it that you can know my thoughts at all, or I yours? You have a thought, you make some marks on a piece of paper, you mail the paper to me, I look at it, and by some mysterious communication algorithm I construct in my own brain a pattern that has the same feel as your original thought.

Nowadays, computers are no more controversial than television. Like them or not, they’re a fact of life. The revolution is over. Computers are in our factories, on our desks, and just about everywhere else. Microcomputers are built into most new appliances and toys. What were we so frightened of?

I think the real issue was that the computer revolution forced people to begin viewing the world in a new way. The new world view that computers have spread is this: everything is information. It is now considered reasonable to say that, at the deepest, most fundamental level, our world is made of information. Under this new world view, what you see is what you get, and what you say is what you are. For postmodern people, reality is a pattern of information, a pattern in fact-space.

Number, space, logic, infinity, and information. I have already discussed how the five basic concepts relate to each other philosophically and psychologically; now I’d like to use them as the basis for a brief history of ideas. If this is the Information Age, what were the earlier Ages of Western thought? How do they fit together, and how did they start?

1. Middle Ages. The use of written language made it possible to invent and preserve specific names for things. The written name of a thing was a near-magical symbol for the thing itself.

The characteristic activity of the Middle Ages was labeling. Medieval science lists endless strings of special cases. The objects in medieval paintings were used as symbols for various archetypal vices and virtues. Theologians concerned themselves with finding names for levels of beatitude. The thrust of the era was to turn away from the actual world and to shuffle through the stacks of labels.

The activity of labeling is essentially number-oriented. Numbers themselves are simple, universal labels, and numerologists view them this way. The medieval belief that the entire cosmos could be categorized according to preordained schemes is essentially a belief that the world is like a heap of numbers. The Middle Ages was the Age of Number.

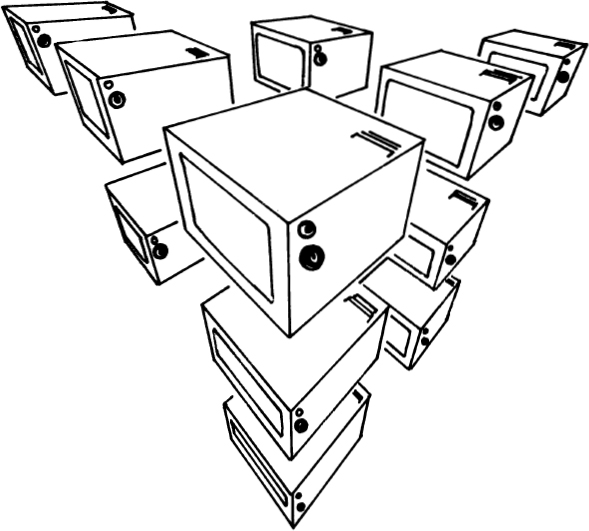

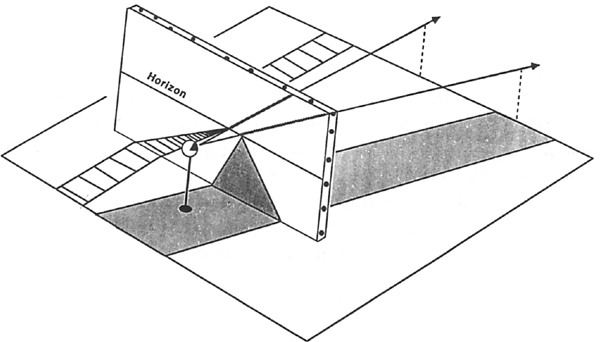

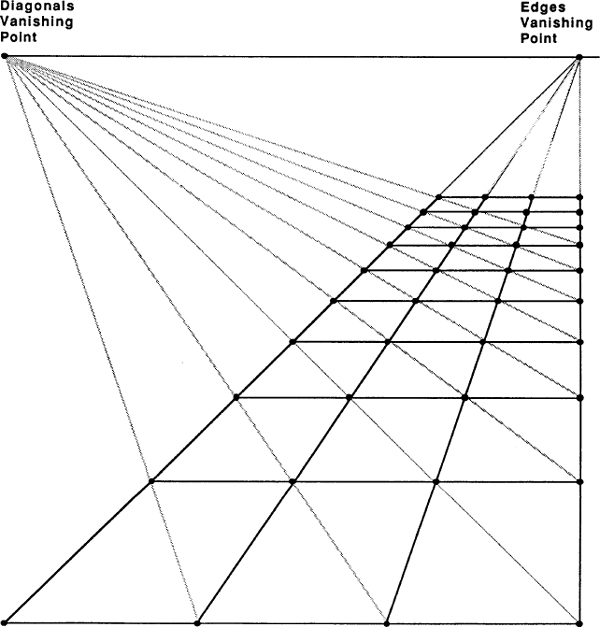

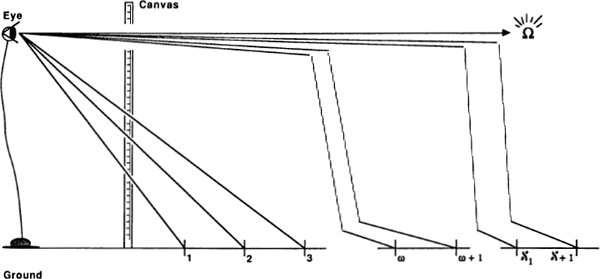

2. Renaissance. The key fact about Renaissance perspective painting is that physical space is treated in a mathematical way. A medieval painting is a flat screen with icons displayed on it to code up a number like message, but a perspective picture ties the picture objects into a single mathematical space. This reflects a radically different way of looking at the world. Instead of being names in God’s mind, things became structures in mathematical space. The Renaissance was the Age of Space.

Galileo’s astronomical discoveries made it clear that the space between the planets is essentially no different from the space we walk around in. More importantly, his experiments with balls rolling down ramps showed that physical motions are regulated by the same laws in every location. Instead of viewing objects as “acting according to their nature,” people began to think of objects as acting in accordance with forces that spread out through space. Volta’s discovery of electricity, and of electricity’s effects on the muscles of frogs, tended to further support the vision of the world as a space pervaded by fields of force.

The new discoveries were spread by means of the printing press. Reading, as McLuhan points out, necessarily induces people to rely more on their visual sense. A subtler effect of printed books is that the same book can be present in many places; this too enhanced the view of the world as space.

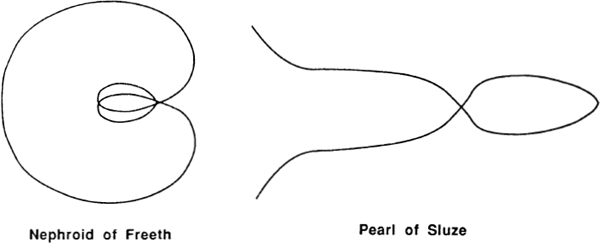

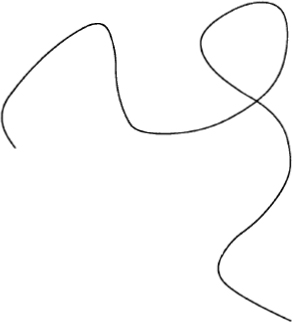

We find the mathematicians of this era concerning themselves with many kinds of space forms that earlier thinkers had deemed to be intractable. Instead of ignoring shapes that lacked simple names, the Renaissance mathematicians sought to understand them as actual forms.

3. Industrial Revolution. A complex machine is a perfect example of a logical system. Chains of cause and effect dart back and forth through the machine with wholly predictable outcomes. What could be more logical than a steam engine? This was the Age of Logic.

Newton’s classic Principia is essentially a logical system. Instead of worrying about how objects could “act at a distance” and exert forces on each other, Newton chose to find universal laws to describe the action of the world’s machinery. For Newton, the solar system is like a huge clock. The full flowering of the logic-based approach to physics came with Maxwell’s equations for electricity and magnetism. Even though Maxwell stated his equations in terms of space-like force fields, he is known to have thought of them as descriptions of invisible machinery.

Economists of the Industrial Revolution liked to think of society as a big machine. The notion of an “economic man” who acts rationally to maximize his wealth is wholly logical and wholly mechanistic.

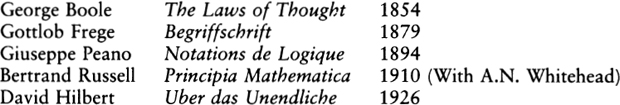

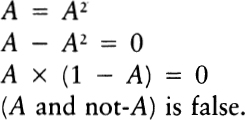

In mathematics, the Industrial Revolution saw algebraic calculation rise to new levels of expertise. George Boole had the notion of converting rational thought into a kind of algebra, and symbolic logic was born.

4. Modern Times. One thing that characterizes the modern era is the loss of certainty. Truth is no longer some number like rules, nor a serene space-like picture, nor a system of logic. Truth is, rather, infinitely complex. The crackling voice of the radio speaks as if from the endless ether beyond, as it should in the Age of Infinity.

Statistical mechanics explain such phenomena as heat and pressure in terms of large numbers of interacting individuals. The numbers of atoms involved are, relative to us, infinite. These figurative infinities become actual in quantum mechanics. The essence of modern quantum mechanics is that no complete description of the world can be contemplated; due to the uncertainty principle, quantum mechanics must treat the world as something that is essentially beyond our full comprehension. Mathematically, the theory is presented in terms of infinite-dimensional space. Relativity theory makes possible the science of cosmology, which attempts to study the universe as a whole.

In society, the modern era has seen the rise of mass movements and world war. This has led to widespread alienation, as people despair of having any effect on the essentially infinite complexities of modern life. Many individuals have turned aside from society to seek a direct mystical union with the Infinite itself.

In mathematics, the modern age brought Georg Cantor’s set theory, an exact science of the infinite, and Godel’s theorem set bounds to what logic can do without intuition.

5. The Postmodern Era. The computer is so suggestive a model for a human brain that it forces us to look at what it is we actually do. It is now quite normal to regard oneself as a finite system that processes information, and not as an immortal soul. The soaring cosmic uncertainties that inspired the Modern era can now be viewed as simple limitations on human information complexity.

Contemporary physicists are turning more and more to methods of computer simulation. An idea very much in the air these days is that physical systems can be thought of as information processors. A drop of ink is placed in a glass of water, and the water processes the drop into streamers. People are proving results about how rapidly a physical system can generate information and, working in a slightly different direction, some researchers are trying to show that certain simple physical systems behave like irreducibly complex “universal computers.”

Socially, the rise of information is ending the alienation so characteristic of modern mass culture. Mass-produced products are being individualized — think of cable TV and of personalized automobile plates. Computerized polling procedures are giving the citizen new power over the government. The notion of information-theoretic complexity as an absolute good pervades postmodern ethics, leading to ever-increasing pluralism.

In mathematics, the study of information is leading to a number of fascinating new insights. Mind Tools is designed to give you a feeling for information, and to show you how information relates to number, space, logic, and infinity.

For one reason or another, we humans very commonly use 0 and 1 as an example of the most basic possible type of distinction. We think in terms of many other such distinctions — off and on, negative and positive, night and day, woman and man — but, at least to a mathematician, zero and one have a special appeal.

What is it about zero and one? Suppose I free-associate a little.

I think it is significant that the international hand gesture for coitus consists of a forefinger (1) bustling in a thumb and forefinger loop (0). This key archetype finds socially acceptable expression as the fat lady and the thin man. The symbol 0 seems egg-like, female, while 1 is spermlike and male. Can this really be an accident? A study of the history of mathematics shows that our present-day symbols were only adopted after centuries of trial and error. It seems likely that the symbols to survive are those that best “fit” our ingrained modes of thought.

An egg is round, and a sperm is skinny. There’s only one egg, but there are lots and lots of sperm. The sperm are active, while the egg just waits. The lovely round woman is besieged by suitors seeking rest, seeking completion.

Formally speaking, both zero and one are undefinable. The primitive concepts “nothing” and “something” cannot be explained in terms of anything simpler. We understand these concepts only because they are built into the world we live in.

Before the beginning of time, the universe is like a 0, an egg. The egg hatches, and out comes 1, the chicken. Chickens here, chickens there, chickens, chickens everywhere.

Why, incidentally, does the egg hatch? Why is there something instead of nothing? Well, if there weren’t any somethings, then we wouldn’t be here asking about it… . Is this enough of an answer?

Occasionally, in moments of deep absorption, all distinctions may seem to fall away, and you do have a kind of 0 experience. As long as you are, in some sense, in touch with the void, you aren’t going to be asking, “Why is there something instead of nothing?” But the moment passes, and you’re a regular person again. Cluck, cluck.

One and zero are like Punch and Judy, endlessly acting their play. What is perhaps astonishing is that mankind has managed to build up a science based on this play: the science of number.

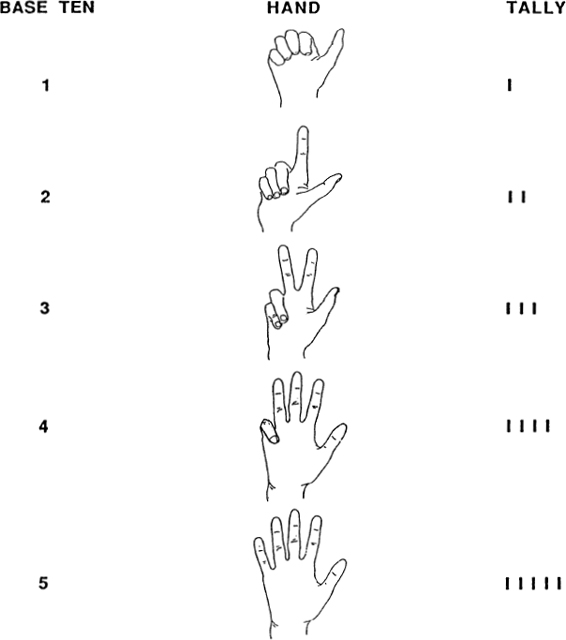

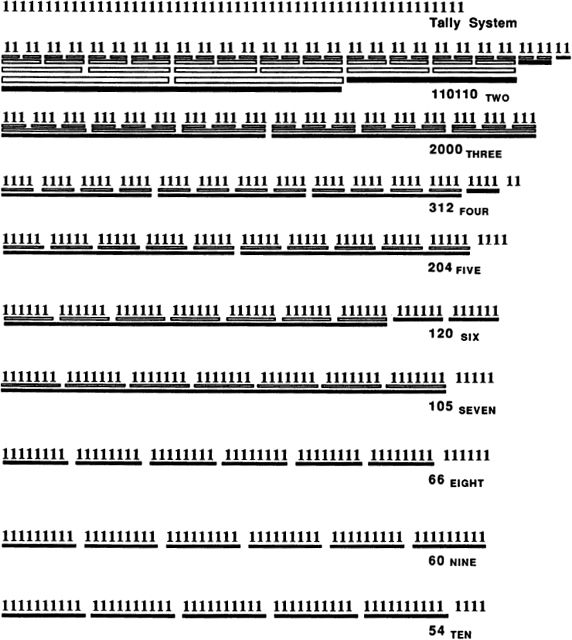

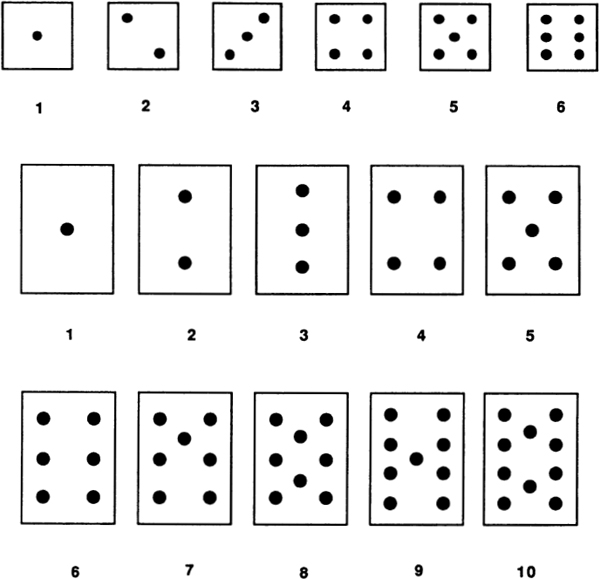

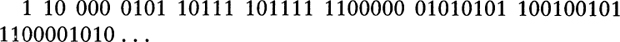

The simplest possible numeration system is the “tally” system. Here a number N is represented by a list of N ones. Ordinary finger-counting uses this system (Fig. 20).

The tally system is awkward for expressing big numbers. The Roman numeration system is considerably more efficient. Recall that the Romans used I, V, X, L, C, D, and M to stand for, respectively, 1, 5, 10, 50, 100, 500, and 1000. Writing X instead of 1111111111 is a big savings. One flaw with the Roman system is that you can’t always read a Roman number one letter at a time — to know that IV is 4 and LX is sixty, you have to see two letters together. This makes Roman numbers hard to read. How many of us have seen the Roman number MDCCLXXVI on the back of a dollar bill without realizing that it’s supposed to be 1776? Another problem with the Roman system is that it peters out at M, so that if I want to express eleven thousand, I have to write MMMMMMMMMMM. Out past M, something like the tally system sets in again.

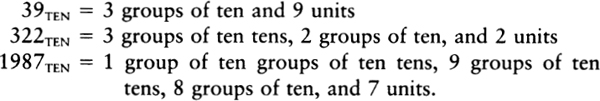

These days, every advanced culture on Earth uses the familiar base-ten system of numeration. Quantities are expressed as sums of units, groups of ten, groups of ten tens, groups of ten groups of ten tens, and so on:

The subscript “TEN” is a reminder that our number system is based on groupings of ten. It is a little hard to grasp that there is nothing sacred about the base-ten numeration system. Simple-minded as it may seem, the only reason we use ten is that we have ten fingers. A numeration system could equally well be based on, say, three — with groups of three, groups of three threes, groups of three groups of three threes, and so on. To make this clear, take a look at the different ways in which 54 units are broken up and described in numeration systems based on two through ten.

Fig. 20 Counting to five.

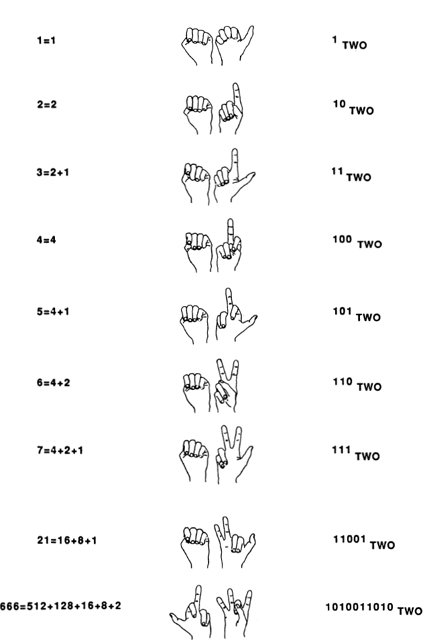

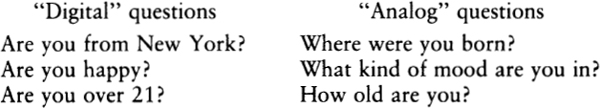

Digital computers use the “binary” or base-two number system. One reason for this is that computer memories are based on internal switches which can be set two ways: on or off. By letting on be 1 and off be 0, computers can represent numbers by setting their memory switches in various arrangements.

Fig. 21 54 in different number bases.

By applying a binary system to the positions of your ten fingers, you can actually count up to 1,023. This is done as follows. Lay your two hands down side by side, palms up as in Fig. 22. Running from right to left, associate each digit with a successive number from the “doubling sequence”: 1, 2, 4, 8, 16, 32, 64, 128, 256, and 512. These are, of course, powers of two. The “grouping by twos” process suggested in Fig. 21 indicates that each whole number can be represented in a unique way as a sum of some numbers from the doubling sequence.

If you stick up the appropriate finger for each doubling-sequence number used in a given number’s breakdown, you get a finger pattern specific to that number, as illustrated in Fig. 23. To the right of the finger patterns, I have written the corresponding base-two notation for each pattern.

Using only one hand, you can represent any number between 00000TWO and 11111TWO, which is any number between 0 and 31TEN; 32TEN possibilities in all. If you use your toes as well as your hands (though raising and lowering toes is not easy) you could reach 1111111111111111111lTWO? which is 1,048,576TEN, or a bit more than a million.

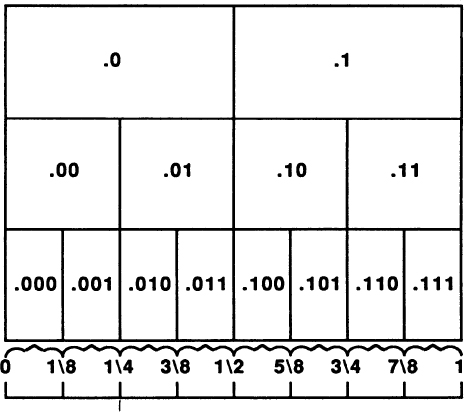

If we try to base a numeration system on one, we end up with the tally system, because all powers of 1 are equal to 1. Two then has the virtue of being the smallest number that can be used as the base of a numeration system. For purposes of analyzing how much information a decision among N possibilities involves, it is good to think in terms of base two.

To choose among thirty-two possibilities is to make five 0/1 choices in writing out a five-digit binary number. Another way of putting this is to say that a choice among thirty-two possibilities requires five bits of information, where a bit of information is a single choice between0 and 1. It is significant that thirty-two is two to the fifth power, and a choice among thirty-two possibilities takes five bits of information. In general, choosing among two to the N possibilities requires N bits of information. Making the choice is like selecting a path along a path that has N binary forks in it.

Fig. 23 Base-two finger counting.

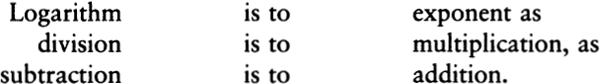

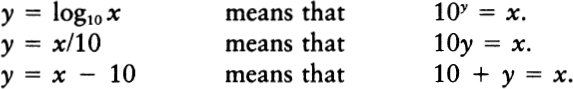

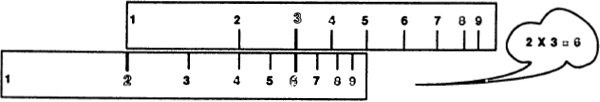

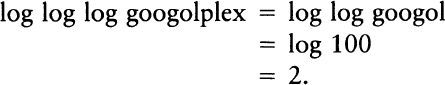

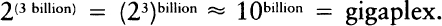

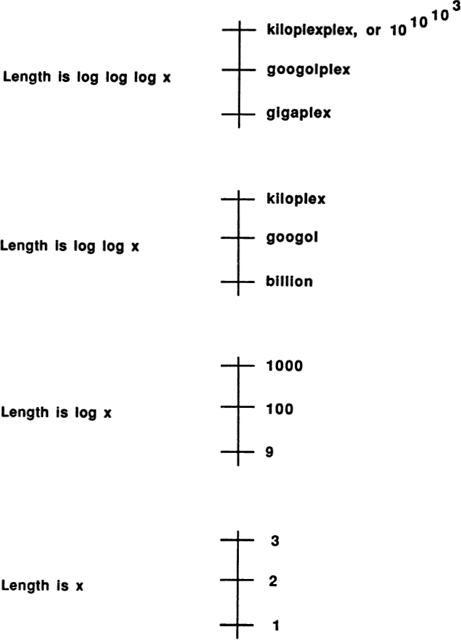

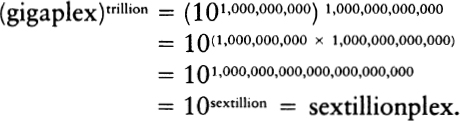

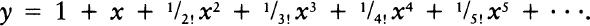

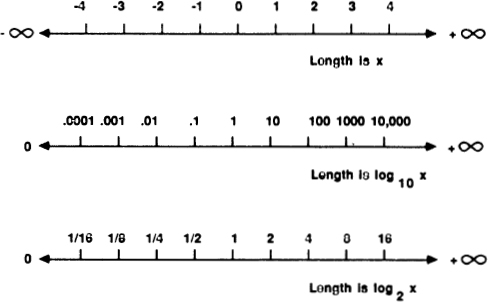

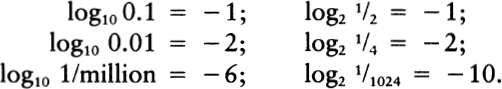

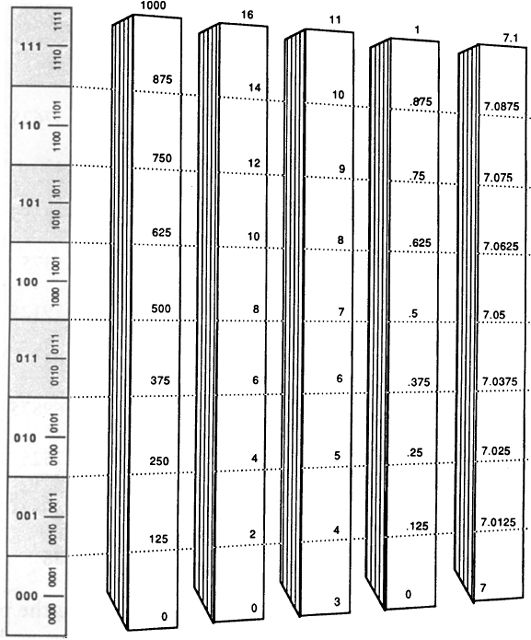

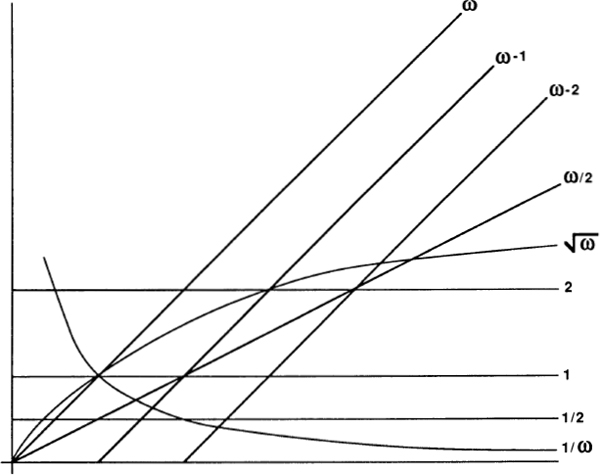

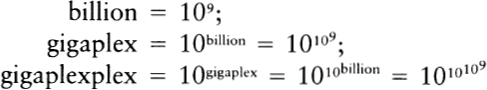

To express this insight more clearly, we need to talk about logarithms. Information theory is usually formulated in terms of logarithms. In the past, this always put me off; like anyone else, I hated logarithms, but then I had a key insight: the logarithm of a number is approximately equal to the number of digits it takes to write the number out. In base ten, log 10 is 1, log 100 is 2, log 1987 is about 3, log 12345 is about 5, and the log of one billion is 10.

Formally, we define the logarithm operation as the inverse of exponentiation. In base ten, the logarithm of a number N is precisely the power to which ten would have to be raised to equal N.

We don’t have to base our logarithms on ten. When we are doinginformation theory, it is much better to base our logarithms on two. In base two, the logarithm of a number N is precisely the power to which two would have to be raised to equal N:

No matter what base we use, logarithms have a number of very beautiful properties. Three properties that make logarithms useful for calculation are

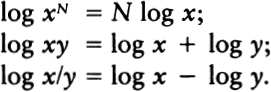

In the old days, people used to compute a large product like 1987 × 7891 by looking up log 1987 and log 7891, adding the logs, and looking up the number whose log is equal to the sum. The computation was done either by means of a log-antilog table, or by means of a slide rule. A slide rule basically consists of two identical sticks printed with number names in such a way that a number’s name appears at a position whose actual distance from the stick’s end is the logarithm of the number. Products are computed by the analog process of measuring off the corresponding stick lengths. Electronic calculators actually do their work by using logarithms, though one doesn’t notice this except when the hidden log-antilog steps lead to round-off errors such as saying “1.9999999” when “2” is meant.

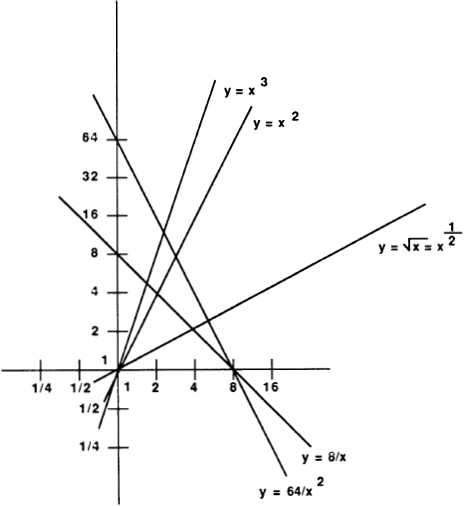

It is often useful to draw graphs in which one or both axes are scaled logarithmically. Looking at the graphs on page 48 makes it clear that, working in any given base, the number of digits it takes to write a number N lies between log N and (log N) + 1.

Fig. 25 A slide rule in action.

The reason information theory makes such frequent use of logarithms base two is that the most fundamental unit of information is a bit, and a bit of information represents a choice between two possibilities. The number of digital bits it takes to write out a’number N in binary notation is approximately equal to the base-two logarithm of N.

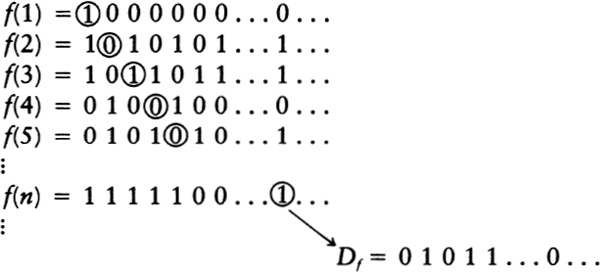

Shannon thought of information in terms of reduction of uncertainty. If someone is about to send you a message, you are uncertain about what the message will say, and the greater the number of possible messages, the greater your uncertainty. The arrival of the message reduces your uncertainty and gives you information. Shannon defines the information in the message as equal to the base-two logarithm of the total number of messages that might possibly have been sent. If we write I for his measure of information and M for the number of possible messages, then we can write Shannon’s definition as an equation:

I = log2 M.

This means that if there are only two possible messages, say “Yes” and “No,” then getting the message gives you one bit of information. Four possible messages means two bits of information, eight possible messages means three bits of information, and so on. One million is approximately two to the twentieth power, so if there are one million possible messages, each message carries some twenty bits of information. Put differently, one can choose among one million possibilities by asking twenty yes-or-no questions.

Fig. 26 In any base, log N is approximately equal to the number of digits it takes to write N.

In general, writing a number in binary notation takes about three times as many digits as it does to write the number in decimal notation. Nevertheless, we do not think of decimal notation as a more efficient code for a number, because the information cost of remembering a decimal digit is about three times the cost of remembering a binary digit. This is made quite precise by an equation that relates different-based logarithms:

log2 M = (log2 10) (log10 M).

Think of log2 M as the number of binary symbols it takes to express M; think of log10 M as the number of decimal symbols it takes to express M; and think of log2 10 as a conversion factor giving the number of binary bits it takes to choose one of the ten decimal symbols: 0, 1, 2, 3, 4, 5, 6, 7, 8, 9. Put differently, log2 10 is the information content per decimal digit. The information in M is the product of the information per digit and the number of digits it takes to write M. The value of log2 10 is, by the way, 3.32. That means a decimal digit is worth 3.32 bits of information. Log2 26 is 4.70, which means that a letter of the alphabet is worth 4.7 bits of information. (See Fig. 27.)

Whole numbers have an obstinate solidity to them. No matter how much you try, you cannot break 5 into two equal whole numbers — 5 breaks into a 1 and a 4, or into a 2 and a 3, and that’s all there is to it. Someone might say, “What about breaking 5 into 2 ½ and 2 ½?” but for now we’re not interested in that kind of talk. Right now we’re thinking of numbers as made up of unbreakable units.

A number is an eternal, universal pattern. The Greeks may have identified certain of their gods with numbers, but now those gods are forgotten, and the numbers live on. It is perhaps conceivable that some extraterrestrial races do not think in terms of number at all. Sentient clouds of gas, for instance, might never encounter any sensations discrete enough to suggest the idea of counting. But if any intelligent race does form the idea of discrete objects, we may be certain that they will arrive at just the same numbers that we use. Number is an objectively existing feature of the world.

Different numbers of units make up various kinds of patterns. In the Introduction we talked at some length about how certain archetypes are associated with some of the smaller numbers:

| 1 | monad — unity |

| 2 | dyad — opposition |

| 3 | triad — thesis-antithesis-synthesis |

| 4 | tetrad — balance of quaternity |

| 5 | quintad — a step further out |

Now let’s go on and look at some other notions that various familiar numbers suggest. In doing this, I will often refer to the Pythagoreans. Pythagoras himself lived in Greece and Italy during the sixth century B.C. He was not only a philosopher, but something of a wizard. His followers banded together, to form a kind of religious community on the island of Samos. The most influential doctrine of these “Pythagoreans” was the notion that, at the deepest level, the universe is made of numbers. In his Metaphysics, Aristotle describes the Pythagorean teaching:

They thought they found in numbers, more than in fire, earth, or water, many resemblances to things which are and become; thus such and such an attribute of numbers is justice, another is soul and mind, another is opportunity, and so on; and again they saw in numbers the attributes and ratios of the musical scales. Since, then, all other things seemed in their whole nature to be assimilated to numbers, while numbers seemed to be the first things in the whole of nature, they supposed the elements of numbers to be the elements of all things, and the whole heaven to be a musical scale and a number.

The Pythagoreans thought of numbers as embodying various basic concepts. In general, the even numbers (2, 4, 6, 8, …) were thought of as female, and the odd numbers (3, 5, 7, 9, …) were thought of as male. Two specifically stood for woman, and 3 for man. One reason for this may lie in the appearance of the male and female genitalia.

Given that 5 is the sum of 2 and 3, 5 was thought of as standing for marriage.

Another Pythagorean train of thought held 1 to stand for God, or the unified Divinity underlying the world. Two, as the first moving away from unity, stood for strife, or opinion. This interpretation of 2 is present in the notion of the dyad, which pairs opposing concepts.

Three is usually thought of as a lucky number; the Christian religionis based on the notion of a triune God. Another important feature of 3 is that three dots describe the simplest possible two-dimensional shape: the triangle. Churches in Austria and southern Germany usually have over their altars pictures of God as an eye inside a triangle. For some reason, this image has found its way onto the American dollar bill.

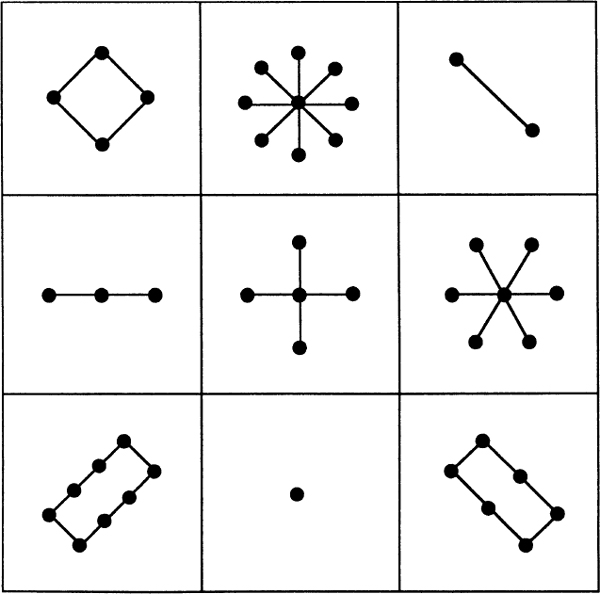

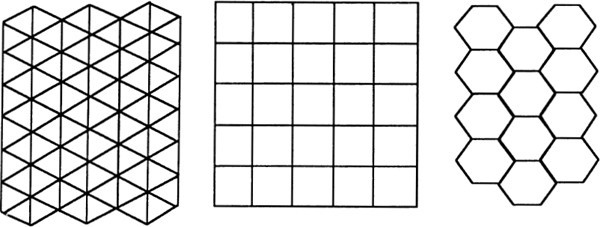

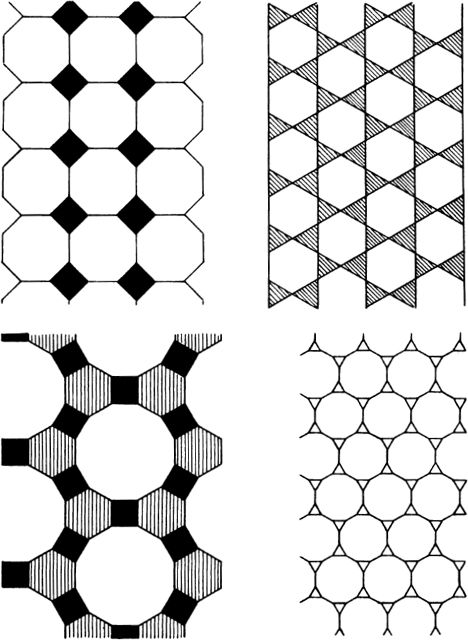

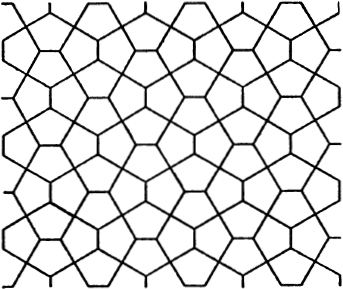

In the rest of this section I am going to be thinking of numbers primarily as patterns in space. That is, I will think of numbers as collections of dots, and will look at some of the patterns into which various-sized collections can be formed. The Pythagoreans often used space patterns to shed light on number properties. In doing this, one tries to keep the patterns as simple and as “digital” as possible; the primary topic here is, after all, number rather than space.

One of the earliest examples of people using dot patterns to represent numbers appears in the Chinese image known as the lo-shu. Here there are patterns representing the numbers 1 through 9, and these patterns are arranged into a “magic square.” Supposedly, Em-porer Yu saw the lo-shu pattern on the back of a tortoise on the banks of the Yellow River in 2200 B.C. The pattern is called a magic square because the sum of the numbers along any horizontal, vertical, or diagonal is always the same: 15.

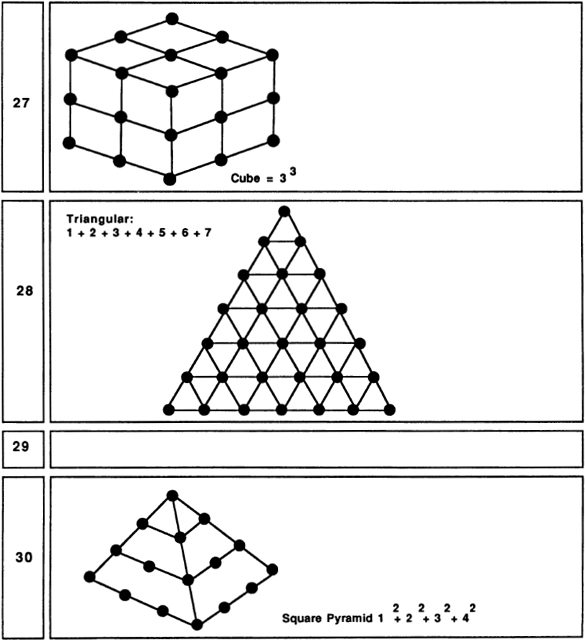

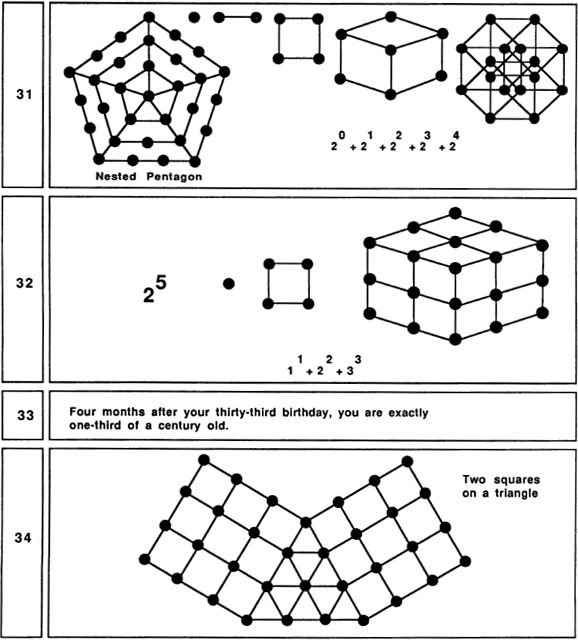

My very long Fig. 29 includes simple pictures of as many of the numbers 1 through 100 as I could come up with. Some of the pictures are traditional, some are my inventions. Readers may be able to discover some simple patterns that I have overlooked entirely. As you read through the rest of this section’s text, keep referring to Fig. 29.

Fig. 28 The lo-shu magic square.

Since 4 breaks so readily into the equal halves 2 and 2, the number 4 was thought to stand for justice. Some residue of the notion survives in the expression “a square deal.” The vertical sides of the square make square (or rectangular) shapes ideal for building things. Perhaps it is this stability that has made “square” come to mean a solid (and somewhat boring) citizen. A unique feature of 4 is that not only is it 2 plus 2, it is also 2 times 2 and 2 to the 2nd power. At this low number level the distinctions between addition, multiplication, and exponentiation are not yet fully developed.

The number 5 is significant for many reasons. A human body has five big things sticking out of it (head, two arms, two legs), and the Pythagoreans sometimes identified 5 with the body or with health. Another important human aspect of 5 is that an undamaged hand has five fingers. Followers of Pythagoras sometimes wore lucky five-pointed stars … just like sheriffs in a Western! Children usually draw houses as irregular pentagons.

Somehow 5 also acquired a negative significance during the Middle Ages. Almost any attempt to summon up the devil includes the drawing of a pentagram (a five-pointed star). One reason for this might be that it is in fact rather difficult to draw an accurate pentagram. Perhaps sorcerers used the pentagram to make it hard for amateurs to imitate their ceremonies.

Six was valued by the Pythagoreans because it has the unusual property of being the sum of its proper divisors. That is, 6 can be broken into six Is, three 2s, or two 3s, and remarkably enough, 6 = 1 + 2 + 3. The Pythagoreans called such numbers perfect numbers. (The next perfect number after 6 is 28, which breaks into twenty-eight Is, two 14s, four 7s, or two 14s, and 28 = 1 + 2 + 4 + 7 + 14.) Another interesting fact about 6 is that, like 3, it is a “triangular number,” meaning that six dots can be arranged into a regular triangular pattern. Six is important in the Old Testament, for there God made the world in six days. The six-pointed Star of David came, in later years, to be a symbol for Judaism. Two interesting natural properties of 6 are that many flowers are based on arrangements of 6, and that bees, who feed on flowers, store their honey in six-sided wax cells.

A less-familiar pattern based on six dots is the threedimensional octahedron. The octahedron can be thought of as two square-based pyramids placed base to base. Each of its faces is an equilateral triangle.

Seven is often used in the Old Testament to stand for a very large number. One reason for this might be that 7 does not allow itself to be put into any simple, symmetrical arrangement. Seven is said to be prime, meaning that it has no divisors other than 1 and itself. Of course 5 is also prime, but 5 has so many other properties that one doesn’t think of it as a “typical” prime. Seven could be called the first typical prime. Another refractory feature of 7 is that, if we limit ourselves to the simple tools of ruler and compass, it is impossible to construct a regular seven-sided figure (heptagon). All the numbers less than 7 can be put into familiar patterns, but 7 is most naturally thought of as a straight line of dots, which could be why 7 suggests the idea of largeness, or of continuing on toward infinity. The uniqueness of 7 has led people to think of it as a lucky number; this association could also have something to do with the dice game of craps, where an initial roll of 7 wins automatically. Seven has the attractive property 7 = 111TWO =1 + 2 + 4.

Numbers that can be written as a series of Is in some numeration system are called “rep-numbers.” The nice thing about rep-numbers is that they can be drawn as tree patterns where each fork has the same number of branches. One other pattern that 7 embodies is that we can arrange seven dots into a hexagonal grid.

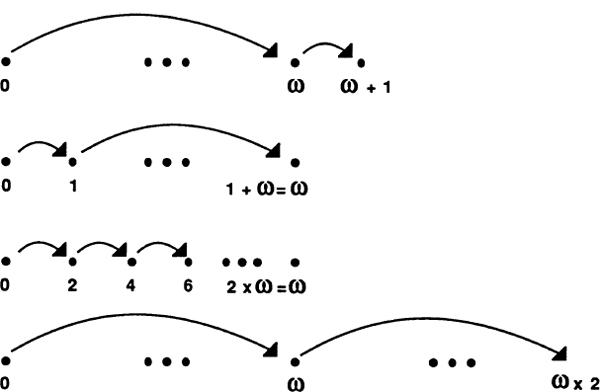

Eight is an interesting number, since it is the first number that is a perfect cube — that is, 8 = 2 × 2 × 2. Eight is, so to say, even with a vengeance, and its smooth curves make it really an even more feminine number than 2. A curious thing about our symbol for 8 is that, if we push this symbol onto its side, we get the common symbol for infinity. Perhaps it is 8’s ability to be halved, rehalved, and halved again that suggests the notion of endlessness.

Nine is the first really typical square number. If we think of it as “thrice three,” it sounds quite grand. An interesting thing about the transition from 8 to 9 is that one is going from two cubed to three squared! It is quite hard to think of nine dots without arranging them into a 3-by-3 grid; at 9 the human imagination is worn out, and we stop inventing new number symbols. One other way of thinking about nine is to imagine placing an extra dot in the center of a 2 x 2 x 2 cube to get a “centered cube.”

Fig. 29, No. 6.

Ten is where we begin using our positional decimal notation. Like 3 and 6, 10 is a triangular number: 10 = 1 + 2 + 3 + 4. (In general, any number is called triangular if it is the sum of a consecutive number sequence starting with 1.) This fact is, of course, the basis for the game of tenpins, or bowling. The Pythagoreans valued 10 highly, as 1, 2, 3, and 4 seemed so fundamental to them.

Fig. 29, No. 7.

Eleven is often thought of as a lucky number. This could be because, in our number system, 11 represents a new beginning, a fresh run through the basic sequence 1 to 9. As such, 11 makes one think of rebirth and increase. Another pleasant feature of 11 is the fact that its two digits are the same. This suggests notions of duplication and plenitude. It is also interesting that 11 is the sum of the three most magical earlier numbers: 1, 3 and 7.

Twelve is a number with very many associations. There were twelve apostles; eggs are sold in dozens; a clock shows twelve hours; a year

has twelve months; and the zodiac has twelve signs. Twelve is “more divisible” than any larger number — that is, no number after 12 is divisible by so great a proportion of the numbers less than itself.

Twelve also has the property that, if we set a triangular pattern of six dots on top of a square pattern of nine dots (with one edge overlapping), we get a kind of “square pentagon” of twelve dots.

It turns out that there are exactly five “regular polyhedra” in threedimensional space. A regular polyhedron is a solid that has two properties: (1) each corner looks the same as all the others, (2) each face is a regular polygon congruent to all the other faces. The five regular polyhedra, also known as the Platonic solids, are as follows:

| tetrahedron | a four-cornered arrangement of four triangles |

| cube | an eight-cornered arrangement of six squares |

| octahedron | a six-cornered arrangement of eight triangles |

| icosahedron | a twelve-cornered arrangement of twenty triangles |

| dodecahedron | a twenty-cornered arrangement of twelve pentagons |

Thirteen is thought of as an unlucky number. This could be because, in the New Testament, Judas is the thirteenth apostle. The notion of Friday the thirteenth being unlucky is probably related to Judas and to the Good Friday crucifixion. Another unsettling thing about 13 is that it is prime, and even more so than 7, lacking in any interesting structural properties. Another reason for 13’s unpopularity is that it contains the second, and thus perhaps false, appearance of the divine number 3. Still, if we scratch beneath the surface, we find that 13 has one very interesting feature: 13 = 9 + 3 + 1 = 111THREE.

Another pattern which can be formed with 13 dots is a “centered square.” The centered squares can be thought of as patterns of nested squares. Five, although we did not mention this before, is also a centered-square number.

Fourteen, being twice 7, is a fairly lucky-seeming number. A nice thing about 14 is that it is the sum of three perfect squares: 14 = l2 + 22 + 32. This enables us to think of 14 dots as arranged into a kind of pyramid pattern.

Fifteen is another triangular number: 15 = 1 + 2 + 3 + 4 + 5. Fifteen is also a base-two rep-number and can therefore be drawn as a tree pattern.

Sixteen is the first number that is the fourth power of a number; that is, 16 is 2 raised to the 4th power. Thus 16 suggests the mysterious notion of four-dimensional space. It is possible to draw a sixteen-cornered pattern that represents a2x2 × 2 × 2 hypercube. Sixteen can also be represented by a pattern of nested pentagons. In our society and “Sweet Sixteen” is often thought of as the age when a girl reaches womanhood.

There is really very little to say about 17. It is 8 plus 9, which is somewhat interesting, I suppose. Alternatively, one might represent 17 as a “casket” pattern: two 2 × 2 squares on either side of a 3 × 3 square.

Eighteen also provides slim pickings, but 19 is interesting in two ways. First, 19 dots fit into a nice hexagonal grid pattern; second, 19 dots can be arranged into an octahedral pattern.

Twenty is significant as the number of corners on a dodecahedron; another thing about 20 is that it is a “tetrahedral” number. We say that a number is tetrahedral if it is the sum of successive triangular numbers. Twenty spheres (try tennis balls or oranges) can be stacked in a tetrahedral pattern.

As we move further down our list of pictures, we find numbers that embody no simple pattern at all. The first really hard case is 23. For some reason 91 is one of the most exciting numbers of all!

It is interesting, even mesmerizing, to see how many patterns and connections are hidden in our first 100 numbers. I’ve thought about nothing else for a couple of weeks now, and I’d like to stop. Last night I got so bothered about ll’s lack of a good pattern that I got up out of bed to check that a tetrahedron of four billiard balls fits nicely on top of a hexagonal grid of seven billiard balls, which is something, I suppose.

But what good is all this? A good, if superficial, use for these patterns is in decorating birthday and anniversary cards. People enjoy knowing that their age (or number of years married) embodies some interesting geometrical form.

At a deeper level, this exercise gives one a much better insight into

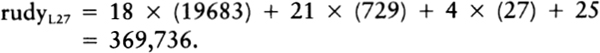

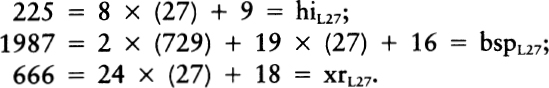

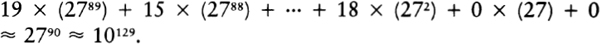

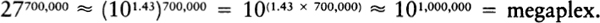

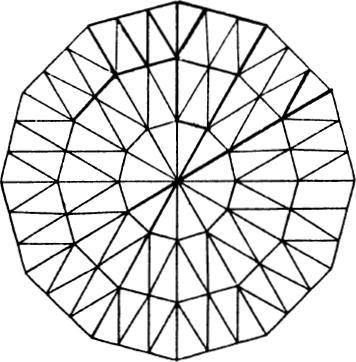

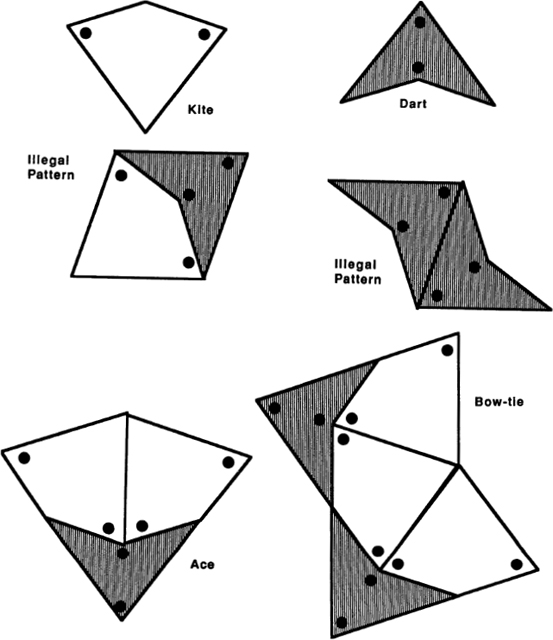

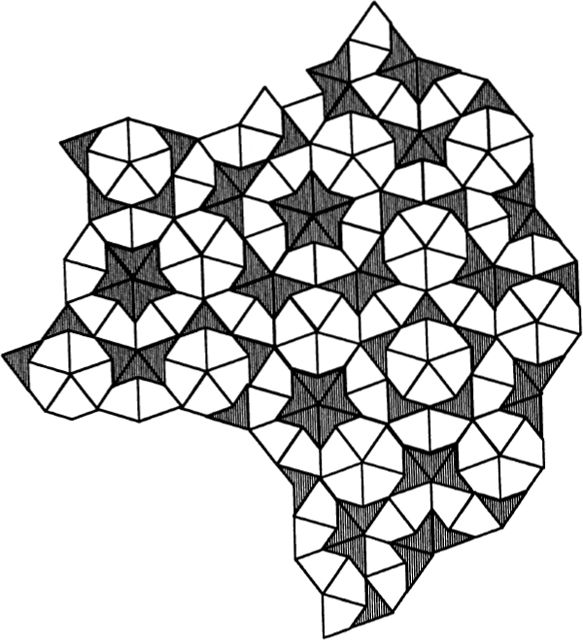

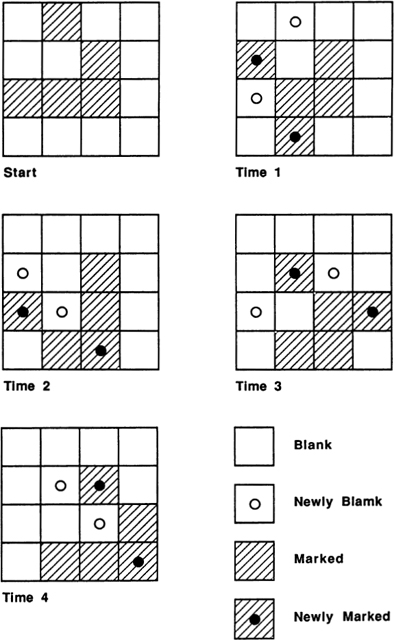

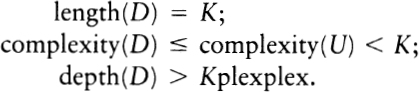

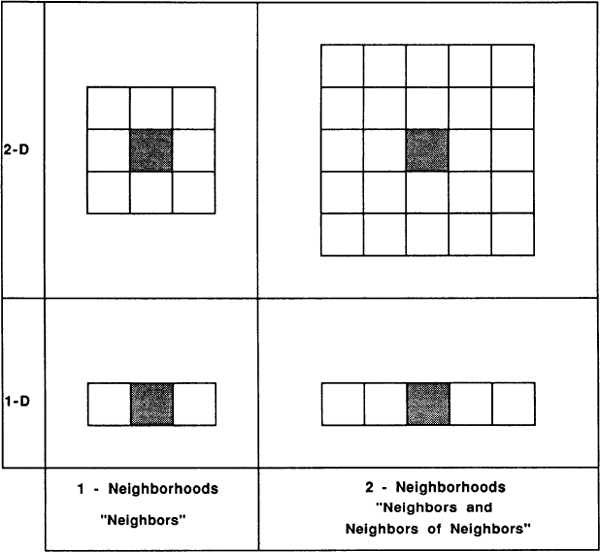

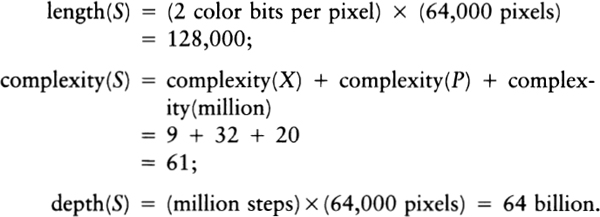

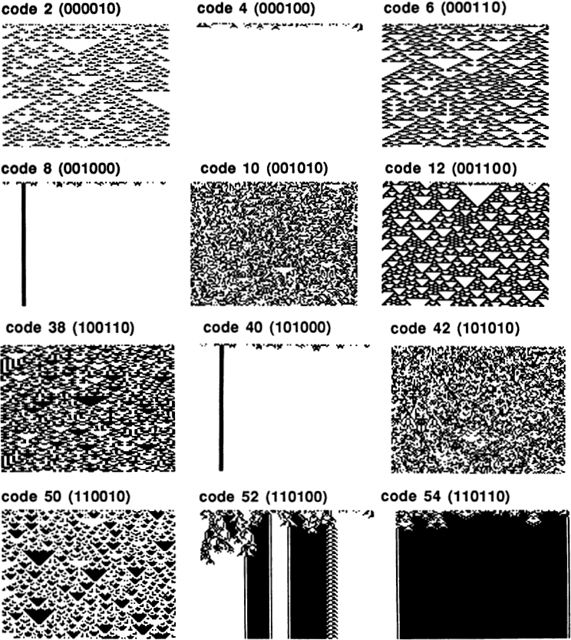

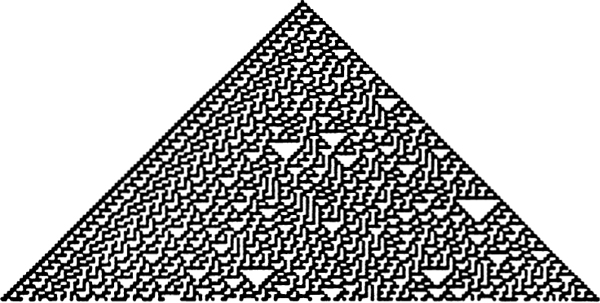

the nature of numbers. All the patterns I drew are based on combinations of small numbers. Small numbers are added, multiplied, squared, and cubed. These simple combinations yield many more patterns than one might have imagined. The endless list of numbers contains unexpected regularities, and some genuine surprises, like the rich structures of 91 and 100.